AI 3D Generation: From Prototype to Production

Today, there is almost no digital field where 3D assets haven’t found their place: CGI, games, VR/AR, physics simulations, fashion design, marketplace product renders – you name it. Over the past decades, their spread into everyday life has transformed the 3D modeler’s role from a programmer in an engineering lab to an artist using specialized software.

With the controversial, yet revolutionary AI advancements in generating text, images, video, and music, it seems only natural that 3D generation should be product-ready out of the box, requiring at most a thin wrapper over an API call. But, here’s the twist: that is simply not the case.

So, how big exactly is the gap between expectations and reality in AI-based 3D asset generation, and is there a way to bridge it right now?

| Expectation | Real Inference |

What Makes a 3D Asset Usable? Geometry, Materials, and Metadata

Let’s start from afar. The concept of 3D assets didn’t spring from computer science alone. The first mentions in a modern context date back to the Bézier curves that French automotive engineers came up with in the 1960s, followed by… Okay, stop. Maybe this is too far away from what we need.

What we really need is to understand how to represent the spatial position of an object, as well as its relevant properties, conveniently. Akin to 2D image raster and vector formats, there are two approaches for storing spatial information.

Meshes are the 3D equivalent of the raster format. We describe objects with discrete building blocks, but instead of a pixel, the minimal block here is a polygon in a 3D coordinate system: usually a triangle, though sometimes a four-cornered shape (quad) is more convenient. A single point of a mesh is called a vertex, a connection between two vertices is an edge, and a closed loop of edges that forms a polygon is a face. Simple enough. Meshes are used when artistic control, organic shapes, or real-time performance is required. A few typical mesh formats are OBJ, STL, and FBX.
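To make the vertex/edge/face vocabulary concrete, here is a minimal sketch of an indexed triangle mesh in plain Python. The layout (a vertex list plus faces that reference vertices by index) mirrors what formats like OBJ store; the helper name `unique_edges` and the sample square are illustrative assumptions, not part of any specific library.

```python
# Illustrative mesh: a unit square split into two triangles.
vertices = [
    (0.0, 0.0, 0.0),
    (1.0, 0.0, 0.0),
    (1.0, 1.0, 0.0),
    (0.0, 1.0, 0.0),
]
# Each face lists the indices of its corner vertices.
faces = [(0, 1, 2), (0, 2, 3)]


def unique_edges(faces):
    """Collect undirected edges; an edge shared by two faces is stored once."""
    edges = set()
    for face in faces:
        for i in range(len(face)):
            a, b = face[i], face[(i + 1) % len(face)]
            edges.add((min(a, b), max(a, b)))
    return edges


edges = unique_edges(faces)
print(len(vertices), "vertices,", len(edges), "edges,", len(faces), "faces")
# prints: 4 vertices, 5 edges, 2 faces
```

The diagonal edge (0, 2) is shared by both triangles, which is why the square has five edges rather than six.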

On the other hand, analogous to vector 2D images, we have CAD-like formats. These use precise, parametric, mathematical definitions (NURBS), which are the evolution of the Bézier curves we mentioned earlier. Use CAD when dimensions and manufacturing precision matter (STEP, IGES, SolidWorks). Native CAD generation and AI mesh-to-CAD converters aren’t even close to production-ready yet, so we’ll leave them off the table for now.

We covered geometry, but it’s merely the skeleton; to become a functional 3D asset, it must be fleshed out with properties. The standard for visual realism is Physically Based Rendering (PBR), which defines the metalness and roughness of a material, not just its color, while interactivity demands physical data like collision bounds and rigging. Without this metadata, a generated model is just a static mathematical shell, not a usable item for a game engine.

This “packaging” step is the true bottleneck. While AI can easily hallucinate an acceptable raw shape, it struggles to organize it into complex production formats (like FBX or USD) that bundle geometry, materials, and hierarchy together. The gap between “Expectation” and “Reality” lies here: users want a plug-and-play asset, but AI currently delivers only the raw, unpolished geometry.

| CAD | Mesh |

How AI 3D Generation Works: From a Photo to a Mesh

A “3D generation model” is rarely a single model; it is usually a complex AI system comprising several specialized networks and pre/post-processing scripts disguised under a shared name. It takes a simple input (like a text prompt or an image) and attempts to return usable geometry and textures. To understand what is actually going on under the hood, we have to look at shape and appearance separately, as they are traditionally treated as sequential tasks. We’ll look at this from the “How it started vs. How it’s going” angle.

Brief historical overview

Initially, acquiring 3D shapes was a pure engineering challenge. Techniques like Structure from Motion (SfM) and Multi-View Stereo (MVS) relied on handcrafted feature detectors (like SIFT, Lowe 2004) to mathematically triangulate points from hundreds of high-overlap images.

The Deep Learning Era shifted this approach. 3D-GANs (Wu et al., 2016) attempted to map 2D images directly to 3D voxel grids, but they hit a “cubic memory wall”: doubling the resolution increased memory usage by eight times. A workaround arrived with Neural Radiance Fields (NeRF) in 2020: by focusing on novel view synthesis, the network learns to “picture” the object from any direction rather than storing its physical volume. With these generated views, the task reduces to the classic reconstruction tools mentioned earlier, which build the mesh, so the network no longer needs to handle complex 3D geometry directly. Our separate NeRF in 2023: Theory and Practice guide covers the full training and rendering workflow in NeRFStudio, including the limitations that pushed the field toward generative methods.
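The “cubic memory wall” is easy to verify with back-of-the-envelope arithmetic. The sketch below assumes a dense grid with one float32 value per voxel; real networks store many feature channels per cell, so actual numbers are higher. The function name is illustrative.

```python
def voxel_grid_bytes(resolution, channels=1, bytes_per_value=4):
    """Memory for a dense resolution^3 grid (float32 values by default)."""
    return resolution ** 3 * channels * bytes_per_value


for res in (64, 128, 256, 512):
    mib = voxel_grid_bytes(res) / 2**20
    print(f"{res}^3 grid: {mib:,.0f} MiB")
# prints:
# 64^3 grid: 1 MiB
# 128^3 grid: 8 MiB
# 256^3 grid: 64 MiB
# 512^3 grid: 512 MiB
```

Each doubling of resolution multiplies memory by 2^3 = 8, before any activations or gradients are counted.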

All these experiments across a couple of decades laid the groundwork, but practical 3D generation required a fundamental shift in approach. The generative branch began sprouting with DreamFusion (Poole et al., 2022). It introduced Score Distillation Sampling (SDS), allowing 2D diffusion models (like Stable Diffusion) to “judge” and optimize a 3D shape until it looked correct from every angle. This was “dreaming” 3D from 2D priors and was painfully slow, but it was the final missing piece that allowed for revolutionary progress.

So, by 2022, the paradigm itself had changed. 3D generation was no longer the deterministic triangulation problem of the SfM era, which demanded lots of multi-view data. Instead, the neural networks were taught some degree of 3D space awareness, even if via indirect means. With these semantic priors, as little as a single image can be turned into a mesh. Unfortunately, the quality of those generations was far from usable.

| Unsettling DreamFusion generations |

Path to The Modern Generation

With all priors set up, a major practical shift followed in 2023 with the rise of multi-view diffusion. Since 2D generation had already shown great results, researchers tried “forcing” those models to understand 3D. Zero-1-to-3 pioneered this by fine-tuning Stable Diffusion on camera angles and 3D data, while One-2-3-45++ later fleshed out the concept into a complete 3D mesh generation pipeline.

| One-2-3-45++ generations |

Building on this in late 2023, the LRM architecture pioneered a family of Large Reconstruction Models, enabling near-instant, feed-forward generation. In this workflow, a 2D diffusion model first generates 4-6 consistent views from a single image, which LRM “stitches” into a Triplane-NeRF in about five seconds. The mesh still needs to be extracted via an algorithm like Marching Cubes, but the overall speed-up was impressive. While models like InstantMesh (2024), TripoSR (2024), and Hunyuan3D v1.0 (2024) refined this process, they couldn’t overcome the inherent flaws of Triplanes. Because Triplanes rely on 2D axis-aligned projections, they struggle with complex “concave” geometry or occlusions. Furthermore, if the initial views lack perfect pixel-alignment, the 3D output collapses into a blurry mess. Finally, Triplanes suffer from quadratic memory scaling: the same problem earlier approaches faced with 3D voxel grids, only less severe. Together, these limits capped the level of detail such models could achieve and constrained their further development.
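A toy sketch of a triplane lookup helps show where the axis-aligned limitation comes from. Everything here is a simplifying assumption: features are single floats instead of vectors, sampling is nearest-neighbor instead of bilinear, and the three plane features are simply summed, whereas real models feed them through a learned decoder.

```python
def make_plane(resolution, fill):
    """A resolution x resolution grid of scalar features (toy stand-in for vectors)."""
    return [[fill for _ in range(resolution)] for _ in range(resolution)]


def to_index(coord, resolution):
    """Map a coordinate in [-1, 1] to the nearest grid index."""
    idx = int((coord + 1.0) / 2.0 * (resolution - 1) + 0.5)
    return max(0, min(resolution - 1, idx))


def sample_triplane(planes, point):
    """Project a 3D point onto the XY, XZ and YZ planes and sum the features."""
    x, y, z = point
    res = len(planes["xy"])
    ix, iy, iz = (to_index(c, res) for c in (x, y, z))
    return planes["xy"][ix][iy] + planes["xz"][ix][iz] + planes["yz"][iy][iz]


res = 4
planes = {
    "xy": make_plane(res, 1.0),
    "xz": make_plane(res, 2.0),
    "yz": make_plane(res, 3.0),
}
planes["xy"][0][0] = 10.0  # feature stored at the (x=-1, y=-1) corner of the XY plane

# Two points that differ only in z read the very same XY cell.
front = sample_triplane(planes, (-1.0, -1.0, -1.0))
back = sample_triplane(planes, (-1.0, -1.0, 1.0))
print(front, back)

# Storage: three planes versus a dense grid at the same resolution.
print(3 * res**2, "plane cells vs", res**3, "voxel cells")
```

Every point along a line parallel to the z axis shares one XY cell, so a surface hidden behind another along that axis can only be told apart through the two remaining planes. The last line shows the memory trade: 3·N² cells instead of N³.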

| InstantMesh generations |

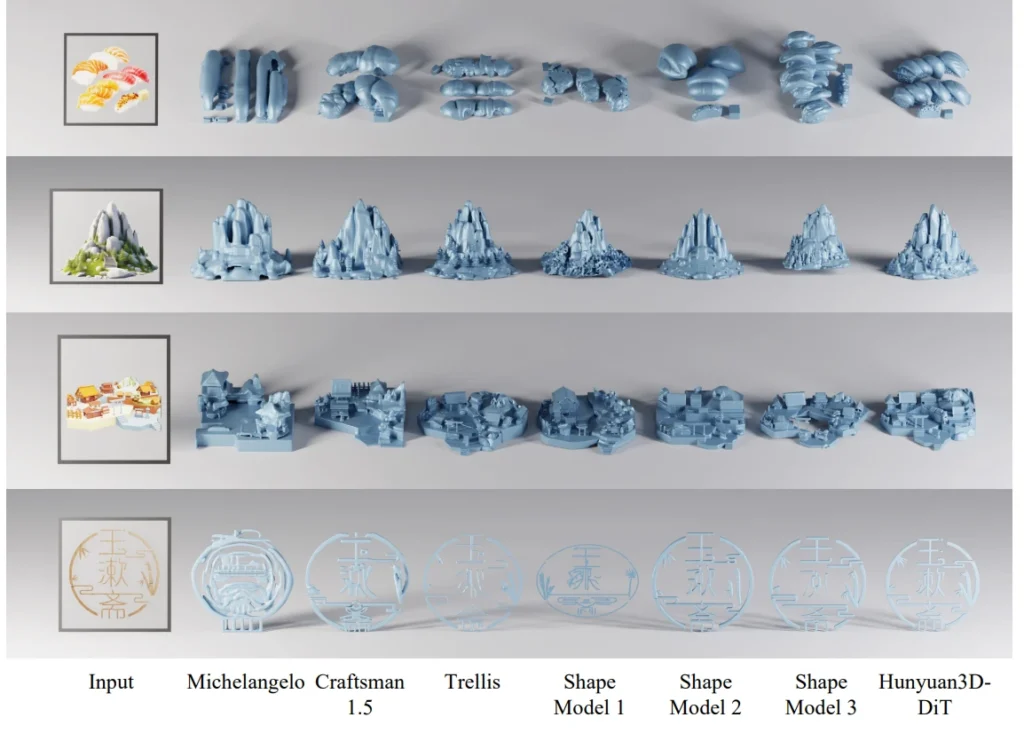

This path of trial and error leads to the current state-of-the-art: the Native 3D Era (late 2024 to present). These models generate assets directly in high-dimensional latent space, skipping the “stitching” phase and the “memory wall” entirely, much like how modern 2D models create images. Two families currently lead the field. The TRELLIS family prioritizes topological flexibility; its latest version utilizes compact structured latents (SLAT) to encode geometry and PBR materials into a sparse grid. By being “field-free” (moving away from the Signed Distance Fields (SDFs) that earlier models in this class used), it can represent complex, non-manifold geometry like open clothing or thin leaves with ease. Meanwhile, the Hunyuan3D family focuses on massive scale and precision. Hunyuan3D v3.0 leverages a 10-billion-parameter Diffusion Transformer (DiT), versus nearly 4 billion parameters in the latest TRELLIS, to treat 3D generation like a language problem, using a hierarchical “sculpting” approach. It generates a global coarse shape first and progressively refines it to ultra-high resolutions, effectively eliminating the surface noise and artifacts that plagued earlier generations.

| Hunyuan3D v2.0 shapes comparison with analogs |

Texture & Material Generation

Once the shape is solidified, it needs to be colored. Historically, this meant projecting 2D images onto a mesh (Texture Mapping). Early models like TRELLIS 1 and Hunyuan 2.0 treated this as a separate, decoupled task, which often resulted in a “plastic” look and noticeable texture drift. Modern versions have shifted toward Native PBR Generation, predicting Albedo, Metalness, and Roughness simultaneously by analyzing both the input image and local geometric details like curvature or sharpness.

TRELLIS 2 utilizes Structured Latents to predict material parameters for specific 3D points directly. By generating material tokens that are perfectly aligned with geometry tokens within the same grid, the materials are “baked in.” This provides superior localization, preventing “bleeding” between different surfaces (think about keeping a gemstone’s high-gloss shine strictly separate from a matte metal ring).

Hunyuan 2.1/3.0 uses a sequence-based latent instead of a fixed grid, so its authors came up with Mesh-Conditioned Multi-View Diffusion. It essentially “paints” the asset by observing the geometry from multiple angles. To solve the localization problem, it injects 3D coordinates into the 2D process using normal maps, canonical coordinate maps (CCM), and 3D-aware RoPE. This ensures the model recognizes a single 3D point across different views. Color and material data are kept in parallel branches that stay synchronized through shared attention.

Hunyuan3D vs TRELLIS: Comparing the SOTA AI 3D Generation Models

A model’s true value often depends on its adaptability. While proprietary systems often work well out of the box, you have no means to customize them for niche requirements. Now that the SOTA has come down to two leading “Native 3D” families, the next question is accessibility: how open are these systems? TRELLIS is completely open-source: both training and inference code are available, making it very developer-friendly. In contrast, Hunyuan3D v3.0 is currently closed and available only via API. Its predecessor, v2.1, has released weights and code, but the training script requires significant tweaking to work reliably (or to work at all), so we can consider it an “open-weights” model at best.


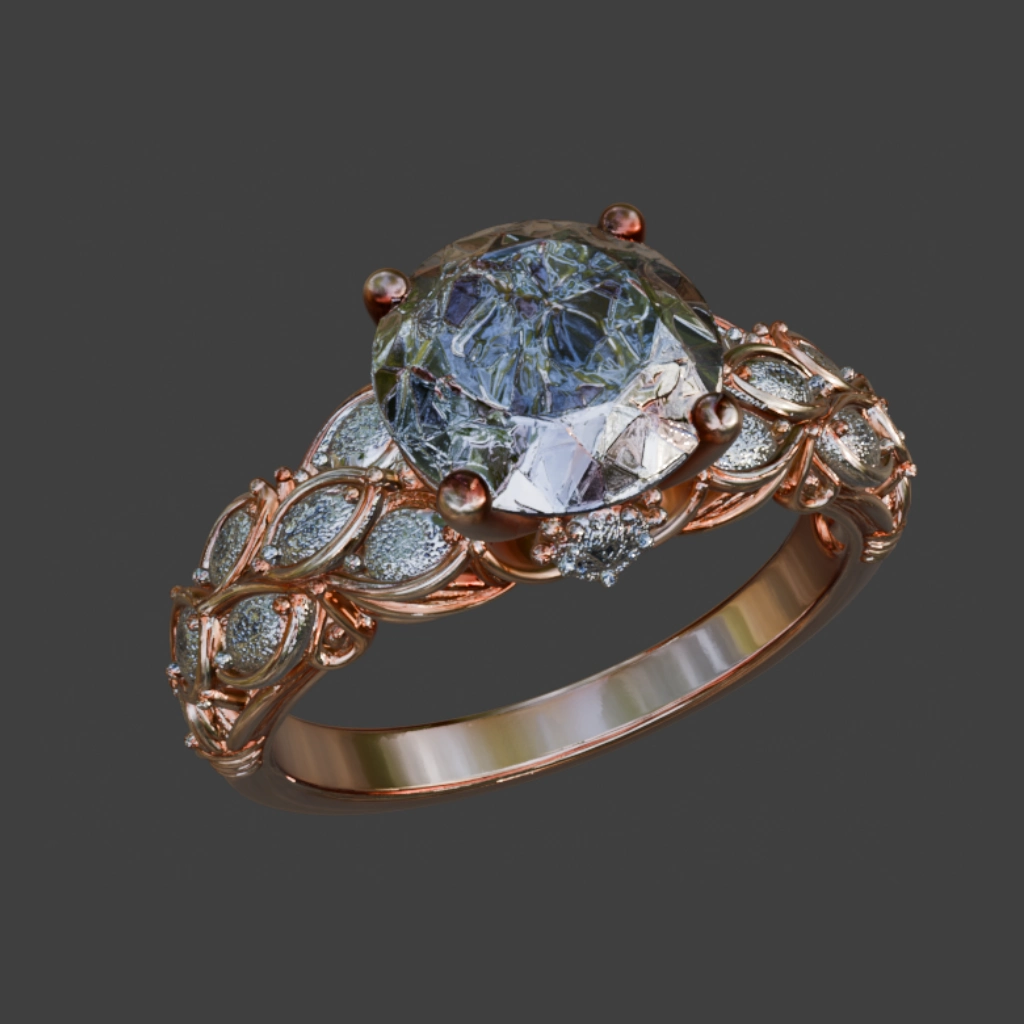

| Input image | Generated 3D still |

Simple object generation with Hunyuan v2.1 (Utah Teapot)

When we put standard shapes into these models, they come out nearly perfect, but such simple results barely provide additional value. The real test is where this technology meets industry-specific requirements, and currently, the winner is determined by how much “mess” your pipeline can tolerate. Advertising is the immediate sweet spot; since the goal is a beautiful view rather than perfect polygons, marketers can use heavy, unoptimized AI meshes for offline renders. E-commerce and entertainment sit in the middle, successfully using AI as a “prop factory” for rigid objects and background fillers where complex animation isn’t required. However, sectors such as gaming and high-end fashion remain the biggest laggards. Due to noisy topology and the absence of true physics simulation capabilities, AI still struggles to generate characters that deform correctly or dresses that realistically flow in the wind, leaving these areas stuck in a “prototype-only” phase until the next breakthrough. Video generation went through this same transition roughly two years earlier. For context on where 2D generation stands now, our AI video generation tools overview covers the current landscape; the trajectory in both fields is nearly identical. To see how this plays out in practice, let’s consider two industry examples:

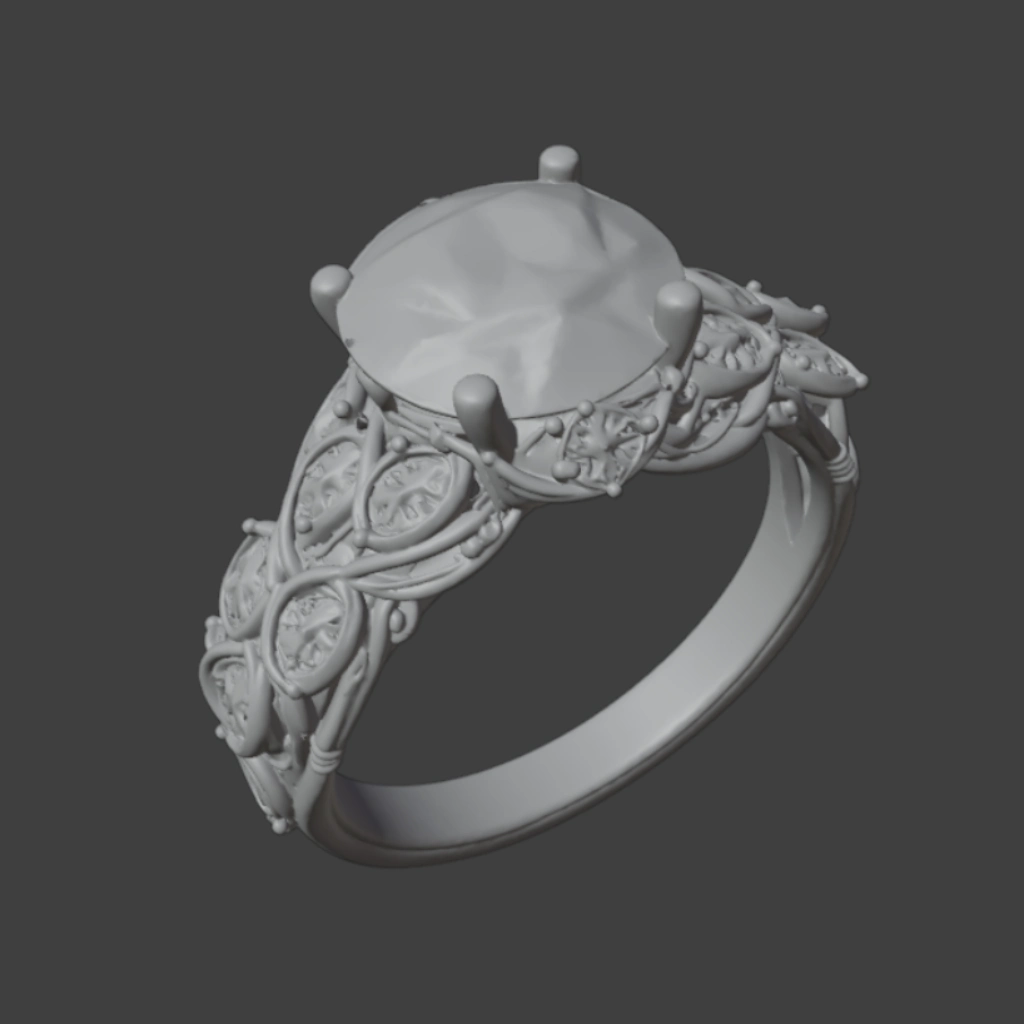

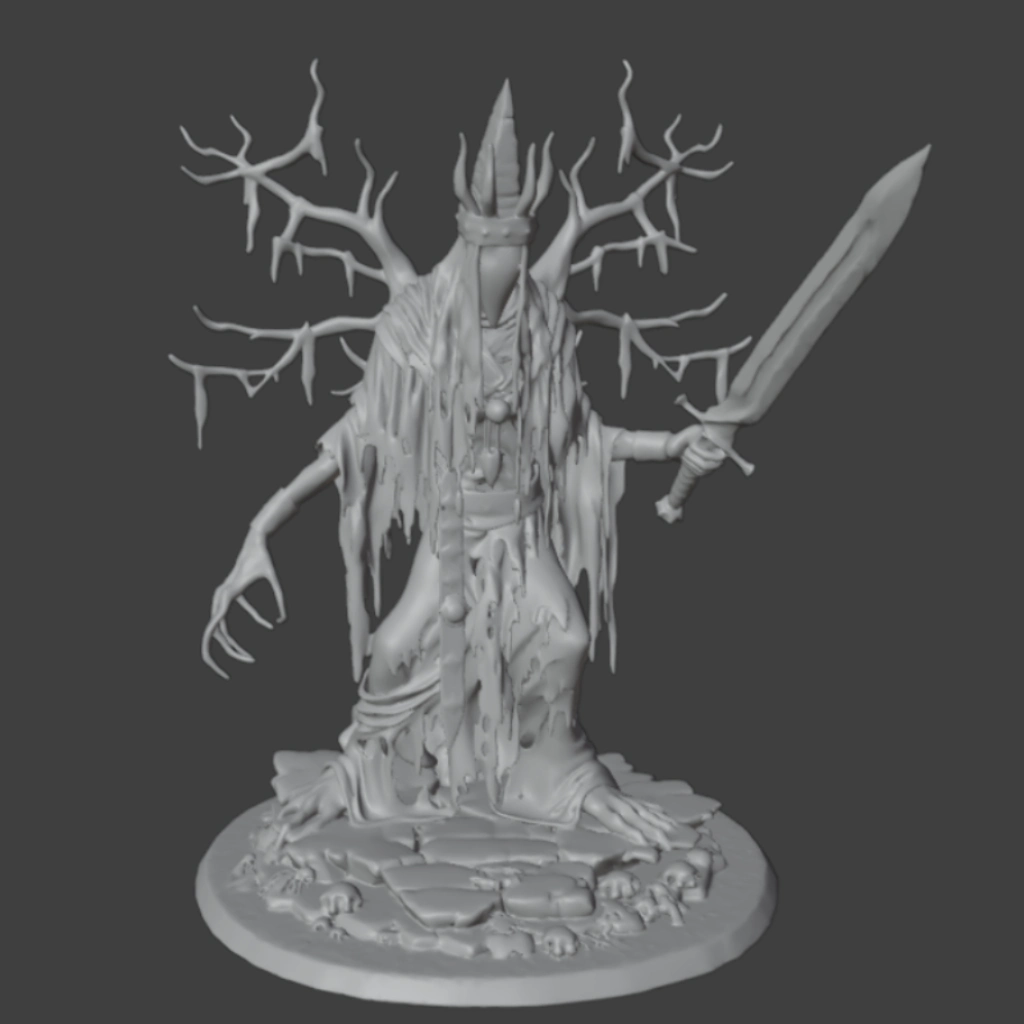

| EXAMPLE 1 | EXAMPLE 2 | |

| INDUSTRY | Advertising/E-commerce

(Jewelry) |

Manufacturing

(3D printing) |

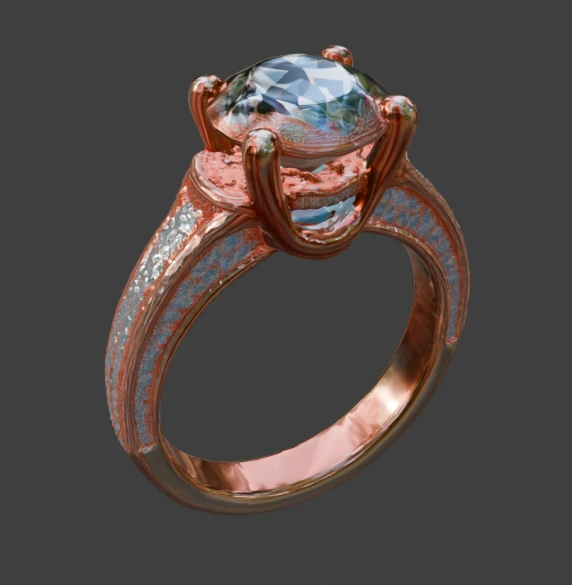

| INPUT |

A Gemstone Ring

|

A Tabletop Monster Miniature |

| CHALLENGES |

Requires sharp, hard-surface precision with reflective textures.

|

Features thin parts and a complex organic silhouette; |

| EXPECTED RESULT |

Model that represents input image 1 to 1 without hallucinations, with smooth surfaces and geometrically correct gems.

|

The model has a distinct base, thin details represented well enough to be printable, and the whole mesh is watertight. |

|

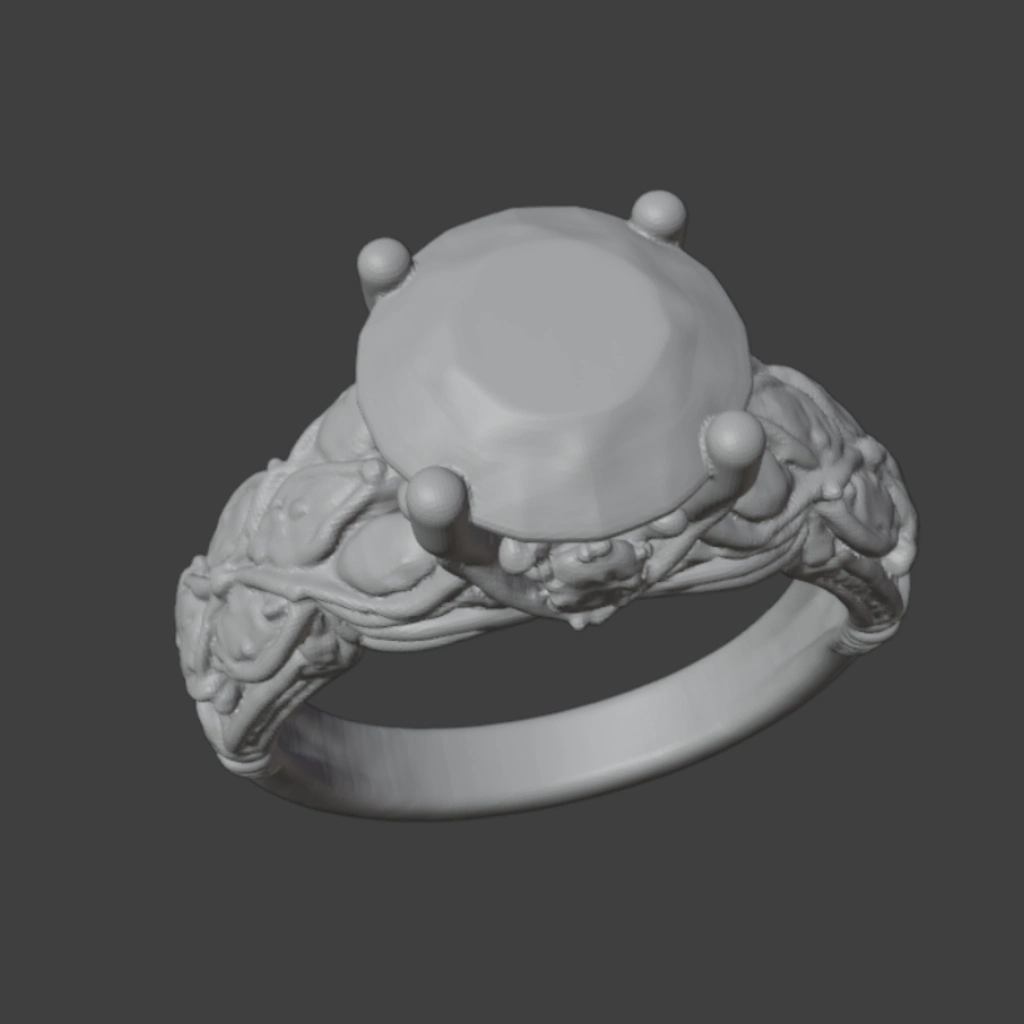

Advertising/E-commerce (Jewelry) |

|

|

|

| Input | Trellis v2 |

|

|

|

|

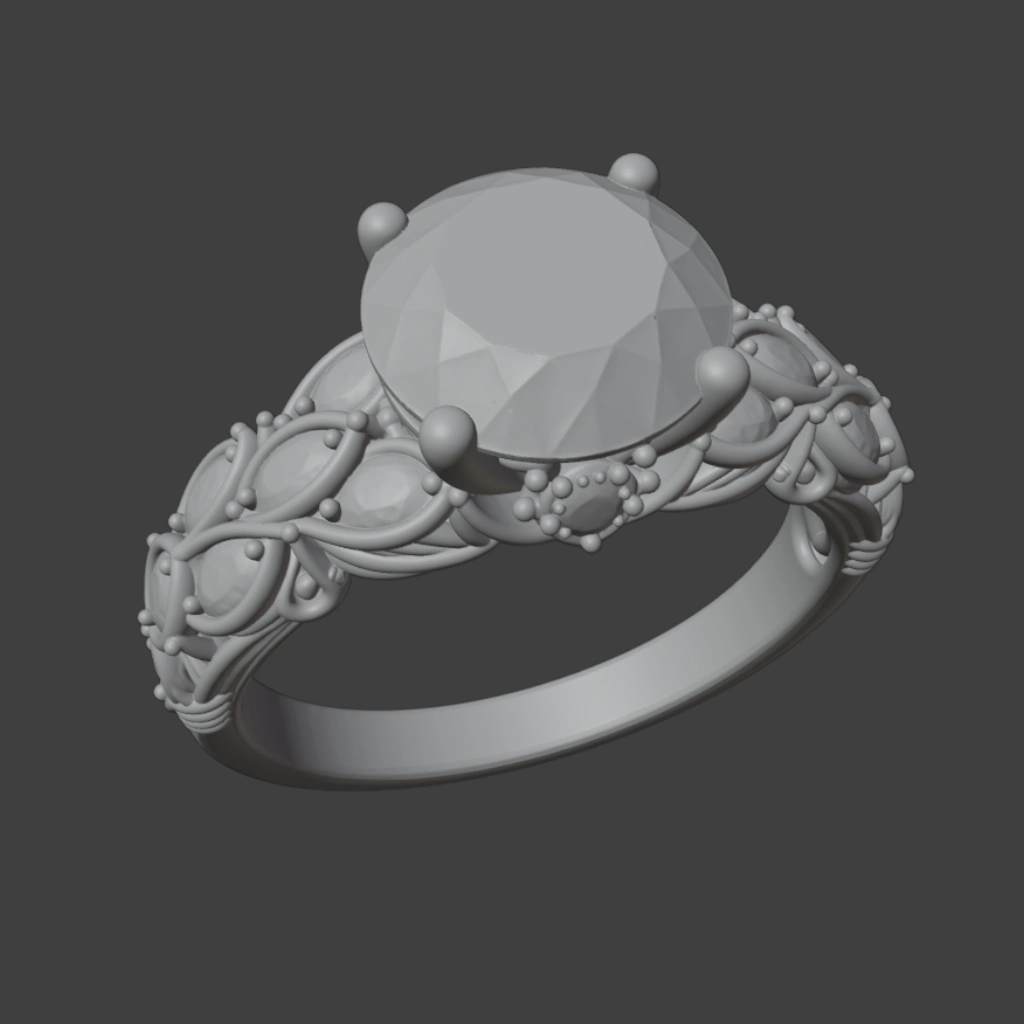

Hunyuan v2.1 |

Hunyuan v3.0 |

|

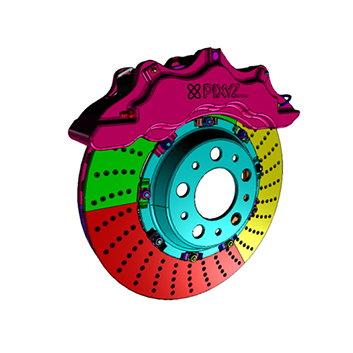

Manufacturing (3D printing) |

|

|

|

| Input | Trellis v2 |

|

|

|

|

Hunyuan v2.1 |

Hunyuan v3.0 |

At first glance, all models appear to perform well. There is an obvious pattern: the larger the model, the more satisfactory the results look (and the longer inference takes). But does this trend truly hold under closer inspection?

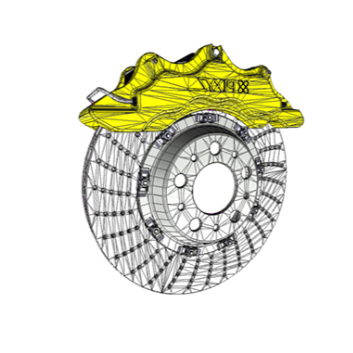

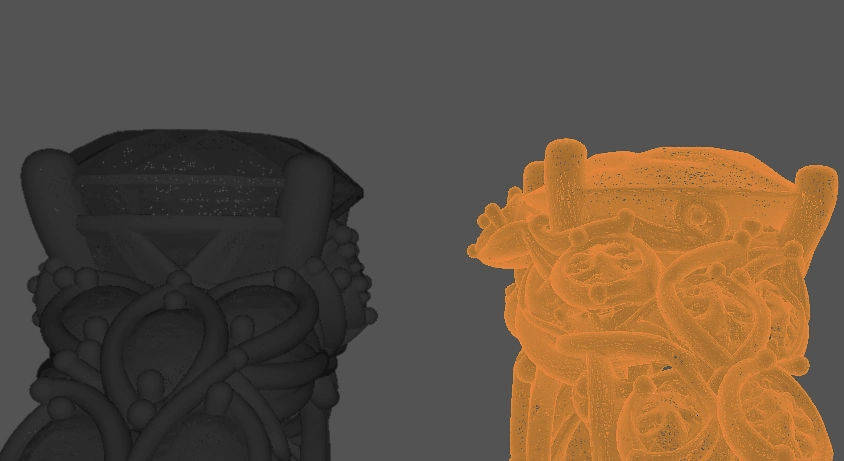

|

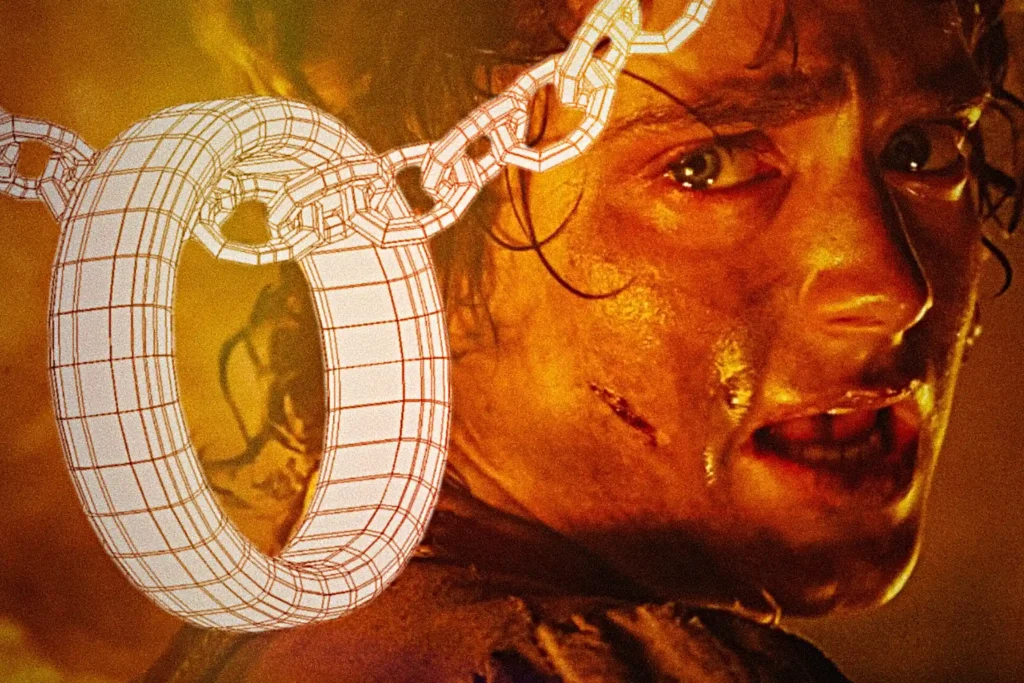

| Hunyuan v3.0 (left) and Trellis 2 (Right) generations’ wireframes |

Under a digital microscope, the clean surface dissolves into a wireframe of millions of chaotic triangles. When a human models a mesh, components like gems are built with minimal polygons and a logical structure, making minor edits as simple as moving a few vertices. You can also predict a skilled modeler’s quality based on their portfolio. Generative AI is different. Most algorithms extract meshes from latents with extremely high-density topology, making manual sculpting or editing a nightmare. It’s often faster to remodel the entire sample than to fix the topology by hand. Furthermore, performance is inconsistent; while models handle familiar shapes well, they often struggle with specific details or views, as Trellis does with gems.

| Trellis v2 | Hunyuan v3.0 |

| Close-up of Hunyuan v3.0 textures |

On to textures. While Hunyuan v3.0 wins on fidelity, Trellis v2 is better at keeping materials from bleeding into one another. However, both models struggle with PBR. Shadows and highlights are incorrectly painted onto the albedo rather than being handled by metalness and roughness channels. Because distinct materials are merged into a single layer, reusability and editing are nearly impossible. Often, what appears to be complex geometry is actually a “hallucinated” flat texture. For instance, Hunyuan v3.0 makes small diamonds look more like apricot seeds.

So, what are we left with?

The current state of 3D AI follows an 80-20 rule. For familiar objects and clean camera angles, you can expect nearly perfect results. However, that final 20% is incredibly difficult to close. Unlike 2D AI, where you can easily fix flaws with Photoshop or inpainting, 3D models produce assets with such high-density, “messy” topology that polishing turns into a nightmare.

While all this may sound critical, the field has, in fact, skyrocketed recently. The leap from DreamFusion to models like Hunyuan v3 is massive, with new major breakthroughs still dropping every few months. We can get immense value from these tools right now, but they aren’t “plug and play.” They require specific tailoring, heavy post-processing, and cleanup to be production-ready. If you don’t think through your workflow, the costs for computing and polishing labor will add up quickly.

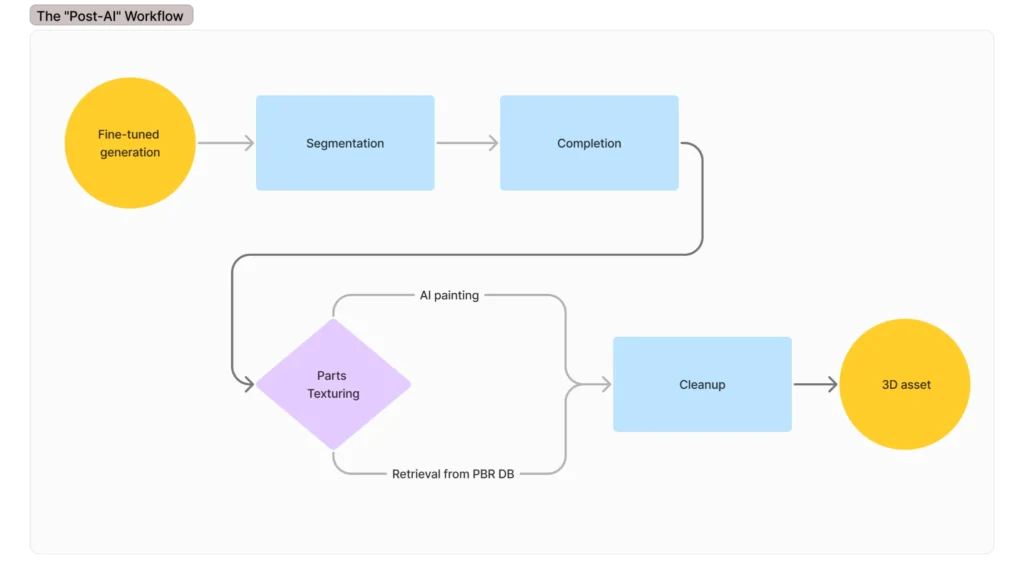

Custom AI 3D Generation Pipelines: How IT-JIM Builds Them

Turning raw AI output into a professional asset is where the actual engineering happens. You need a structured pipeline that takes raw generations as “digital clay” and refines them into production-ready geometry through five essential stages. The same mesh problems show up in device-based photogrammetry. Our 3D reconstruction on iOS guide covers the ObjectCapture workflow, which hits the same mesh density and topology walls described here.


The process begins with the first stage: Fine-tuning Generation. Since 3D generation models (TRELLIS, Hunyuan) are more temperamental than 2D tools like Stable Diffusion, you often need to adapt open-source models to your specific category or camera angles. This requires significant data and compute, as standard training scripts rarely run optimally (or correctly, in the case of Hunyuan) without heavy modification. If anything goes wrong, as it often does with open-source code, check the issue tracker of the source repository. Even then, fine-tuning has limits and cannot fix every edge case. But it is almost always worth the effort, as each step down the line depends on the quality of the initial generation.

The next stage is Segmentation, where you split a single, unmanageable mesh into components. Open-source models like P3-SAM, SAMesh, or PartField serve as the backbone here. Instead of fighting one massive blob, segmenting allows you to run specialized logic on different parts: fixing an important part with high fidelity while keeping the base as-is, or replacing it with a preset. This stage is the foundation for organized editing and material management, but it often takes more resources than the generation itself.
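Once a segmentation model has produced a label per face, the split itself is plain bookkeeping. The sketch below assumes the labels already exist (the `face_labels` list is a hypothetical stand-in for the output of a model such as those named above) and only shows how to carve one indexed mesh into per-part meshes with re-indexed vertices.

```python
from collections import defaultdict


def split_by_label(vertices, faces, face_labels):
    """Return {label: (part_vertices, part_faces)} with faces re-indexed per part."""
    grouped = defaultdict(list)
    for face, label in zip(faces, face_labels):
        grouped[label].append(face)

    parts = {}
    for label, label_faces in grouped.items():
        remap = {}           # old vertex index -> new index within this part
        part_vertices = []
        part_faces = []
        for face in label_faces:
            new_face = []
            for old in face:
                if old not in remap:
                    remap[old] = len(part_vertices)
                    part_vertices.append(vertices[old])
                new_face.append(remap[old])
            part_faces.append(tuple(new_face))
        parts[label] = (part_vertices, part_faces)
    return parts


vertices = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0), (2, 0, 0), (2, 1, 0)]
faces = [(0, 1, 2), (0, 2, 3), (1, 4, 5), (1, 5, 2)]
face_labels = ["band", "band", "gem", "gem"]  # hypothetical segmentation output

parts = split_by_label(vertices, faces, face_labels)
for label, (part_vertices, part_faces) in parts.items():
    print(label, len(part_vertices), "vertices,", len(part_faces), "faces")
```

Vertices on the seam (indices 1 and 2 here) are duplicated into both parts, so each part can be edited or replaced independently.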

Because standard splitting often leaves “holes” where segments connect, Completion becomes the next vital stage, powered by models like X-part or HoloPart (both are open-source). This phase uses the initial mesh to “hallucinate” missing geometry, ensuring every segmented part is a separate, manifold object. This makes the assets physically viable rather than just visual shells, but comes at the cost of high memory requirements and additional time.
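A quick way to see whether a part still has “holes” is to look for boundary edges, meaning edges used by only one face. The sketch below is a simple edge-count check in plain Python; it is a necessary condition for a closed manifold surface rather than a full validity test (it ignores face orientation and self-intersections), and the function names are illustrative.

```python
from collections import Counter


def edge_counts(faces):
    """Count how many faces use each undirected edge."""
    counts = Counter()
    for face in faces:
        n = len(face)
        for i in range(n):
            a, b = face[i], face[(i + 1) % n]
            counts[(min(a, b), max(a, b))] += 1
    return counts


def boundary_edges(faces):
    """Edges that belong to exactly one face; a closed surface has none."""
    return sorted(edge for edge, n in edge_counts(faces).items() if n == 1)


def is_watertight(faces):
    """True when every edge is shared by exactly two faces."""
    counts = edge_counts(faces)
    return bool(counts) and all(n == 2 for n in counts.values())


tetrahedron = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
open_part = tetrahedron[:-1]  # drop one face, as if a segmentation cut removed it

print(is_watertight(tetrahedron))  # True
print(is_watertight(open_part))    # False
print(boundary_edges(open_part))   # [(0, 2), (0, 3), (2, 3)]
```

The three boundary edges outline exactly the triangle that a completion step would have to fill in.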

Texture Assignment follows as the next stage in the pipeline, because AI textures generated for the whole mesh rarely meet production standards. By having distinct geometry for different components, you can assign PBR materials from a high-quality database or run painting models on individual parts, ensuring that metal looks like metal and cloth looks like cloth.
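With per-part geometry in hand, assigning materials from a database can be as simple as a lookup keyed by part label. The sketch below is hypothetical throughout: the library entries, their numeric values, and the label-to-material mapping are illustrative placeholders, while the three parameters follow the common metallic-roughness PBR convention.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PBRMaterial:
    base_color: tuple   # linear RGB, each channel in [0, 1]
    metallic: float     # 0.0 = dielectric, 1.0 = metal
    roughness: float    # 0.0 = mirror-like, 1.0 = fully diffuse


# Hypothetical material library; the values are illustrative, not measured.
MATERIAL_LIBRARY = {
    "polished_gold": PBRMaterial((1.0, 0.77, 0.34), metallic=1.0, roughness=0.15),
    "cut_gemstone": PBRMaterial((0.9, 0.1, 0.2), metallic=0.0, roughness=0.05),
    "matte_default": PBRMaterial((0.5, 0.5, 0.5), metallic=0.0, roughness=0.8),
}

# Hypothetical mapping from segmentation labels to library entries.
PART_TO_MATERIAL = {"band": "polished_gold", "gem": "cut_gemstone"}


def assign_materials(part_labels):
    """Pick a library material per part, falling back to a neutral default."""
    return {
        label: MATERIAL_LIBRARY[PART_TO_MATERIAL.get(label, "matte_default")]
        for label in part_labels
    }


assigned = assign_materials(["band", "gem", "base"])
for label, material in assigned.items():
    print(label, material)
```

Because lighting is left to the renderer, no shadows or highlights end up baked into the base color, which is the failure mode described for the generated textures earlier in the article.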

Finally, the Cleanup stage addresses the chaotic topology. By using sequence-based tools like MeshAnything v2 that place vertices similar to a human artist, or applying remeshing algorithms to redistribute polygons, you can turn dense, uneditable topology into clean, even surfaces. It is far more reliable to retopologize these simple, segmented pieces than to attempt to fix a complex, branching mesh all at once.
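As a rough illustration of what “redistributing polygons” means, here is a minimal sketch of vertex clustering, one of the simplest decimation techniques: vertices falling into the same grid cell are merged into their average, and faces that collapse are dropped. This is a swapped-in textbook method for illustration only; it is not how MeshAnything v2 works and it does not produce artist-grade topology.

```python
def cluster_vertices(vertices, faces, cell_size):
    """Merge vertices sharing a grid cell, then drop collapsed and duplicate faces."""
    cell_to_new = {}   # grid cell -> new vertex index
    sums = []          # per new vertex: [sum_x, sum_y, sum_z, count]
    old_to_new = []    # old vertex index -> new vertex index

    for x, y, z in vertices:
        cell = (int(x // cell_size), int(y // cell_size), int(z // cell_size))
        if cell not in cell_to_new:
            cell_to_new[cell] = len(sums)
            sums.append([0.0, 0.0, 0.0, 0])
        acc = sums[cell_to_new[cell]]
        acc[0] += x
        acc[1] += y
        acc[2] += z
        acc[3] += 1
        old_to_new.append(cell_to_new[cell])

    new_vertices = [(sx / n, sy / n, sz / n) for sx, sy, sz, n in sums]

    new_faces = []
    seen = set()
    for face in faces:
        mapped = tuple(old_to_new[i] for i in face)
        if len(set(mapped)) < 3:
            continue  # face collapsed to an edge or a point
        key = frozenset(mapped)
        if key in seen:
            continue  # same triangle already emitted
        seen.add(key)
        new_faces.append(mapped)
    return new_vertices, new_faces


# Dense test patch: a 5 x 5 vertex grid over the unit square, 32 triangles.
n = 5
vertices = [(0.25 * i, 0.25 * j, 0.0) for j in range(n) for i in range(n)]
faces = []
for j in range(n - 1):
    for i in range(n - 1):
        a = j * n + i
        faces.append((a, a + 1, a + n + 1))
        faces.append((a, a + n + 1, a + n))

new_vertices, new_faces = cluster_vertices(vertices, faces, cell_size=0.5)
print(len(vertices), "->", len(new_vertices), "vertices")
print(len(faces), "->", len(new_faces), "faces")
```

The cell size is the only knob: larger cells give fewer polygons but blur small features, which is one reason running this kind of step on simple segmented parts is safer than on the whole mesh.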

The Current State of AI 3D Generation: Strengths, Limits, and What to Expect Next

Tracing the evolution of 3D AI from basic photogrammetry to sophisticated “Native 3D” models makes one thing clear: the technology has not yet achieved “out-of-the-box” usability for professional workflows. The gap between a chaotic AI hallucination and a production-ready asset cannot be bridged by simply waiting for a better model; it requires fine-tuning and the implementation of a robust post-generation workflow. This pipeline might involve segmenting the mesh into essential components, replacing parts with high-quality presets, or retopologizing for clean geometry. It may also require thickening thin parts to meet 3D printing requirements, assigning realistic PBR textures for a final render, or many other steps, depending on the specific use case.

While current technology excels at generating visual prototypes suitable for advertising and e-commerce, it still falls short of the rigorous topological and physical standards required for high-end manufacturing or gaming. In conclusion, we are not waiting for a model that does everything, but rather building the workflows that yield results here and now. As AI quality improves, it will open doors to previously unreachable industries. This progress creates a paradox: the better the models get, the more crucial the adaptation layer becomes to move from a simple visual prototype to a complex, physics-ready asset. If you are evaluating whether to build this kind of pipeline in-house or with a partner, our computer vision development services page covers how we work.

AI 3D Generation for Games, Jewelry, Fashion, and Advertising

The state of 3D AI plays out very differently depending on what you need to do with the output. In some industries the current models are good enough to ship; in others, a custom post-processing pipeline is the only thing that separates a prototype from a product.

Game studios

Game studios spend real money on environmental assets, props, and background characters that players rarely look at directly. AI 3D generation can already automate much of that work: rigid objects, furniture, vehicles. That frees artists for the characters and hero items that require real craft. The catch is topology: game engines want clean, low-poly meshes with proper UV maps, and raw AI output is neither. A post-generation pipeline that covers segmentation, retopology, and LOD generation turns that raw geometry into something a game engine can actually use. IT-JIM builds that kind of pipeline for studios that need to grow asset output without growing the team.

Jewelry and accessories retail

Jewelry e-commerce is a strong fit. Brands that add 3D and AR product views see higher conversion rates, and rings, pendants, and watches are exactly the rigid, hard-surface objects current models handle best. The problem is precision: gemstones and metallic settings require accurate PBR material separation that out-of-the-box models consistently get wrong. A pipeline fine-tuned on a brand’s own SKU catalog, paired with a curated material database for metals and stones, can produce render-ready assets at scale. The ring example from earlier in this article shows exactly what the starting point looks like. A custom pipeline is what closes that gap.

Fashion and apparel

Fashion is split. Anything that drapes or stretches still defeats current generation models. Rigid accessories (bags, shoes, eyewear) are a different story. A well-designed pipeline can triage SKUs by category: send accessories through the automated route and send garments to a 3D artist. That split alone cuts the cost per digital asset significantly.

Product visualization and advertising

Campaign renders only need to look right in a still image or short video. Nobody inspects the wireframe. AI-generated geometry, even with dense topology, is perfectly usable for offline rendering. A pipeline that goes from product photography to render-ready 3D asset is a practical, lower-cost alternative to commissioning a 3D modeler for each SKU.

Building production-ready 3D pipelines

The post-generation workflow in this article is real engineering work. Getting from a raw AI mesh to something production-ready requires expertise across computer vision, 3D geometry processing, and model fine-tuning. Clean topology, proper materials, and the right output format for your renderer or game engine do not come automatically. IT-JIM builds that pipeline for clients who need working output, not proof-of-concept demos.

We have fine-tuned open-source models like TRELLIS and Hunyuan on domain-specific data, built segmentation tools, and written retopology and material assignment steps that turn a dense AI mesh into something a game engine or e-commerce renderer can actually use. Several of those projects are in our portfolio.

If you are scoping out an AI 3D generation project in product visualization, game asset production, jewelry e-commerce, or another area and need a team that has already solved the post-generation side, get in touch.