Automatic Floor Segmentation Using Computer Vision

Automatic floor segmentation serves many purposes, including mixed reality (MR) applications, interior design, entertainment, estimating the available space in a room, and indoor robot navigation. In this project, we address a scene understanding problem: determining which pixels of an image belong to the floor.

The problem of floor segmentation is a good example of how the same task can be solved with classical computer vision algorithms or with deep learning. As often happens, a combination of these methods gives the best result.

Floor Segmentation Using Classical Pipeline

We start our experiments with superpixels, as they are one of the most widely adopted techniques for indoor image segmentation. We use simple linear iterative clustering (SLIC), which clusters pixels based on their color similarity and their proximity in the image plane.

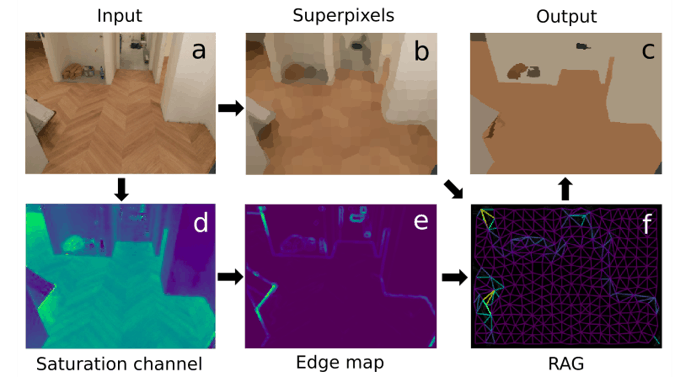
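The clustering idea behind SLIC can be sketched in a few lines of NumPy. This is a simplified illustration and not the implementation used in the project: it runs plain k-means over color plus scaled pixel coordinates, and it omits the local search window and connectivity enforcement of real SLIC. The function name `slic_like` and the parameters `grid`, `compactness`, and `n_iter` are hypothetical.

```python
import numpy as np

def slic_like(image, grid=4, compactness=10.0, n_iter=5):
    """Simplified SLIC-style clustering: k-means on [color, scaled y, scaled x]."""
    h, w, c = image.shape
    step = max(h, w) / grid  # approximate spacing between superpixel centers
    ys, xs = np.mgrid[0:h, 0:w]
    # Scale coordinates so that `compactness` trades off color similarity
    # against proximity in the image plane.
    spatial = np.stack([ys.ravel(), xs.ravel()], axis=1) * (compactness / step)
    feats = np.hstack([image.reshape(-1, c).astype(float), spatial])

    # Initialize grid * grid cluster centers on a regular pixel grid.
    cy = ((np.arange(grid) + 0.5) * h / grid).astype(int)
    cx = ((np.arange(grid) + 0.5) * w / grid).astype(int)
    centers = feats[(cy[:, None] * w + cx[None, :]).ravel()].copy()

    for _ in range(n_iter):
        # Assign every pixel to the nearest center in the joint feature space.
        d2 = ((feats[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        # Move each center to the mean of the pixels assigned to it.
        for k in range(len(centers)):
            members = feats[labels == k]
            if len(members):
                centers[k] = members.mean(axis=0)
    return labels.reshape(h, w)
```

The all-pairs distance matrix makes this sketch practical only for small images; real SLIC restricts each center's search to a local window, which is what keeps it fast.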

Since a straightforward application of superpixels does not yield a perfectly segmented floor, we build a more elaborate image processing pipeline. Its steps are illustrated in the figure below and include:

- transforming the RGB input image (a) into the HSV color space

- extracting SLIC superpixels (b)

- obtaining an edge map (e) from the S-channel image (d)

- constructing a region adjacency graph (RAG) (f) from the combination of the superpixel image and the edge map

- hierarchically merging the RAG to obtain the final image clustering (c)

The main steps of the classical pipeline.

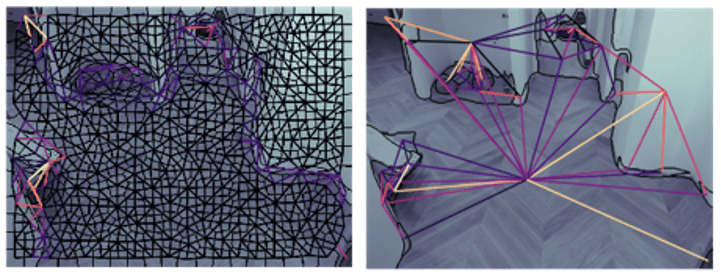
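The color-space and edge-map steps can be sketched as follows. The saturation formula is the standard HSV definition; the edge detector is an assumption, since the text does not say which one was used, so a plain gradient magnitude stands in for it here. The function names `saturation_channel` and `edge_map` are hypothetical, and the input is assumed to be an 8-bit RGB array.

```python
import numpy as np

def saturation_channel(rgb):
    """S channel of HSV for an 8-bit RGB image: (max - min) / max, or 0 where max is 0."""
    rgb = rgb.astype(float) / 255.0
    cmax = rgb.max(axis=2)
    cmin = rgb.min(axis=2)
    sat = np.zeros_like(cmax)
    nonzero = cmax > 0
    sat[nonzero] = (cmax[nonzero] - cmin[nonzero]) / cmax[nonzero]
    return sat

def edge_map(channel):
    """Gradient magnitude of a single channel, normalized to [0, 1]."""
    gy, gx = np.gradient(channel)  # finite-difference derivatives along rows, columns
    mag = np.hypot(gx, gy)
    peak = mag.max()
    return mag / peak if peak > 0 else mag

# Usage: a gray region (S = 0) next to a pure red region (S = 1).
rgb = np.zeros((6, 6, 3), dtype=np.uint8)
rgb[:, :3] = 128
rgb[:, 3:, 0] = 255
edges = edge_map(saturation_channel(rgb))  # strongest response at the region boundary
```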

The most important step in the classical pipeline is the agglomerative hierarchical merging of the RAG. We analyze the edge map intensity between each pair of neighboring superpixels and join the pairs whose edge intensity is below a certain threshold. We do this iteratively, starting from the weakest edges, and end up with a few homogeneous regions separated by strong edges. The figure below shows the RAG before and after hierarchical merging. The borders of the regions are shown in black.

The RAG before (left) and after (right) hierarchical merging.
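The merging rule can be illustrated with a small union-find sketch in plain Python. It is a simplification under stated assumptions: edge weights stay fixed, whereas a full hierarchical merge would typically recompute the weights of a merged region's edges after every step. The function name `merge_rag` and the `(weight, node_a, node_b)` edge format are hypothetical.

```python
def merge_rag(n_nodes, edges, threshold):
    """Merge RAG nodes across edges weaker than `threshold`, weakest first.

    edges: iterable of (weight, node_a, node_b) tuples.
    Returns one region label per node.
    """
    parent = list(range(n_nodes))

    def find(i):
        # Follow parent links to the region root, shortening the path on the way.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for weight, a, b in sorted(edges):
        if weight >= threshold:
            break  # all remaining edges are strong and stay as region borders
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a
    return [find(i) for i in range(n_nodes)]

# Four superpixels in a chain; only the middle edge is strong.
labels = merge_rag(4, [(0.1, 0, 1), (0.9, 1, 2), (0.2, 2, 3)], threshold=0.5)
print(labels)  # [0, 0, 2, 2]
```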

Since the classical approach is very sensitive to parameter tuning, we run the classical pipeline several times with different parameters, which produces many binary segmentation masks. These masks are combined into a single mask by per-pixel majority voting, with additional thresholding to balance precision and recall for the floor class.
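A minimal sketch of the voting step, assuming the thresholding is applied to the fraction of runs that labeled a pixel as floor. The function name `vote_masks` and the parameter `min_fraction` are hypothetical; raising `min_fraction` favors precision, lowering it favors recall.

```python
import numpy as np

def vote_masks(masks, min_fraction=0.5):
    """Combine binary masks: a pixel is floor if more than `min_fraction` of masks agree."""
    stack = np.stack([np.asarray(m, dtype=bool) for m in masks])
    fraction = stack.mean(axis=0)  # per-pixel share of runs voting "floor"
    return fraction > min_fraction

# Three runs of the pipeline on a 1 x 3 image.
runs = [
    np.array([[1, 1, 0]]),
    np.array([[1, 0, 0]]),
    np.array([[1, 1, 0]]),
]
majority = vote_masks(runs)                   # simple majority
strict = vote_masks(runs, min_fraction=0.7)   # stricter threshold
```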

Floor Segmentation Using Deep Learning Pipeline

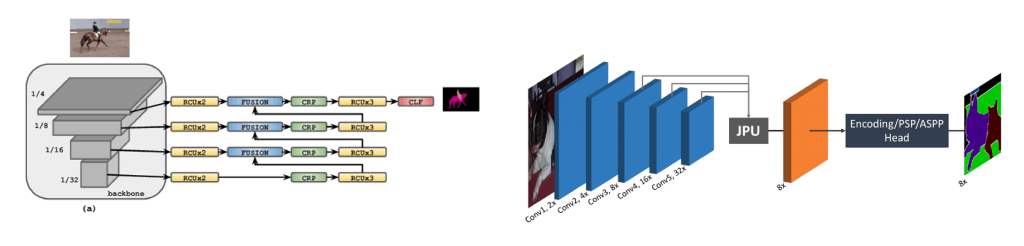

The DL solution is based on two CNNs, light-weight RefineNet and FastFCN with a joint pyramid upsampling (JPU) module, with output layers modified to predict only two classes: floor and not floor.

The CNN architectures used in the paper.

For CNN training, we experimented with several training sets: 1449 images from NYUDv2; 10329 images from SUN-RGB-D; and 8880 images from SUN-RGB-D with the NYUD images removed. The target test set consisted of 21 hand-labeled images acquired for evaluation purposes.

Fusion of the Approaches

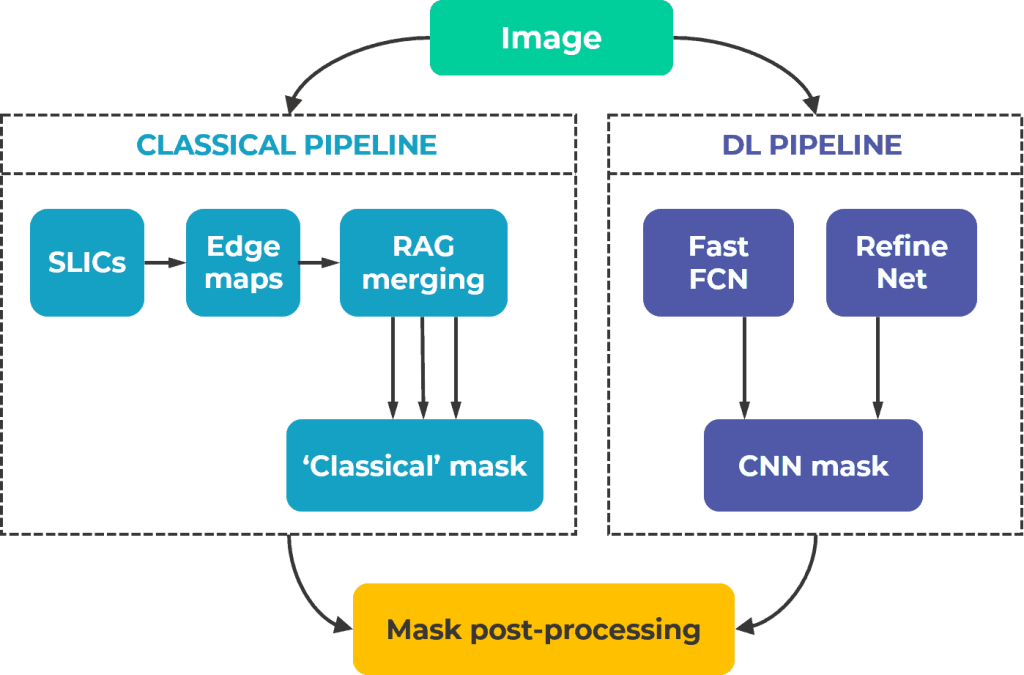

To further refine the segmentation maps, we build a fusion scheme:

Scheme of classical and DL pipeline fusion.

The binary output mask from the classical branch is combined with the sum of the segmentation masks predicted by the two CNNs, and the result is post-processed using texture analysis.
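One way to read this fusion step is as a per-pixel sum of the three binary masks, where full agreement gives a confident label and any disagreement marks the pixel as uncertain. The exact combination rule is not spelled out in the text, so the sketch below is an assumption; the function name `fuse_masks` and its argument names are hypothetical.

```python
import numpy as np

def fuse_masks(classical, refinenet, fastfcn):
    """Sum three binary masks and split pixels into confident floor and uncertain."""
    votes = (
        np.asarray(classical, dtype=int)
        + np.asarray(refinenet, dtype=int)
        + np.asarray(fastfcn, dtype=int)
    )
    floor = votes == 3                       # all three branches say "floor"
    uncertain = (votes > 0) & (votes < 3)    # branches disagree
    return floor, uncertain

classical = np.array([[1, 0, 1, 0]])
refinenet = np.array([[1, 0, 0, 1]])
fastfcn = np.array([[1, 0, 1, 1]])
floor, uncertain = fuse_masks(classical, refinenet, fastfcn)
```

Pixels flagged as uncertain are the ones a later stage would need to classify with additional evidence.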

Post-Processing: Texture Feature Analysis and Edge Refinement

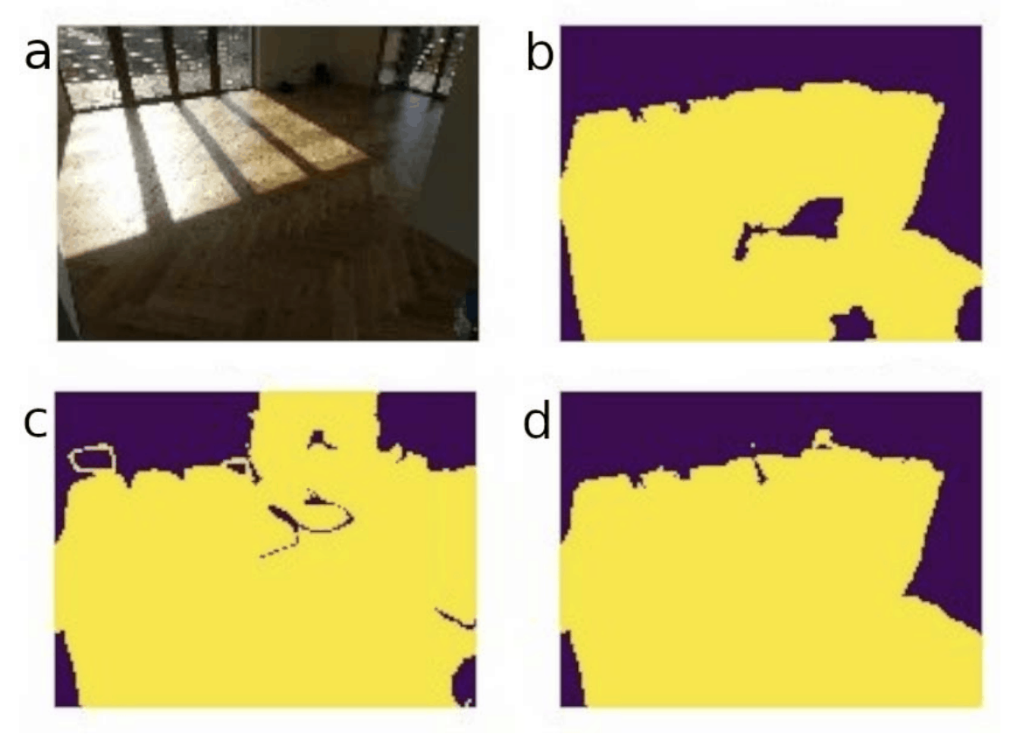

The main purpose of this stage is the final classification of uncertain areas, or blobs, which arise where the summed masks carry opposite labels. Texture feature analysis resolves these uncertainties and yields a more accurate prediction. The image below shows an example with the input image (a), the classical pipeline output (b), the deep learning pipeline output (c), and the resulting mask after post-processing (d).

Post-processing based on the texture feature analysis.

For texture feature extraction, we use a gray-level co-occurrence matrix (GLCM). It records how often pairs of gray levels co-occur at a given spatial offset within a selected region (blob).
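To make the definition concrete, here is a small NumPy sketch that builds a GLCM for one offset and derives the contrast feature from it. It works on a rectangular patch of quantized gray levels, whereas the project applies the analysis to blobs; which GLCM features were actually used is not stated, so contrast is only an example. The function names `glcm` and `contrast` are hypothetical.

```python
import numpy as np

def glcm(gray, levels, dy=0, dx=1):
    """Count how often gray level i has gray level j at offset (dy, dx)."""
    gray = np.asarray(gray)
    h, w = gray.shape
    # Crop two views of the patch so that src[y, x] and dst[y, x] are
    # the reference pixel and its neighbor at the requested offset.
    src = gray[max(0, -dy):h - max(0, dy), max(0, -dx):w - max(0, dx)]
    dst = gray[max(0, dy):h - max(0, -dy), max(0, dx):w - max(0, -dx)]
    matrix = np.zeros((levels, levels), dtype=np.int64)
    np.add.at(matrix, (src.ravel(), dst.ravel()), 1)  # accumulate pair counts
    return matrix

def contrast(matrix):
    """GLCM contrast: sum of p(i, j) * (i - j)^2 over all level pairs."""
    total = matrix.sum()
    if total == 0:
        return 0.0
    i, j = np.indices(matrix.shape)
    return float(((matrix / total) * (i - j) ** 2).sum())

patch = np.array([[0, 0, 1],
                  [1, 1, 0]])
m = glcm(patch, levels=2)   # horizontal neighbor pairs
print(m.tolist())           # [[1, 1], [1, 1]]
print(contrast(m))          # 0.5
```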

Comparison of Floor Segmentation Results

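To evaluate the segmentation results, we use Intersection over Union (IoU): the number of pixels labeled as floor in both the predicted and the ground-truth mask, divided by the number of pixels labeled as floor in at least one of them. The IoU values for each intermediate stage are shown in the table below.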

| Mask obtained with | IoU |
| --- | --- |
| Classical branch | 0.5442 |
| RefineNet | 0.7837 |
| FastFCN | 0.7893 |
| Deep learning branch | 0.7939 |
| Classical + deep learning branches | 0.7977 |
| Full pipeline | 0.8013 |
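For reference, IoU for a pair of binary masks can be computed as in the sketch below. The function name `iou` is hypothetical, and returning 1.0 when both masks are empty is a convention chosen here, not something the text specifies.

```python
import numpy as np

def iou(pred, target):
    """Intersection over Union of two binary masks of the same shape."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    union = np.logical_or(pred, target).sum()
    if union == 0:
        return 1.0  # both masks empty: treated as perfect agreement
    return float(np.logical_and(pred, target).sum() / union)

# One shared floor pixel out of three pixels marked by either mask.
score = iou([[1, 1, 0, 0]], [[1, 0, 1, 0]])
```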

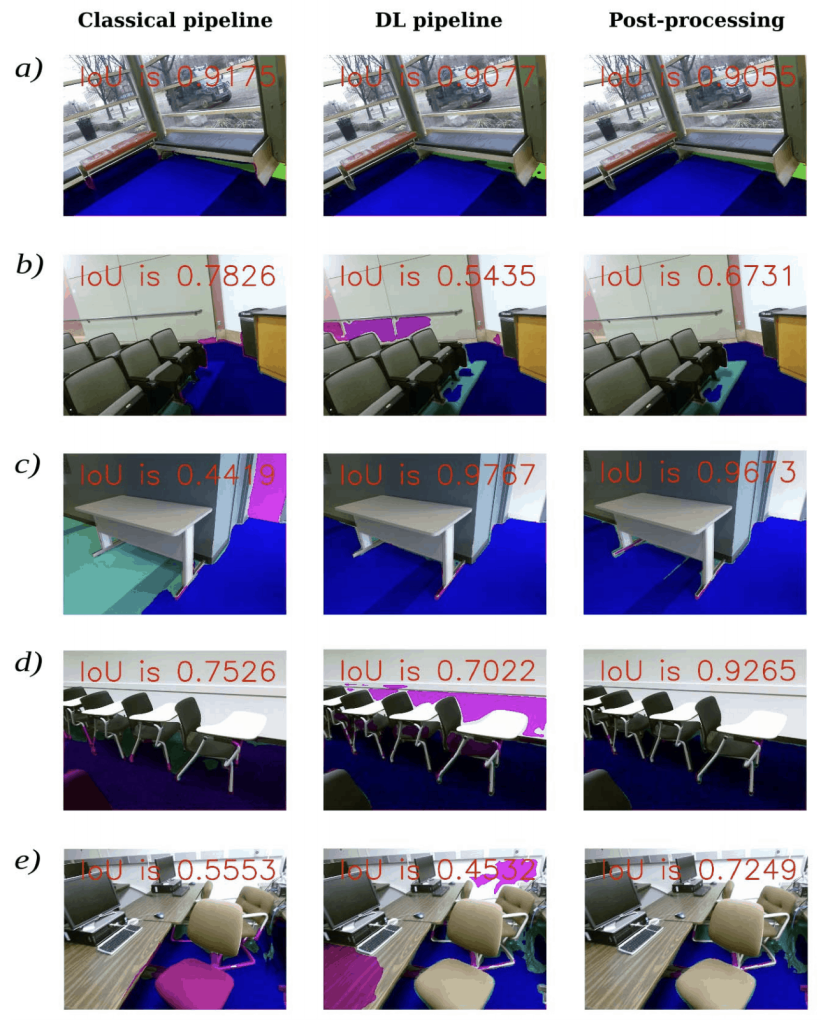

The following figure shows examples of segmentation masks obtained with the classical pipeline, with the deep learning pipeline, and with their combination followed by post-processing.

Color legend: dark blue is a true positive, magenta is a false positive, cyan is a false negative.

The deep learning solution handles challenging cases better than the classical computer vision pipeline. However, for some images, the classical pipeline gives competitive results or even outperforms the CNN-based solution. The best result is achieved by merging three masks (two from the neural networks and one summed mask from the classical pipeline) and applying post-processing based on texture feature analysis.

Summary

We have examined the problem of automatic floor segmentation. Despite tremendous progress in CNNs, classical CV still does a great job in the pre-processing and post-processing stages, and it covers some specific classes where a pre-trained DL model might fail.