GStreamer for Computer Vision and Audio Processing

You might have heard of something called “GStreamer”. I know what you think. This is some old and boring geek-and-nerd stuff from Linux, right? But what is it? What is the use of GStreamer? If we want computer vision or audio (speech, music) processing, can GStreamer help us?

In this article, I’ll try to answer these questions. This article is beginner-level and assumes no or little previous experience with GStreamer. But I assume that you are interested in computer vision and/or audio processing and know at least a little bit of C++ (for this GStreamer tutorial).

What Is the GStreamer Library?

So, what is GStreamer? The official documentation calls it an “open-source multimedia framework” and gives the following definition:

GStreamer is a library for constructing graphs of media-handling components. The applications it supports range from simple Ogg/Vorbis playback, audio/video streaming to complex audio (mixing) and video (non-linear editing) processing.

Applications can take advantage of advances in codec and filter technology transparently. Developers can add new codecs and filters by writing a simple plugin with a clean, generic interface.

Wikipedia gives the following definition:

GStreamer is a pipeline-based multimedia framework that links together a wide variety of media processing systems to complete complex workflows. For instance, GStreamer can be used to build a system that reads files in one format, processes them, and exports them in another. The formats and processes can be changed in a plug-and-play fashion.

GStreamer supports a wide variety of media-handling components, including simple audio playback, audio and video playback, recording, streaming and editing. The pipeline design serves as a base to create many types of multimedia applications such as video editors, transcoders, streaming media broadcasters and media players.

GStreamer is over 20 years old, and might not be the current “hot topic”. However, as we will see below, it’s very important for computer vision, especially at the “professional” and “deployment” levels, when you progress beyond toy demos and suddenly start to discover that the “real world is not that simple”.

GStreamer is hosted on freedesktop.org and is closely tied to the GNOME world (as in the “GNOME desktop”): it is built on the GLib and GObject libraries that come from GNOME. While I (as an experienced Linux user) personally strongly prefer the KDE desktop to the GNOME desktop, the GNOME libraries are very nice. Note that GStreamer is also used by the Qt GUI library and thus by the KDE desktop.

Some people would think that the word “stream” in GStreamer means network streaming. This is not so. Its primary function is to build local pipelines. However, GStreamer does have plugins for network streaming protocols like RTSP and it is frequently used for designing RTSP server or client applications.

Languages and Platforms

GStreamer is most commonly found on Linux. However, it’s a cross-platform C library available on all major platforms (Windows, macOS, Android, iOS, etc.). Note that “Linux” includes “web back end”, “embedded” and “single board” (Raspberry Pi and friends) among other things. The only platform I couldn’t find a pre-built GStreamer for is the web browser (WASM). Not that it’s impossible in theory, but probably nobody wanted such a heavyweight monster on the very restrictive WASM platform. GStreamer is a huge framework with tons of dependencies, and you should never try to build it from source unless you have no choice.

GStreamer’s native language is C (not C++). It can be called directly from C++ or Objective-C. For other languages (Python, Java, etc.), you can either add some C++ to your code via language interfaces (such as pybind11 or JNI) or use a GStreamer wrapper for your language. Such wrappers generally exist, but they might be out of date and not support the latest GStreamer versions.

A C++ wrapper, gstreamermm, with nice C++ classes, used to exist, but unfortunately, it is not supported anymore.

Python GStreamer wrappers are popular, but again, compared to C/C++, they tend to use outdated versions (sometimes even 0.10 instead of 1.0).

To get the most out of GStreamer, and to understand it fully, you should use it in C or C++ (and not Python or other languages). This is what we will do in this C++ tutorial.

What Is the Use of GStreamer?

GStreamer has many uses, but we are interested in computer vision and audio processing, right? How can GStreamer help us?

Imagine the following situation. You are writing an application that processes video or audio. You need a library that can encode and decode audio and video in various codecs and formats, so that you can process raw video or audio in your code. Or maybe you want something even more fun, like integrating your algorithms with RTSP streaming or a web back end, or building sophisticated real-time pipelines. Can we do that?

Wait, weren’t there C libraries for different codecs, like libx264 or libfdk-aac? Yes, but there are dozens of codecs and containers, each with its own library and its own unique API, often clunky and unlike all other APIs. Unfortunately, end users tend not to care about this fact. They expect your application to work with any audio or video format that exists and will be really surprised and frustrated if it doesn’t. Do you really want to code the low-level logic of decoding various file formats with about 20 different libraries like libx264? Probably not. What we want is “one library to rule them all”: a C/C++ library that works with a large number of audio and video formats and codecs. This is harder than people often think.

Before giving you the answer, let’s mention a few options that do NOT work:

OpenCV is not a good choice, see the section on GStreamer and OpenCV below.

Beginners often tend to avoid this issue by preprocessing the input data. For example, you can open your input video file in a video editor (or, for nerds, with ffmpeg in terminal), extract the audio track as an uncompressed WAV, and then read your video with OpenCV, and your audio with libsndfile. Is it possible? Yes, and it can be sometimes justified for early R&D work. Is it a good idea? Definitely not if you want a finished product or a nice demo.

Often people try to use FFmpeg (or GStreamer) from the terminal, shell scripts, the Python os.system() function, or pipes like ffmpeg <some options> | python3 mycode.py, but this is really not much different from the previous option.

Now, the options that DO work.

GStreamer vs FFmpeg

Some operating systems (like Android and Windows) have their own OS-specific codec APIs, often somewhat limited in the formats supported, but in the Linux and cross-platform worlds there are basically only two good choices: FFmpeg and GStreamer. And “cross platform” means you can port your software everywhere (nice!), while, once again, “Linux” means “back end + embedded” (plus I just love Linux and use it for work). Nowadays, with things like AWS and Azure and Docker, Linux has finally moved from geek-land into the mainstream.

So, FFmpeg and GStreamer, but which of the two is better? Both libraries will do the job. Both libraries are “umbrellas” over multiple low-level libraries like libx264. Both libraries support (at least in theory) various hardware video accelerators and hardware-oriented specs like Video4Linux 2 (used for e.g. camera feed). And GStreamer is not independent of FFmpeg: in fact, it uses FFmpeg for some codecs (the “av” prefix in GStreamer element names like avdec_h264 means FFmpeg).

The two libraries have, however, rather different philosophies. FFmpeg has only low-level encoding-decoding operations, while GStreamer allows you to design and play sophisticated media pipelines. Both are very nice, definitely try FFmpeg (C API) if you haven’t already, but this article is about GStreamer. How do I choose between the two? If you only want encoding-decoding and you are prepared to micromanage the whole pipeline (no easy task!), choose FFmpeg. If you want a pipeline-building library, definitely choose GStreamer. Also, GStreamer has many nice extras, from RTSP streaming to video special effects.

To summarize, here are the main reasons to use GStreamer in your computer vision or audio processing code:

- Encoding and decoding a great number of audio and video formats (practically all that exist)

- Building sophisticated media pipelines

- Using GStreamer extras (network streaming, filters, media playback, etc.)

- Using GStreamer-based third-party frameworks like Nvidia DeepStream or GstInference

Interlude: on Codecs and Containers

Audio and video tracks found in media files and streams are typically highly compressed using codecs, such as H265, VP9, or AC3. Encoded data is created from the raw data using encoders, and converted back to raw with decoders.

However, what if we want to put several media tracks into a single file? For example, one video track, several audio tracks (in different languages), and subtitles. Then you will need containers (or formats) such as AVI, QuickTime or MKV. Containers are created by muxers (which join media tracks), while the reverse operation of unpacking a container into separate tracks is performed by demuxers. Most modern media file formats (except for a few simplest ones: WAV, MP3) are containers.

Please do not mix up codecs with containers, they are two different things! For example, OGG is a container, while Vorbis is the codec most often used in OGG. An AVI container can contain a video in H264 or H265, and an audio in AC3 or AAC, or many other codecs.

How Do I Learn GStreamer?

Start with the official documentation. Seriously. It has a tutorial and a manual. There is nothing better. However, it only briefly touches on the topic which is of utmost importance to us: appsrc and appsink elements, or “Short-cutting the pipeline”. You can find numerous examples with appsrc and appsink on GitHub, but I didn’t find any good introductory tutorial on this topic, vital for audio and vision. Thus I wrote my own GStreamer tutorial in C++, and I will briefly cover it in the last section of this article. It also includes various appsrc and appsink examples, including “GStreamer+OpenCV” examples, showing how to use GStreamer and OpenCV in the same code.

GStreamer Pipeline Tutorial

How Does GStreamer Work?

It is covered pretty well in the official tutorial, so I’ll give only a very brief introduction. The basic GStreamer object is a pipeline (Fig. 1).

Fig. 1. GStreamer pipeline, from the official tutorial

It is built from elements (large boxes in Fig. 1), the GStreamer LEGO blocks, which have input-output ports called pads (small blue boxes). The pads can be linked together. The pipeline has a state (PLAYING, PAUSED, READY, NULL, plus the special VOID_PENDING). When the pipeline is playing, the data flows automatically, in multiple threads created by the GStreamer library.
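To make the state logic more concrete, here is a minimal C++ sketch (standard library only, no GStreamer calls). It assumes the usual ordering NULL, READY, PAUSED, PLAYING and that a state change walks through the neighbouring states one step at a time. The names State and state_path are hypothetical and exist only for this illustration.

```cpp
#include <cassert>
#include <vector>

// Illustrative mirror of the four "real" pipeline states, in their natural order.
// (In GStreamer's C API they are GST_STATE_NULL, GST_STATE_READY,
// GST_STATE_PAUSED and GST_STATE_PLAYING.)
enum class State { Null = 0, Ready = 1, Paused = 2, Playing = 3 };

// Hypothetical helper: list the states visited when moving from `from` to `to`,
// one neighbouring step at a time. The starting state itself is not included.
std::vector<State> state_path(State from, State to) {
    std::vector<State> path;
    int current = static_cast<int>(from);
    const int target = static_cast<int>(to);
    while (current != target) {
        current += (target > current) ? 1 : -1;  // step up or down by one state
        path.push_back(static_cast<State>(current));
    }
    assert(path.size() <= 3);  // at most three steps separate any two states
    return path;
}
```

For example, state_path(State::Null, State::Playing) visits READY, PAUSED and PLAYING, which is why starting a pipeline from scratch involves three transitions. In real code you would simply call gst_element_set_state() and let GStreamer handle the intermediate steps.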

When you try to link two pads, they negotiate, i.e. try to agree on a common data format, fixing all the little details like frame size, fps, etc. If they fail, the pipeline gives an error. Negotiation in GStreamer is based on capabilities or caps, for example (Note: they are NOT MIME types !):

video/x-raw,format=BGR,width=720,height=576

or

audio/x-raw,format=S16LE,layout=interleaved

for RAW (unencoded) video or audio data respectively. If negotiation fails, you can often fix it by inserting intermediate elements such as videoconvert, audioconvert and audioresample.
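To show what a caps string is made of, here is a deliberately simplified C++ sketch (standard library only). It treats caps as a media type followed by key=value fields and checks two caps for obvious conflicts. This is only a toy model: real caps also support typed values, ranges, lists and more, and in real code you would rely on GStreamer functions such as gst_caps_from_string() and gst_caps_can_intersect(). The names SimpleCaps, parse_simple_caps and simple_caps_compatible are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <sstream>
#include <string>

// Toy model of caps: a media type plus string-valued fields.
struct SimpleCaps {
    std::string media_type;                     // e.g. "video/x-raw"
    std::map<std::string, std::string> fields;  // e.g. format -> BGR
};

// Hypothetical, simplified parser for strings like
// "video/x-raw,format=BGR,width=720,height=576".
SimpleCaps parse_simple_caps(const std::string& text) {
    SimpleCaps caps;
    std::istringstream stream(text);
    std::string token;
    bool first = true;
    while (std::getline(stream, token, ',')) {
        if (first) {  // the first token is the media type
            caps.media_type = token;
            first = false;
            continue;
        }
        const std::size_t eq = token.find('=');
        assert(eq != std::string::npos && "every field must look like key=value");
        caps.fields[token.substr(0, eq)] = token.substr(eq + 1);
    }
    return caps;
}

// Toy "negotiation": caps are compatible if the media types match and
// no field is fixed to different values on both sides.
bool simple_caps_compatible(const SimpleCaps& a, const SimpleCaps& b) {
    if (a.media_type != b.media_type) return false;
    for (const auto& kv : a.fields) {
        const auto it = b.fields.find(kv.first);
        if (it != b.fields.end() && it->second != kv.second) return false;
    }
    return true;
}
```

With this model, "video/x-raw,format=BGR,width=720,height=576" is compatible with "video/x-raw,format=BGR" (the second one simply leaves the frame size open), but not with "video/x-raw,format=RGB". The latter is the kind of mismatch that a videoconvert element in between can resolve.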

GStreamer in Terminal

While for “serious” GStreamer usage you need C or C++, nothing stops you from trying it out using GStreamer console tools. It will help you understand GStreamer basics and learn pipeline syntax and common elements. If you are reading this, we strongly encourage you to install GStreamer on your computer, download a few small audio and video file samples, and try out examples from this chapter. It’s good fun! Once again, the official documentation covers the “console GStreamer” rather well, so I will briefly show a few examples of my own that I find illustrative. The main tools are:

- gst-launch-1.0 : Create and launch a GStreamer pipeline, our main tool

- gst-play-1.0 : Play a media file (a minimal video player)

- gst-inspect-1.0 : Inspect available GStreamer plugins

- gst-discoverer-1.0 : Examine a media file, print information on codecs, etc.

Without further ado, let’s buy popcorn and start playing with GStreamer. gst-launch-1.0 receives a single argument: a text string describing the GStreamer pipeline. The syntax is simple: a number of GStreamer elements with optional options (pun intended). Neighboring elements are separated by either an exclamation mark ‘!’ when they are linked, or a space ‘ ’ when they are not.

The simplest pipeline uses playbin, a high-level media playback element:

gst-launch-1.0 playbin uri=file:///home/seymour/Videos/suteki.mp4

It needs a URI (network URL or a full path to a file).

Elements audiotestsrc and videotestsrc create simple test audio and video streams. Elements autoaudiosink and autovideosink play the audio (speakers) and video (screen window) respectively on your computer. On some platforms they can be restrictive in the caps they accept, so it’s always a good idea to put conversion elements in the middle:

gst-launch-1.0 audiotestsrc ! audioconvert ! audioresample ! autoaudiosink

gst-launch-1.0 videotestsrc ! videoconvert ! autovideosink

gst-launch-1.0 videotestsrc pattern=18 ! videoconvert ! autovideosink

Conversion elements can do simple format conversions like YUV to RGB video, or int16 to float32 audio, for raw audio or video only (NOT codecs). audioresample can resample the audio to a new sampling rate (e.g. from 16000 to 44100 Hz).

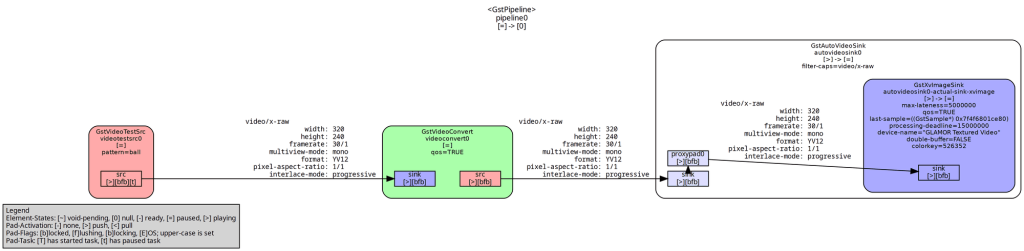

The GStreamer pipeline can be visualized using GraphViz software. Type in the console:

GST_DEBUG_DUMP_DOT_DIR=. gst-launch-1.0 videotestsrc pattern=18 ! videoconvert ! autovideosink

It will create a number of .dot files in the current directory (‘.’). Choose the one named “….PAUSED_PLAYING.dot”. The result is shown in Fig. 2 (such figures tend to be cluttered with details).

Fig. 2. A GStreamer pipeline visualized by GraphViz.

Branched Pipelines

You can create branched pipelines in GStreamer. The first type of branching happens when you duplicate a data stream with the tee element:

gst-launch-1.0 videotestsrc ! videoconvert ! tee name=t ! queue ! autovideosink t. ! queue ! autovideosink

It creates two windows with identical videos. Note how we name the tee element as t (any name could be used instead of t, e.g. cyberdemon), and then put space and not ! after autovideosink (no linking), then start another branch with t. (go back to the element named t, and try to link its other still unlinked pad). This pipeline is shown in Fig. 3.

Fig. 3. A branched pipeline visualized by GraphViz.
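The branching syntax can also be generated programmatically. The sketch below (plain C++, standard library only) assembles a gst-launch-style description for a tee with several branches. The helper names link_chain and tee_pipeline are hypothetical. In a real application, a string like this could be passed to gst_parse_launch().

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Join elements with " ! ", i.e. link every element to the next one.
std::string link_chain(const std::vector<std::string>& elements) {
    std::string out;
    for (std::size_t i = 0; i < elements.size(); ++i) {
        if (i > 0) out += " ! ";
        out += elements[i];
    }
    return out;
}

// Hypothetical helper that builds
// "<source chain> ! tee name=<name> ! <branch 1> <name>. ! <branch 2> ...".
std::string tee_pipeline(const std::vector<std::string>& source,
                         const std::string& tee_name,
                         const std::vector<std::vector<std::string>>& branches) {
    assert(!source.empty() && !branches.empty());
    std::string out = link_chain(source) + " ! tee name=" + tee_name;
    for (std::size_t i = 0; i < branches.size(); ++i) {
        // The first branch continues right after the tee. Every later branch
        // starts with a space (no link) and "<name>." to go back to the tee.
        if (i > 0) out += " " + tee_name + ".";
        out += " ! " + link_chain(branches[i]);
    }
    return out;
}
```

Calling tee_pipeline with the source chain videotestsrc, videoconvert, the tee name t, and two branches of queue, autovideosink reproduces the two-window pipeline string used in the tee example above.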

Another type of branching happens if an element has two or more source (output) pads with different media tracks. For example, let’s take high level decoding elements decodebin and uridecodebin. They behave similarly, except that decodebin receives data from a sink (input) pad, while uridecodebin receives data from a URI. So the two lines are very similar

uridecodebin uri=<file name>

and

filesrc location=<file name> ! decodebin

except that the first one requires a URI with a full path. Let’s try to play a media file with uridecodebin:

gst-launch-1.0 uridecodebin uri=file:///home/seymour/Videos/suteki.mp4 name=u ! audioconvert ! audioresample ! autoaudiosink u. ! videoconvert ! autovideosink

Once again, you have two branches after uridecodebin:

uridecodebin ! audioconvert ! audioresample ! autoaudiosink
             ! videoconvert ! autovideosink

This pipeline behaves similarly to playbin. uridecodebin is a high-level element, which automatically creates a sub-pipeline with appropriate demuxer and decoders.

Can we go to a really low level? Yes, but there is usually no need to. We can inspect our file with gst-discoverer-1.0 or ffplay. If we know that suteki.mp4 is a QuickTime file with AAC audio and H264 video, we can then play it with:

gst-launch-1.0 filesrc location=suteki.mp4 ! qtdemux name=d ! avdec_h264 ! queue ! videoconvert ! autovideosink d. ! avdec_aac ! queue ! audioconvert ! audioresample ! autoaudiosink

Here the two branches are:

filesrc ! qtdemux ! avdec_h264 ! queue ! videoconvert ! autovideosink
                  ! avdec_aac ! queue ! audioconvert ! audioresample ! autoaudiosink

We see demuxer and decoders, and a new element queue. Note that the queue in GStreamer is called queue, while the word “buffer” means something completely different (I’ll get back to it eventually). It’s always a good idea to use queue in branched pipelines to avoid a possible deadlock, when synchronizing tracks on playback or especially muxing, as GStreamer does not check for deadlocks.
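Conceptually, a queue is a bounded buffer that also acts as a thread boundary: the upstream side pushes data in, and a separate thread drains it downstream. The following C++ sketch (standard library only) shows that idea with a classic blocking queue. It is only an analogy under my own simplifying assumptions, not how the queue element is actually implemented, and the class name BoundedQueue is hypothetical.

```cpp
#include <cassert>
#include <condition_variable>
#include <cstddef>
#include <deque>
#include <mutex>
#include <utility>

// A classic bounded producer/consumer queue.
template <typename T>
class BoundedQueue {
public:
    explicit BoundedQueue(std::size_t capacity) : capacity_(capacity) {
        assert(capacity > 0);
    }

    // Producer side: blocks while the queue is full.
    void push(T item) {
        std::unique_lock<std::mutex> lock(mutex_);
        not_full_.wait(lock, [this] { return items_.size() < capacity_; });
        items_.push_back(std::move(item));
        not_empty_.notify_one();
    }

    // Non-blocking variant: returns false instead of waiting when full.
    bool try_push(T item) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (items_.size() >= capacity_) return false;
        items_.push_back(std::move(item));
        not_empty_.notify_one();
        return true;
    }

    // Consumer side: blocks while the queue is empty.
    T pop() {
        std::unique_lock<std::mutex> lock(mutex_);
        not_empty_.wait(lock, [this] { return !items_.empty(); });
        T item = std::move(items_.front());
        items_.pop_front();
        not_full_.notify_one();
        return item;
    }

private:
    const std::size_t capacity_;
    std::deque<T> items_;
    std::mutex mutex_;
    std::condition_variable not_full_;
    std::condition_variable not_empty_;
};
```

The analogy also hints at where deadlocks come from: if one branch is blocked in push() because its consumer is waiting for data from another branch that never gets a chance to run, nothing moves. Giving each branch its own queue lets the branches progress independently.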

Now let’s try encoding. Now you have no choice but to go to the low level: encoders+muxer, sometimes also parser.

Video:

gst-launch-1.0 videotestsrc ! videoconvert ! x264enc ! avimux ! filesink location=out.avi

gst-launch-1.0 videotestsrc ! videoconvert ! x265enc ! h265parse ! matroskamux ! filesink location=out.mkv

gst-launch-1.0 videotestsrc ! videoconvert ! vp9enc ! webmmux ! filesink location=out.webm

Audio:

gst-launch-1.0 audiotestsrc ! audioconvert ! wavenc ! filesink location=out.wav

gst-launch-1.0 audiotestsrc ! audioconvert ! lamemp3enc ! filesink location=out.mp3

gst-launch-1.0 audiotestsrc ! audioconvert ! vorbisenc ! oggmux ! filesink location=out.ogg

gst-launch-1.0 audiotestsrc ! audioconvert ! avenc_wmav2 ! asfmux ! filesink location=out.wma

And now the hardest case. Let’s decode and re-encode:

gst-launch-1.0 filesrc location=zoryana.webm ! decodebin name=d ! queue ! audioconvert ! avenc_aac ! avimux name=m ! filesink location=out.avi d. ! queue ! videoconvert ! x264enc ! m.

What was that? A pipeline with splitting and merging branches!

filesrc ! decodebin ! queue ! audioconvert ! avenc_aac ! avimux ! filesink
                    ! queue ! videoconvert ! x264enc   !

Pipeline Tricks, GStreamer Real-Time vs. Offline Pipeline

There are a couple of extra tricks when designing pipelines. If there are multiple pads, the first suitable one is linked. This is not always desired. We can specify the pad name explicitly for linking, but only if we know the pad’s name, e.g. video_0 in demuxers:

gst-launch-1.0 filesrc location=zoryana.webm ! matroskademux name=d d.video_0 ! vp9dec ! videoconvert ! autovideosink

The second trick is the caps filter. If we write caps instead of an element between the two ! signs, then we force the negotiation process to only accept caps compatible with the specified caps. We can often use it to control elements that we cannot control directly, for example:

gst-launch-1.0 videotestsrc ! video/x-raw,format=BGR,width=1024,height=768 ! videoconvert ! autovideosink

Here the caps filter affects the negotiation between videotestsrc and videoconvert. videotestsrc cannot be programmed directly, but it is rather flexible at negotiations, and here we force it to produce 1024×768 BGR video. Similarly, it can be used to explicitly control the conversion elements, if we want to convert the media to a different sampling rate, frame size, etc. Later we will work with appsrc and appsink. They can be configured with either direct caps (preferred) or a caps filter.

The final trick is the sync option, present in most sinks, including autovideosink and appsink. Try the following pipeline:

gst-launch-1.0 filesrc location=suteki.mp4 ! decodebin ! videoconvert ! autovideosink sync=true

This is the default. sync=true means that autovideosink plays the video stream at 1x speed, provided that it has correct timestamps. In this case autovideosink actually sets the pace of the entire pipeline, as decodebin could decode the file much faster on modern computers. This is the GStreamer way of creating a real-time pipeline.

Now try changing it to sync=false and see what happens!

If, on the other hand, we used a filesink, like in the re-encoding example above, it has sync=false by default. The pipeline plays as fast as it can (depending on processing speed), usually much faster than 1x. This is the offline file processing, GStreamer way.

The sync option is important for appsink. Depending on our computer vision application, either choice can make perfect sense.
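The effect of sync can be summarized with a tiny C++ sketch (standard library only). It models, in a simplified way, how long a sink would wait before showing a buffer: with sync=true it waits until the pipeline clock reaches the buffer's timestamp, and with sync=false it never waits. Latency, segments and dropped late frames are ignored here, and the names Nanos and wait_before_render are hypothetical.

```cpp
#include <cassert>
#include <chrono>

using Nanos = std::chrono::nanoseconds;

// Simplified model of sink pacing.
// buffer_timestamp: when the buffer is supposed to be shown (since playback start).
// running_time:     how long the pipeline has been playing so far.
Nanos wait_before_render(bool sync, Nanos buffer_timestamp, Nanos running_time) {
    assert(buffer_timestamp.count() >= 0 && running_time.count() >= 0);
    if (!sync) return Nanos::zero();                             // offline: as fast as possible
    if (buffer_timestamp <= running_time) return Nanos::zero();  // on time or late: show at once
    return buffer_timestamp - running_time;                      // early: wait for the clock
}
```

For instance, a frame stamped at 2 s that arrives when the pipeline has been running for 1.5 s is held back for 0.5 s with sync=true, and shown immediately with sync=false. This waiting in the sink is what slows the whole upstream pipeline down to real time.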

In this section, I will cover a few topics which are neither “GStreamer in terminal” (previous section) nor “GStreamer in C/C++” (next section).

Does OpenCV Use GStreamer? A Tricky Relationship between the Two Libraries

Remember, I promised to explain why OpenCV, a popular computer vision library, is not good for reading and writing video files with VideoCapture and VideoWriter respectively. First, and this is the main reason, OpenCV cannot work with audio tracks at all. Second, it is rather inflexible, for example, try to encode into memory and not a disk file, you cannot! Third, depending on how OpenCV is built, it might have very limited codec support or none at all. There are no guarantees. For example, on modern Ubuntu, apt-installed OpenCV (for C++) is pretty good, while pip-installed Python OpenCV has very limited encoding capabilities.

How does OpenCV work with videos? It uses various backends, which at least for Linux usually means (surprise, surprise) either FFmpeg or GStreamer. And a couple of years ago Ubuntu switched from FFmpeg to GStreamer (1:0 for the latter!). If you are using OpenCV video I/O, you are actually using FFmpeg or GStreamer, so why not cut out the middleman?

There is another topic worth mentioning here, GStreamer in OpenCV. If (and only if) OpenCV was built with GStreamer, you can use GStreamer pipeline strings instead of file names in OpenCV VideoCapture and VideoWriter, terminated with appsink or appsrc respectively. It is discussed a lot in places like Stack Overflow, however, I don’t find the idea especially good. While it can slightly expand OpenCV powers with things like RTSP, you still cannot have audio or pipelines with multiple sources/sinks or anything complicated.

A much better way to combine GStreamer with OpenCV (in my opinion) is presented in the next section. With appsink and appsrc, you can move the raw pixels back and forth between your C++ code and GStreamer pipeline. Once the frame is in your C++ code, you can do anything you want with it. For example, wrap it with OpenCV’s cv::Mat, process it with OpenCV, and send the result back to GStreamer. Or, run a neural network inference or any computer vision code you want.
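One practical detail when wrapping raw pixels: the rows of a frame are not necessarily tightly packed. The C++ sketch below (standard library only) computes the row stride and buffer size for a BGR frame under the assumption that each row is padded to a multiple of 4 bytes, which to my understanding is GStreamer's default layout for packed 24-bit formats. Treat this as an assumption: in real code, read the stride from GstVideoInfo (for example with gst_video_info_from_caps() and GST_VIDEO_INFO_PLANE_STRIDE) instead of computing it by hand. All function names here are hypothetical.

```cpp
#include <cassert>
#include <cstddef>

// Round n up to the next multiple of 4.
constexpr std::size_t round_up_4(std::size_t n) {
    return (n + 3) & ~static_cast<std::size_t>(3);
}

// Bytes per row of a BGR frame (3 bytes per pixel), ASSUMING 4-byte row padding.
std::size_t bgr_row_stride(std::size_t width) {
    assert(width > 0);
    return round_up_4(width * 3);
}

// Total number of bytes in one frame, including the row padding.
std::size_t bgr_frame_size(std::size_t width, std::size_t height) {
    return bgr_row_stride(width) * height;
}

// Byte offset of the blue channel of pixel (x, y) inside the frame buffer.
std::size_t bgr_pixel_offset(std::size_t width, std::size_t x, std::size_t y) {
    assert(x < width);
    return y * bgr_row_stride(width) + x * 3;
}
```

For a 720×576 frame the stride is exactly 720 × 3 = 2160 bytes, so there is no padding. For a width of 1023, however, each row takes 3072 bytes rather than 3069. When wrapping such a buffer with OpenCV, the stride can be passed as the step argument, as in cv::Mat(height, width, CV_8UC3, data, stride).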

GStreamer and Deep Learning

Nowadays, “computer vision” and “audio processing” very often means “deep learning”. Owing to Deep Learning (DL) popularity, a number of plugins and frameworks have been proposed to run a neural network inference within the GStreamer pipeline.

- Nvidia Deepstream

https://developer.nvidia.com/deepstream-getting-started

This is an Nvidia GPU-only video deep learning framework based on GStreamer. Apart from neural network inference with TensorRT, it also supports Nvidia accelerated encoding and decoding, with an option to run the entire pipeline on the GPU. It is Linux-only and requires strict CUDA and cuDNN versions, so it is better to run it in Docker if you want to try it. It also runs on Nvidia Jetson devices.

- GstInference

https://github.com/RidgeRun/gst-inference

A GStreamer framework based on R2Inference, which supports inferences with a number of DL frameworks, such as TFLite.

- NNStreamer

https://nnstreamer.ai/

Another GStreamer DL framework, this one supposedly runs PyTorch models also.

- Intel DL Streamer aka Gst-Video-Analytics

https://github.com/dlstreamer/dlstreamer

Another framework, this time from Intel. It is friendly to Intel stuff like VPU and OpenVINO, and also OpenCV.

Note that you don’t have to use any of these frameworks to do neural network inference, you can always move data to your code with appsink and appsrc, and run the inference yourself in your own C++ code (or even in Python code for this matter), enjoying the total programmatic control over how you do the inference and visualization.

GStreamer vs Google MediaPipe

Here I compare GStreamer to another pipeline library, Google MediaPipe. I happened to play with both libraries in C++, and previously wrote a MediaPipe article (part1, part2, part3) in this blog. Let us now compare the two libraries (this is partly my subjective experience). At first glance, the two libraries are similar, as they are both multi-thread pipeline libraries. However, if you dig deeper, you will see numerous differences.

- Background: GStreamer is old and time-tested, with roots in the GNOME ecosystem. MediaPipe is relatively new, developed by Google.

- Main Goal: Rather different. MediaPipe is mostly about Deep Learning, while GStreamer is mainly about playback, streaming and re-encoding media.

- Deep Learning: MediaPipe can do Deep Learning with TensorFlow (Lite). It also has a number of pre-trained TensorFlow Lite-based “solutions”, and many people actually believe (completely mistakenly) that the “solutions” IS MediaPipe. GStreamer can only do DL with third-party frameworks.

- Traditional audio and video processing (resampling, reencoding, resizing, filtering): GStreamer does these things much better and has a vast array of standard elements.

- Languages: C with GObject for GStreamer, C++ for MediaPipe. Bindings for a few other languages are available, but you’ll need C++ to get the most out of both frameworks.

- Platforms: All common platforms for both, except WASM for GStreamer. However, you’ll have to build MediaPipe from source in order to use it in C++.

- Data: MediaPipe: handles arbitrary data, but two or three special classes are available for images and audio. GStreamer: Highly specialized for audio+video via the caps system.

- Data formats and negotiation: GStreamer: a sophisticated caps system and a wide variety of formats. MediaPipe: very few formats and negotiation is virtually non-existent.

- Codecs and containers: GStreamer: Pretty much all codecs and containers that exist are supported via plugins. MediaPipe: Limited support based on OpenCV + FFmpeg.

- Video with audio tracks: GStreamer: It’s easy to read a video file and split it into audio + video data within the same pipeline, same with writing files. MediaPipe: I am not sure if it’s possible at all with standard calculators, probably not. In other words, it does not qualify as “one library to rule them all”.

- Network streaming: GStreamer: has plugins for network streaming. MediaPipe: Does not (if I remember correctly).

- Pipeline definition: GStreamer: text string or C++ code. MediaPipe: ProtoBuf text string.

- Internal structure: MediaPipe is generally simpler and easier to understand and to micromanage, and it is easier to write custom “calculators” (similar to GStreamer elements). For GStreamer, it is much harder to go “under the hood” and write custom elements. However, you can use appsrc and appsink, as explained in this article.

- Timestamps and synchronization and real-time vs offline: In my opinion, MediaPipe does these things in a clearer and simpler way (offline by default), while in GStreamer default behavior depends on the sink used.

- Queues: MediaPipe by default uses unlimited queues at each pipeline link. In GStreamer, you always have to add queue elements manually, with a few exceptions like appsrc and appsink. GStreamer is prone to deadlocks if you are not careful.

- Documentation and tutorials: Good for GStreamer, bad for MediaPipe. MediaPipe documentation touts the “solutions” and largely ignores the C++ API.

- Bazel factor: MediaPipe requires Bazel to build itself AND your project and it is pretty much incompatible with the “normal” C++ world of CMake and make and apt-installed libraries. This is very inconvenient, and seriously limits the possible uses of MediaPipe. In contrast, GStreamer is easy to install (with apt in Ubuntu) and perfectly friendly to CMake and make and other build systems.

All things considered, GStreamer is much easier to use in C++ projects due to the horrific “Bazel factor”. Otherwise, their goals and typical use cases are rather different.

Let’s Sum Up

So far, we have covered what GStreamer is, how it works, its use cases, and how to run it in the terminal. We have also explained the relationship between GStreamer and OpenCV, what options there are to run a neural network inference within the GStreamer pipeline and compared it with the Google MediaPipe library. Now, let’s do some coding – follow us to the GStreamer C++ tutorial!