AI Music Technology for Piano Transcription

AI Music Tech Is Booming

AI music technology is becoming a critical layer in modern digital products. Music education platforms, creative software, rights management systems, and large-scale media services increasingly rely on accurate and fast transcription to unlock value from audio data.

In our work with AI music processing systems, we see that traditional transformer-based approaches, while accurate, are often expensive to operate and difficult to scale. This article outlines how Mamba, a selective state-space model architecture, enables high-performance, cost-efficient AI music technology suitable for real-time and production environments. The hands-on research and experimentation behind this work were carried out by Roman Bernikov, who focused on model architecture choices, evaluation methodology, and performance optimization.

AI Music Technology Use Cases and Business Impact

Efficient transcription underpins many real-world AI music technology products, including:

- Real-time music education and performance feedback

- Large-scale music catalog indexing and analytics

- Creative AI tools and digital audio workstations

- Edge and on-device AI music technology

- Enterprise-scale batch music processing

These use cases are representative of the AI music processing challenges encountered in production audio AI services.

The Scalability Challenge in AI Music Technology

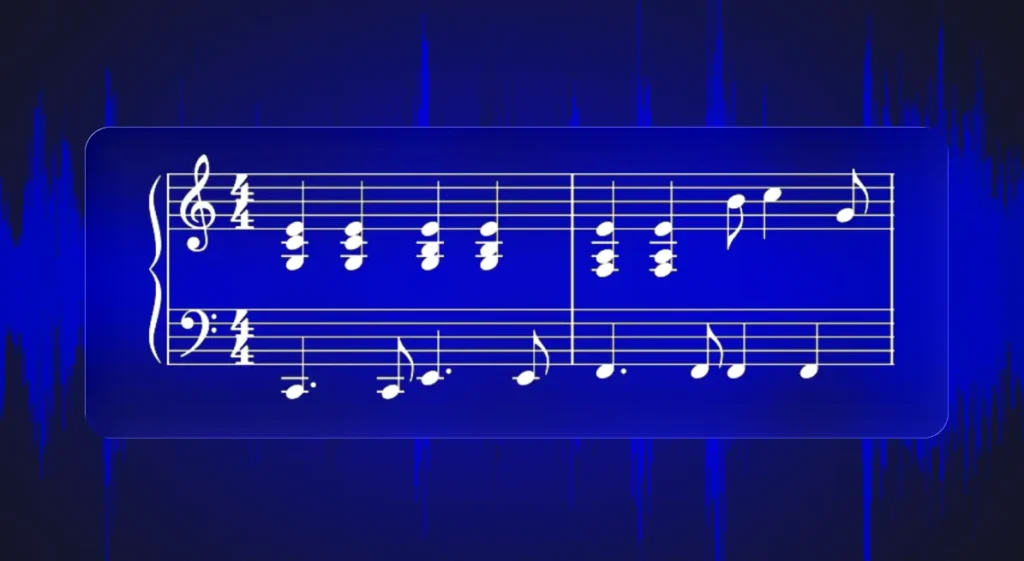

Automatic Music Transcription (AMT) converts raw audio into symbolic representations such as MIDI or piano rolls. Transformer-based models dominate benchmarks, but the cost of standard self-attention grows quadratically with sequence length, so they scale poorly to long inputs and drive up latency and infrastructure cost.

In production audio AI services, this directly impacts feasibility for long recordings, real-time interaction, and cost-efficient deployment.
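To make the scaling argument concrete, here is a small back-of-the-envelope sketch. It only counts operations and is not a benchmark: the frame rate of 100 frames per second is an assumption for illustration (the article does not state the hop size), and the helper names `attention_pairs` and `recurrent_steps` are hypothetical.

```python
def attention_pairs(num_frames: int) -> int:
    """Query-key pairs scored by full self-attention (one layer, one head)."""
    return num_frames * num_frames


def recurrent_steps(num_frames: int) -> int:
    """State updates performed by a linear-time recurrent scan."""
    return num_frames


# Assumption: 100 spectrogram frames per second (10 ms hop).
FRAMES_PER_SECOND = 100

for minutes in (1, 10, 30):
    n = minutes * 60 * FRAMES_PER_SECOND
    print(
        f"{minutes:>2} min | frames: {n:>9,} | "
        f"attention pairs: {attention_pairs(n):>16,} | "
        f"scan steps: {recurrent_steps(n):>9,}"
    )
```

Under this assumption, doubling the recording length doubles the work for a linear-time scan but quadruples it for full attention. In practice, transformer systems often work around this by processing short segments, which adds its own engineering overhead.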

Why Mamba Is a Strong Fit for AI Music Technology

Mamba is a selective State Space Model (SSM) designed for linear-time sequence processing. Rather than relying on attention across all time steps, it maintains a learned internal state that evolves dynamically with incoming audio frames.

From a deployment perspective, this provides:

- Linear inference time

- Stable memory consumption

- Efficient GPU execution

These characteristics are especially valuable when building scalable audio AI services.
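As a rough illustration of the "learned internal state" idea, the following is a minimal NumPy sketch of a selective state-space recurrence. It is a simplification, not the production model and not the reference Mamba implementation: the function name `selective_scan`, the projection matrices `W_delta`, `W_B`, `W_C`, and all shapes are assumptions chosen for readability, and real implementations use a hardware-aware parallel scan instead of a Python loop.

```python
import numpy as np


def softplus(z):
    """Numerically stable softplus, keeps the step size positive."""
    return np.logaddexp(0.0, z)


def selective_scan(x, A, W_delta, W_B, W_C):
    """Simplified selective SSM recurrence (illustrative only).

    x: (T, D) input frames; A: (D, N) state matrix with negative entries.
    W_delta: (D, D), W_B: (D, N), W_C: (D, N) are hypothetical projections
    that make the step size, input gate, and readout depend on the input.
    Returns y: (T, D).
    """
    T, D = x.shape
    N = A.shape[1]
    h = np.zeros((D, N))              # fixed-size state, independent of T
    y = np.empty((T, D))
    for t in range(T):
        delta = softplus(x[t] @ W_delta)      # (D,) input-dependent step size
        B_t = x[t] @ W_B                      # (N,) input-dependent write gate
        C_t = x[t] @ W_C                      # (N,) input-dependent readout
        A_bar = np.exp(delta[:, None] * A)    # (D, N) discretized decay
        h = A_bar * h + (delta[:, None] * B_t[None, :]) * x[t][:, None]
        y[t] = h @ C_t                        # (D,) output for this frame
    return y


# Toy usage with random parameters (not trained weights).
rng = np.random.default_rng(0)
T, D, N = 200, 4, 8
x = rng.standard_normal((T, D))
A = -np.exp(rng.standard_normal((D, N)))      # negative entries give decay
params = [0.1 * rng.standard_normal(s) for s in ((D, D), (D, N), (D, N))]
y = selective_scan(x, A, *params)
print(y.shape)
```

The loop touches each frame once and carries only the `(D, N)` state forward, which is where the linear inference time and stable memory footprint come from.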

Designing a Production-Oriented AI Music Transcription System

The transcription system follows a design approach we commonly apply in client projects:

- Spectrogram-based feature extraction

- Lightweight convolutional preprocessing

- Efficient long-range sequence modeling using Mamba

- Task-specific prediction heads

While more complex variants were explored, a unidirectional Mamba architecture with skip connections delivered the most reliable balance between accuracy, simplicity, and inference efficiency, which are key requirements for production systems.
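The first stage of the pipeline above, spectrogram-based feature extraction, can be sketched in a few lines of NumPy. This is a minimal example under stated assumptions, not the front end used in the project: the function name `log_spectrogram`, the 16 kHz sample rate, the 2048-sample window, and the 512-sample hop are all illustrative choices, and production systems often use mel-scaled or constant-Q features instead.

```python
import numpy as np


def log_spectrogram(audio, n_fft=2048, hop=512):
    """Log-magnitude STFT of a mono signal (illustrative parameters).

    audio: 1-D float array with at least n_fft samples.
    Returns an array of shape (num_frames, n_fft // 2 + 1).
    """
    window = np.hanning(n_fft)
    num_frames = 1 + (len(audio) - n_fft) // hop
    # Slice overlapping frames and apply the window to each one.
    frames = np.stack(
        [audio[i * hop : i * hop + n_fft] * window for i in range(num_frames)]
    )
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    return np.log1p(magnitude)  # compress dynamic range


# Toy usage: one second of a 440 Hz sine (A4) at an assumed 16 kHz rate.
sample_rate = 16000
t = np.arange(sample_rate) / sample_rate
spec = log_spectrogram(np.sin(2 * np.pi * 440.0 * t))
peak_bin = int(spec.mean(axis=0).argmax())
print(spec.shape, peak_bin * sample_rate / 2048)
```

Each row of the result is one time frame, which is the sequence the convolutional layers and the Mamba blocks would then consume.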

Accuracy Evaluation

System outputs were evaluated against ground truth MIDI annotations from the MAESTRO dataset. Metrics included onset timing, offset accuracy, frame-level alignment, and velocity estimation.
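For readers unfamiliar with onset-based scoring, the sketch below shows one common way to compute it: a predicted note counts as correct if a reference note of the same pitch starts within a small time tolerance. This is an illustrative simplification rather than the evaluation code used here. The 50 ms tolerance is a widely used convention that we assume for the example, the greedy matching stands in for the optimal matching that established evaluation libraries perform, and the function name `onset_f1` is hypothetical.

```python
def onset_f1(reference, estimated, tolerance=0.05):
    """Onset precision, recall, and F1 for lists of (pitch, onset_seconds).

    A predicted note matches at most one reference note with the same
    pitch whose onset lies within `tolerance` seconds (greedy matching).
    """
    unmatched = list(reference)
    true_positives = 0
    for pitch, onset in sorted(estimated, key=lambda note: note[1]):
        candidates = [
            ref for ref in unmatched
            if ref[0] == pitch and abs(ref[1] - onset) <= tolerance
        ]
        if candidates:
            best = min(candidates, key=lambda ref: abs(ref[1] - onset))
            unmatched.remove(best)  # each reference note is used once
            true_positives += 1
    precision = true_positives / len(estimated) if estimated else 0.0
    recall = true_positives / len(reference) if reference else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


# Toy usage: MIDI pitches with onset times in seconds (made-up values).
reference = [(60, 0.00), (64, 0.50), (67, 1.00)]
estimated = [(60, 0.01), (64, 0.58), (67, 1.02), (72, 1.50)]
print(onset_f1(reference, estimated))
```

In this toy case, two of the four predictions match (the E4 is 80 ms late and the C5 has no counterpart), so precision is 0.5 and recall is 2/3.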

The model consistently captured musical structure and timing, with most errors occurring in ambiguous regions such as soft notes or dense passages. While absolute benchmark scores did not exceed heavily optimized transformer models, performance was competitive given the significantly simpler architecture.

For client-facing AI services, this level of consistency and predictability is often more valuable than marginal benchmark gains.

Efficiency Gains That Enable Scalable AI Music Technology

Inference benchmarks show that the Mamba-based system transcribed 30 minutes of audio in approximately 1.3 seconds. That is an order-of-magnitude improvement over transformer-based approaches and more than 1,300 times faster than real time.
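The real-time figure follows directly from the two numbers quoted above. The short calculation below spells it out; the function name `real_time_speedup` is a hypothetical helper, and the inputs are the approximate values reported in this article, so the result should be read as approximate too.

```python
def real_time_speedup(audio_seconds: float, processing_seconds: float) -> float:
    """How many seconds of audio are processed per second of compute."""
    return audio_seconds / processing_seconds


audio_seconds = 30 * 60        # 30 minutes of audio
processing_seconds = 1.3       # approximate transcription time reported above

speedup = real_time_speedup(audio_seconds, processing_seconds)
print(f"{speedup:.0f}x faster than real time")  # prints "1385x faster than real time"
```

The same ratio can be read as throughput: at this rate, one hour of compute would cover well over a thousand hours of audio, assuming the speed holds for batch workloads.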

In production AI services, these gains translate directly into higher throughput, lower cloud costs, and the ability to support real-time user experiences.

Conclusion

Mamba-based architectures enable a shift toward deployable, efficient AI music technology. By combining competitive transcription quality with exceptional inference efficiency, this approach aligns well with the requirements of scalable audio AI services operating in real-world environments.

If you’re exploring how advanced architectures like Mamba can be applied beyond research, at It-Jim we help teams turn music AI ideas into real products. Our work spans music product prototyping and validation, music generation, music and video synchronisation, and custom plugin and tool creation. Learn more about our work on the Music Tech industry services page.

Have a project you’d like to discuss? Contact us below or reach out at hello@it-jim.com.