Video Game Review Summarization Using OpenAI GPT-3 Python API

Normally, computers only understand their own programming languages, like Python or C++. If you want to teach them human languages, like English, Ukrainian, or Japanese, it is possible, but it takes effort. The respective branch of science is called Natural Language Processing (NLP), which combines computer science (including machine learning and deep learning) with traditional linguistics.

In this article, we will walk you through a toy-level solution to a real and rather challenging NLP problem with a complete functional Python code. The article is generally beginner-level, but it requires basic knowledge of programming in Python. Minimal knowledge of machine learning and data science will not hurt either, but it is not strictly required. We are deliberately going to create a pipeline of several steps to demonstrate different techniques used in NLP, namely:

- Data pre-processing with the pandas library

- Performing zero-shot NLP tasks with GPT-3 via the OpenAI Python API

- Selecting relevant statements using traditional NLP (i.e., without neural networks)

- Using pre-trained modern neural networks, in our case Bert Extractive Summarizer (bert-extractive-summarizer), based on transformers and sentence-transformers.

Customer Review Summarization

Companies deal daily with tons of customer reviews. Having human readers process them all is not very cost-efficient, and here NLP comes to the rescue. We want to write code that analyzes video game reviews automatically. Given multiple (possibly hundreds or even thousands of) reviews of a single video game, we would like our code to create a list of five good things about this video game and a list of five bad things. Like this:

Good:

1. Amazing story

2. Rich and balanced skill tree

3. …

4. …

5. …

Bad:

1. Seriously outdated graphics

2. Frequent crashes on Windows 11

3. …

4. …

5. …

For our experiments, we will use a popular Steam Reviews dataset from Kaggle, which contains thousands of user reviews from Steam collected in 2017 (no modern games).

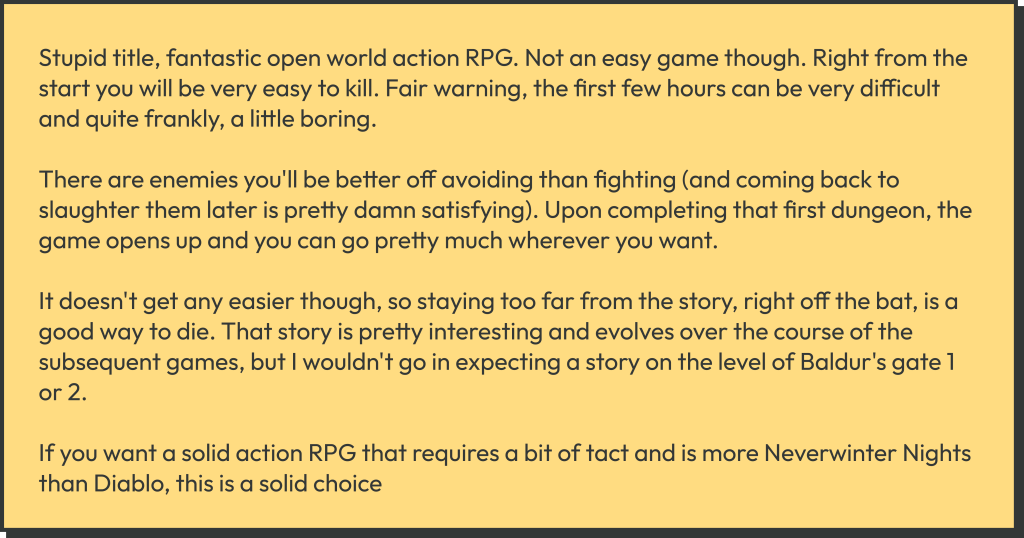

An example of a Steam Review

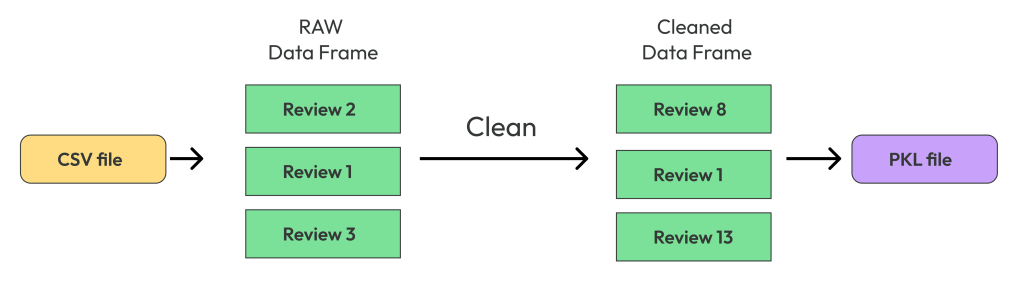

Chapter 1: Text Dataset Preprocessing with Pandas

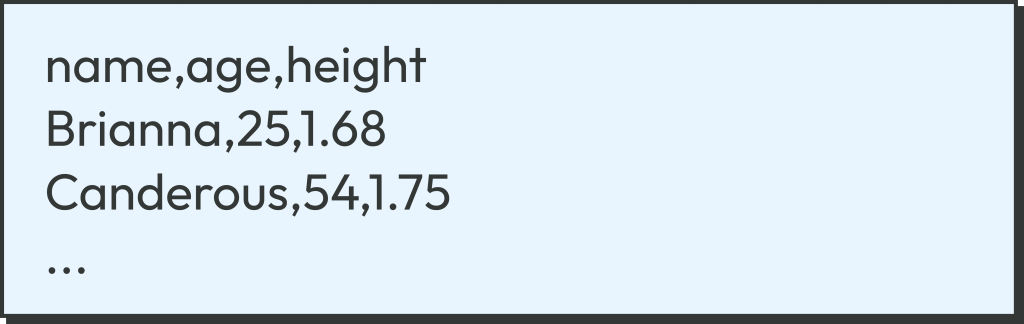

Let’s download the Steam Review dataset from this page. You will have to register on Kaggle to do it. Save the ZIP file somewhere on your hard drive and unzip it. You now have a whopping 2 GB CSV file called dataset.csv. Let’s have a look at our dataset. CSV stands for “comma-separated values”. CSV files look like this:

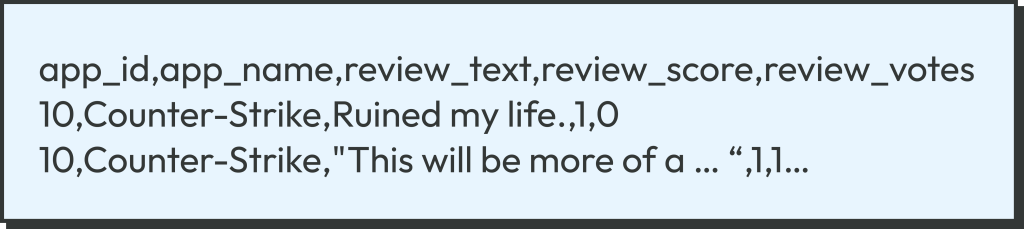

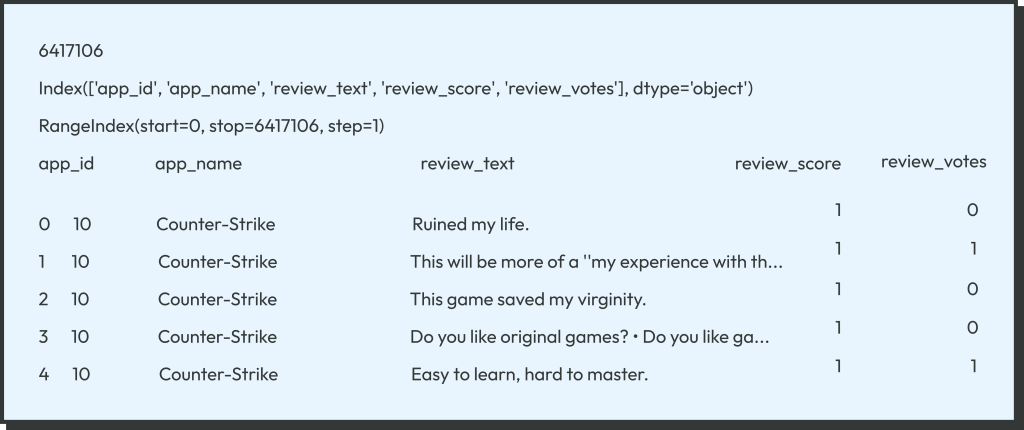

The first row contains column names, while the remaining rows contain the data, exactly one data record per line. The columns are separated by commas, hence the name of the format. If we look at the top of our monstrous CSV file, it looks like this:

There are five columns, but we are only interested in the first three. The dataset contains 6417106 (~6.4 million) reviews of 9972 (~10k) games. Note how the third column review_text can contain a long text. If the text contains commas, it has to be enclosed in double-quotes.
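The quoting rule can be checked with Python's built-in csv module. The following is a minimal sketch on two made-up rows; the column name app_name and the review texts are assumptions chosen for illustration, not rows from the actual dataset.

```python
import csv
import io

# Two made-up records; the second review contains commas, so it must be quoted.
rows = [
    {"app_id": "10", "app_name": "Toy Game", "review_text": "Ruined my life."},
    {"app_id": "10", "app_name": "Toy Game",
     "review_text": "Great story, bad controls, fair price."},
]

# Write the records as CSV text into an in-memory buffer.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["app_id", "app_name", "review_text"])
writer.writeheader()
writer.writerows(rows)
csv_text = buffer.getvalue()
print(csv_text)

# Reading the text back restores the original review, commas included.
parsed = list(csv.DictReader(io.StringIO(csv_text)))
print(parsed[1]["review_text"])
```

Only the field that contains commas ends up in double quotes; the short one-liner is written as-is.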

Why do we want to preprocess the dataset? There are several reasons.

- Algorithms, including neural networks, typically cannot work with raw data (like CSV), and the data has to be transformed.

- Data often has to be cleaned by removing the bad data.

In our case, we transform the data into the tabular DataFrame of the Pandas library, and clean it by removing reviews that are too short, like the “Ruined my life.” one-liner in the first row of the dataset. Let’s start coding. The first file, pipe1_clean_dset.py, corresponds to this chapter. Let us walk you through the code.

First, we read the CSV file into the Pandas DataFrame (table object).

df = pd.read_csv(DSET_PATH, keep_default_na=False)

You will have to replace DSET_PATH in the code with the actual path to the dataset.csv file on your hard drive. What is the flag keep_default_na? It assures that an empty cell (two commas in a row “,,”) will be interpreted as an empty string “” and not as a float NaN. This is important for us because some game IDs in our dataset have empty names.
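To see the effect of keep_default_na, here is a small sketch that parses a made-up two-row CSV from memory instead of the real file. The column name app_name and the cell values are assumptions for illustration only.

```python
import io

import pandas as pd

# A made-up two-row CSV; the first row has an empty app_name cell (",,").
csv_text = "app_id,app_name,review_text\n1,,Nice game\n2,Toy Game,Too short\n"

# Default behavior: the empty cell is parsed as a missing value (NaN).
df_default = pd.read_csv(io.StringIO(csv_text))

# With keep_default_na=False the empty cell stays an empty string.
df_keep = pd.read_csv(io.StringIO(csv_text), keep_default_na=False)

print(repr(df_default.loc[0, "app_name"]))
print(repr(df_keep.loc[0, "app_name"]))
```

With the flag set, string operations on the name column do not have to special-case missing values.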

Let’s look at our DataFrame (see Pandas documentation for more)

print(len(df))
print(df.columns)
print(df.index)
print(df.head())

We get the following output

Now let’s clean the dataset. We want to discard one-liners and keep only the more informative reviews, which are over 300 characters long. With Pandas this is easy:

rev_len = df['review_text'].apply(lambda s: len(s) if isinstance(s, str) else 0)
df_clean = df[rev_len >= 300]

The very useful method apply() of pd.Series (a single DataFrame column is a pd.Series) applies a function to each cell of the column, generating a new pd.Series, a column-like object. We then select rows with rev_len >= 300. The function is a Python lambda function in our case. In fact, if the column contains only good strings and no garbage, you can simply use the Python built-in len instead, like this:

rev_len = df['review_text'].apply(len)
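The difference between the two variants shows up as soon as the column contains something that is not a string. A minimal sketch on a made-up three-row column (the values are invented for illustration):

```python
import pandas as pd

# Made-up column: one long review, one one-liner, and one non-string value.
df_toy = pd.DataFrame({"review_text": ["x" * 350, "Ruined my life.", None]})

# Guarded length: anything that is not a string counts as length 0.
rev_len = df_toy["review_text"].apply(lambda s: len(s) if isinstance(s, str) else 0)

# Boolean mask selects only the rows with long enough reviews.
df_toy_clean = df_toy[rev_len >= 300]

print(rev_len.tolist())
print(len(df_toy_clean))
```

On this toy column, the plain apply(len) variant would be expected to raise a TypeError because of the missing value, which is why the guarded lambda is the safer default.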

After the cleaning, we have 1649359 ~ 1.6 million reviews remaining, about 25% of the original number. Finally, we save the cleaned dataset as the binary file temp/data_clean.pkl using the famous Python serializer Pickle. But first, we make sure that the subdirectory temp exists in the current directory:

pathlib.Path('temp').mkdir(exist_ok=True)

df_clean.to_pickle('temp/data_clean.pkl')

This is interesting. Usually, toy-level machine learning examples on the web do everything in a single Python (or, rather, Jupyter notebook) file, and data preprocessing (if any) is done on the fly. In contrast, in professional machine learning, the intermediate results are saved to disk as much as possible to save time. For example, the dataset can be preprocessed once and used for multiple training experiments. The intermediate data should be organized in a neat hierarchical directory structure, like the temp directory in our case (NEVER save multiple data files in your code directory). While our code is a toy example, we still wanted to show (in a toy version) some of the good practices of professional machine learning and data science.

By the way, does saving the cleaned dataset as the PKL file speed things up? Yes. Despite Pandas being a highly optimized library, parsing a 2 GB CSV file alone takes some time (over 15 s on the laptop we’re using), while deserializing a PKL file is very fast. Note that here we assumed that 2 GB worth of data will fit into your computer’s RAM, a reasonable assumption in 2023. But larger datasets will not fit into RAM, forcing us to process them incrementally, an interesting topic beyond the scope of this article.

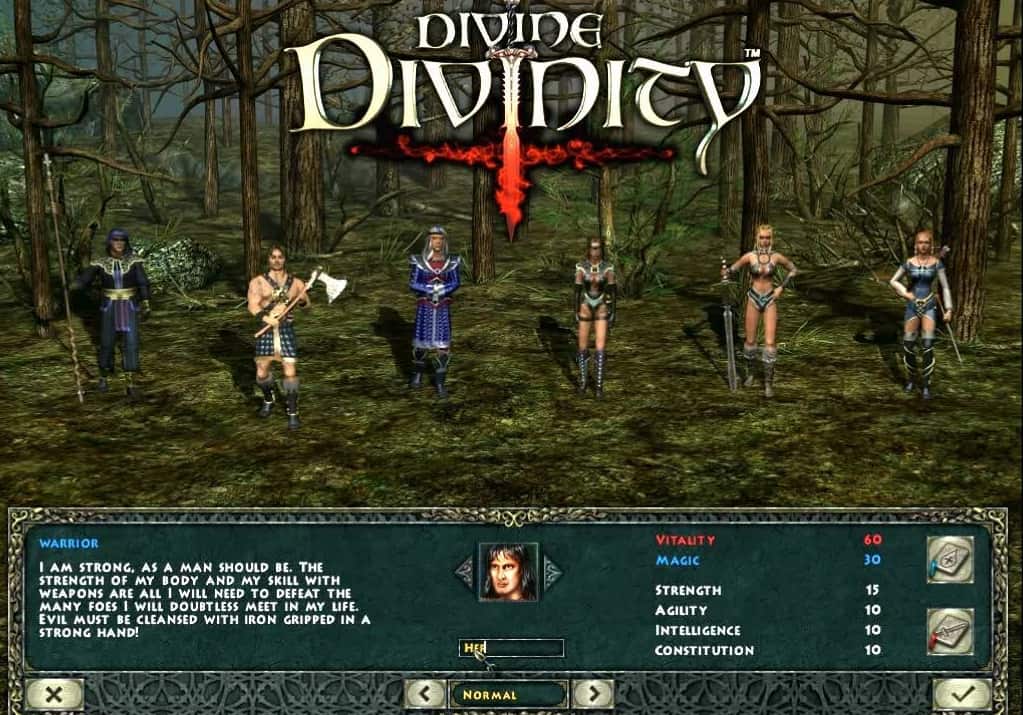

While our dataset contains almost 10k games, it would take a long time (and money, in the case of GPT-3) to process them all. So, let’s pick one game and stick with it. We chose the game called Divine Divinity, which has ID=214170 (the choice of the game is the author’s and is not based on any objective criteria). Selecting reviews by the ID is very easy:

app_id = 214170  # Divine Divinity
df_id = df_clean[df_clean['app_id'] == app_id]

Exercise: Use Pandas to find if your favorite video game is in the dataset and what its ID is.

Chapter 2: Summarizing Each Review with OpenAI GPT-3 Python API

Large Language Models and GPTs

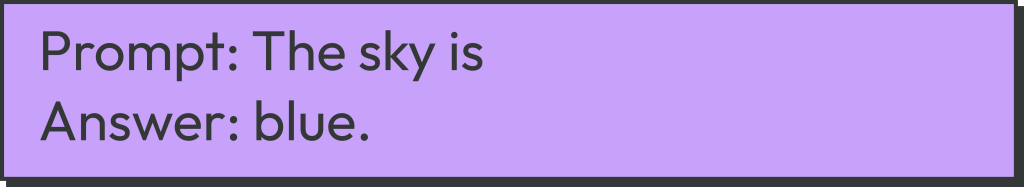

Large language models (LLMs) from OpenAI generated a lot of buzz in the media recently with the release of ChatGPT and later GPT-4. How do these models work? They are large neural networks (technically, transformer decoders) that are able to continue a given text, for example:

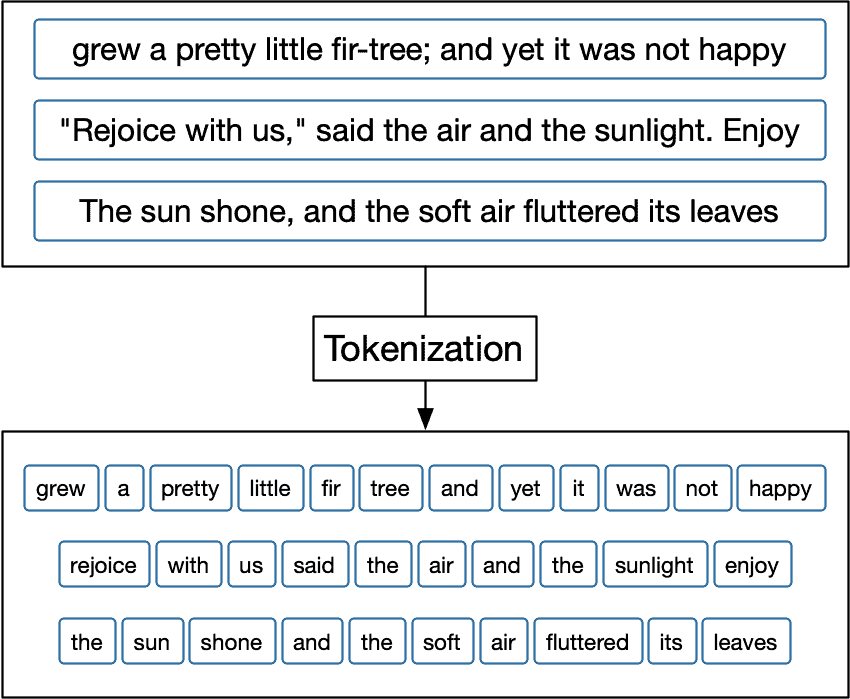

However, neural networks cannot process the text directly, so first, the text has to be tokenized, i.e., broken into separate tokens. The word “token” comes from transformer slang, and in the context of models like GPT or BERT, it basically means “word” (strictly speaking, a word or a fragment of a word).

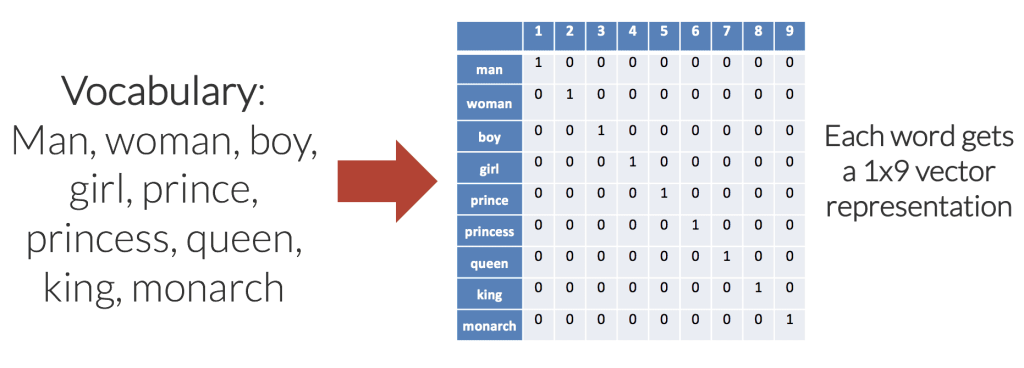

Tokenization is followed by embedding, where each word is replaced by either an integer number or a multi-dimensional floating-point vector from a pre-defined dictionary.

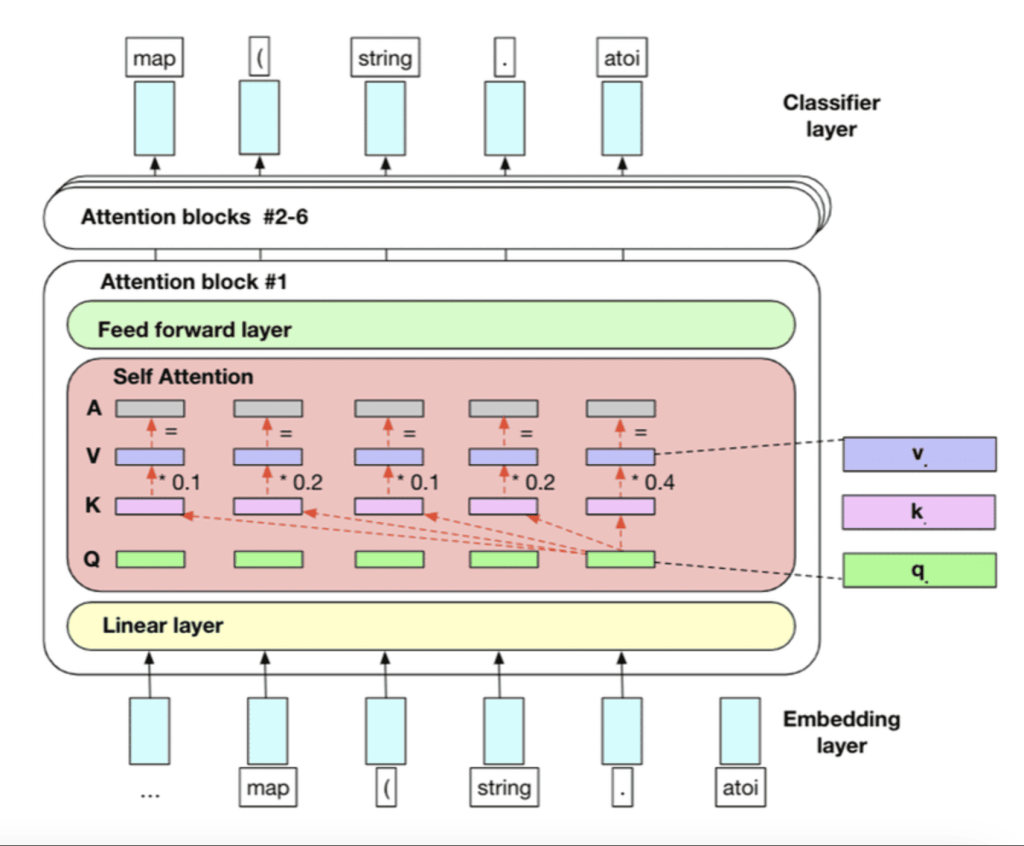

Finally, the word embeddings can be fed into the transformer model proper.
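The three steps (tokenization, integer IDs, embedding vectors) can be sketched in a few lines of plain Python. This is a toy illustration under strong assumptions: tokens are obtained by a naive whitespace split, the vocabulary is built from the sentence itself, and the embedding values are random numbers. Real models use trained subword tokenizers and learned embedding tables.

```python
import random

text = "the cat sat on the mat"

# 1. Tokenization: a naive whitespace split (real models use subword tokenizers).
tokens = text.split()

# 2. Integer IDs: look each token up in a vocabulary built from the text itself.
vocab = {word: idx for idx, word in enumerate(sorted(set(tokens)))}
token_ids = [vocab[t] for t in tokens]

# 3. Embeddings: one small vector per vocabulary entry (random, made-up values).
rng = random.Random(0)
EMBED_DIM = 4
embedding_table = [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in vocab]
embeddings = [embedding_table[i] for i in token_ids]

print(tokens)
print(token_ids)
print(len(embeddings), len(embeddings[0]))
```

Both occurrences of "the" map to the same ID and therefore to the same vector, which is the whole point of a fixed dictionary.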

GPT and Zero-Shot Tasks

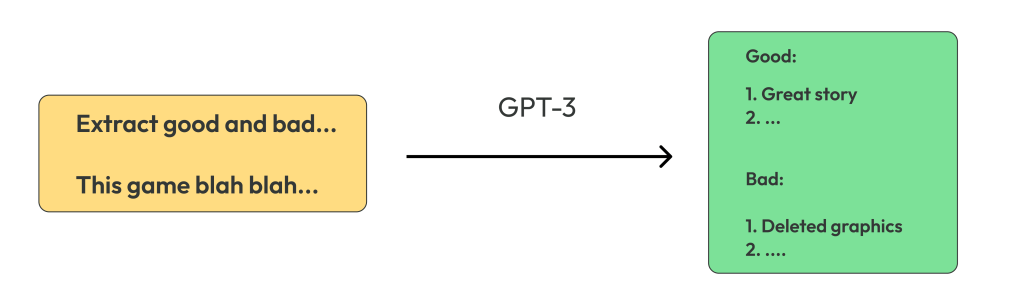

We can give you two pieces of news about GPT models: a good and a bad one. Good news: zero-shot tasks.

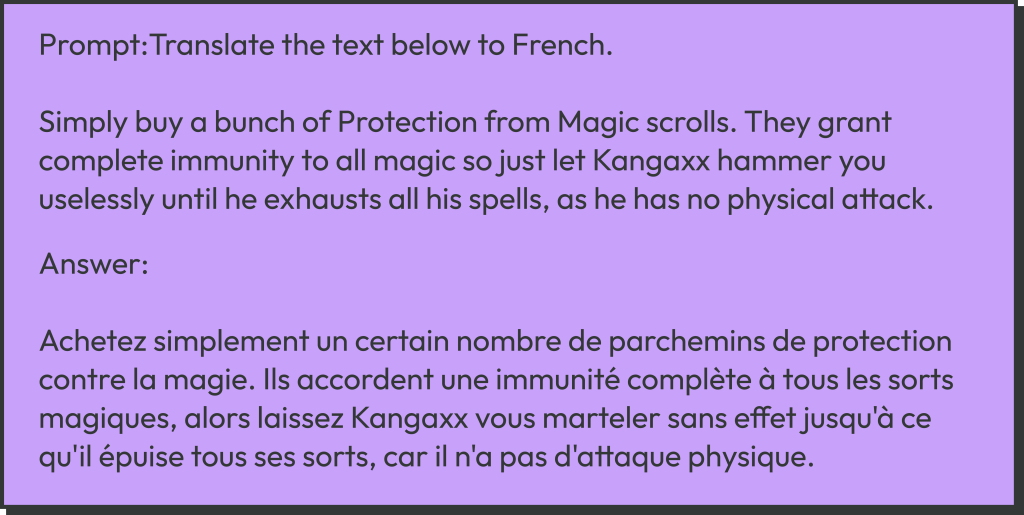

The ability of GPT models to generate text is the consequence of their being transformer decoders; other language models like BERT (a transformer encoder) aren’t able to do that. Older versions of GPT (1 and 2) could pretty much only continue the text in a meaningful way. However, modern versions can do much more than that. You can ask it, “What year was Shakespeare born?” and hopefully get an answer. Or it can process prompts like:

Or it can give you a completely different text, as GPT generation is somewhat random.

It means that GPT-3 and above can perform zero-shot NLP tasks, i.e., tasks it is not explicitly trained to perform, without any neural network training or finetuning. In many cases, it can perform on par with or even better than models specifically trained for a given narrow task (e.g., translation); this is especially true for GPT-4.

Using OpenAI GPT-3 Python API for Summarization

However, there is a price for such power. These models are huge, far too big to run on a single desktop or laptop machine. For this reason, and also because of secrecy, OpenAI currently does not release GPT-3 and above publicly. While you can easily download and run GPT-2 on your computer (e.g., with the Hugging Face transformers framework), you can only run GPT-3 on OpenAI servers using the OpenAI API. What does it mean in practice?

- You have to pay money for each GPT-3 API call (although you get $15 of free credit when you register)

- You require an internet connection, plus OpenAI servers occasionally suffer outages

- In some countries, the OpenAI API is not available (though the situation is improving)

If you want to use GPT-3 in your application, decide for yourself whether or not such a business model suits you.

Before we continue, please sign up for OpenAI (if you haven’t already) and try GPT-3 at the GPT-3 Playground here. Have fun with different prompts. Note that there are actually four (at the moment of writing this) different GPT-3 versions, from smallest to largest: ada, babbage, curie, davinci. Note also the two main parameters: temperature and max length. Next, go to your OpenAI account page and generate an API key. Be careful not to lose the key; save it into a text file on your computer.

This is all very nice, but how can we use such models in Python? For that, we need the OpenAI Python API, which is a thin wrapper around the OpenAI Web API. We can try it out in fun_openai.py, and we encourage you to play around with it. The API is very simple.

First, read your API key from the file (change KEY_FILE to the actual path to your file).

with open(KEY_FILE, 'r') as f:
    key = f.read().strip()
openai.api_key = key

Warning! Never include your key in the code itself! Next, send your prompt to the OpenAI servers and wait for the answer

prompt = 'Translate this to French ...'

response = openai.Completion.create(model="text-davinci-003",
                                    prompt=prompt, temperature=0.7, max_tokens=256)

result = response['choices'][0]['text']

print(result)

This works exactly like the GPT-3 playground you tried before. Once again, you have two main parameters:

temperature : Higher temperature means GPT-3 is more random and more creative

max_tokens : Maximal number of tokens (roughly, words or word fragments) the model is allowed to generate

Note that we are not doing any tokenization or text embedding here; GPT-3 does this for us on the OpenAI servers.
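To get a feeling for what temperature does, here is a sketch of the textbook temperature-scaled softmax, which turns raw next-token scores into sampling probabilities. It is an assumption that this captures the essence of what happens on the servers; the scores below are made up, and the function name softmax_with_temperature is ours.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate next tokens.
logits = [2.0, 1.0, 0.5]

for t in (0.2, 0.7, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])

p_low = softmax_with_temperature(logits, 0.2)
p_high = softmax_with_temperature(logits, 1.5)
```

At a low temperature almost all probability mass sits on the best-scoring token, so the output is nearly deterministic; at a higher temperature the less likely tokens get a real chance of being picked.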

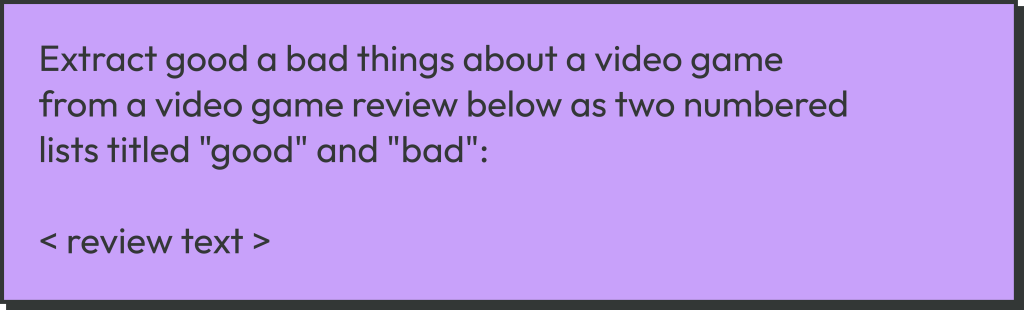

But what exactly do we want GPT-3 to do for us? We want to use it to perform a zero-shot NLP task: extract a good and bad list (separately) from every single review. This is similar to summarization but much more complicated. To do that, we use the following prompt:

GPT-3 processes our prompt

You can try to tweak this prompt if you want (after all, “prompt engineering” is all the rage nowadays), but this one is already good enough. It produces two lists in basically 100% of cases with the davinci model. Smaller models are not smart enough to follow such a prompt reliably, so we will use davinci. But now comes the bad news we promised you.

Exercise: Try different prompts with video game reviews.

Ouch: GPT Hallucinates!

When you start playing with GPT-3, you will quickly realize that, while it can perform various tasks remarkably well, it is far from perfect. While GPT-3-generated texts tend to be grammatically well-written and highly self-confident in tone, they often contain fantasies instead of facts. People say that “GPT is hallucinating” (official OpenAI terminology), or, in a less formal language, GPT-3 is a “notorious bullshitter”. By the way:

Why does GPT-3 lie? Because it does not know the truth and doesn’t even have a concept of “truth”. While LLMs are extremely good at working with text, they are trained on nothing but text and have no consistent “world model” outside the realm of text. They are super-good with text, but they don’t really think. We recommend an excellent video with Yann LeCun or this article on this topic.

Does the fact that GPT lies decrease its usefulness for applications? It depends on the application, but we would not use GPT for anything with high ethical or judicial responsibility. It might sometimes be possible to filter GPT results using various NLP techniques, but generally, it’s comparable in difficulty with the original problem we are trying to solve.

An example of hallucination can be seen with our “Extract good a bad things about …” prompt if we put the one-liner “Ruined my life.” there. The model gives something like (the actual result is random)

This is your typical GPT: When there is not enough information, it makes things up that look plausible but contain no factual truth. Moreover, as GPT-3 was trained on a huge corpus that contained video-game-related texts among other things, it can make up stuff that uses the proper terminology and superficially looks like a real thing.

Running Our Dataset through GPT-3

Or rather not the entire dataset, but a single game. The code is in pipe2_run_gpt3.py, and it’s very similar to fun_openai.py. First, we load the cleaned-and-pickled dataset from the previous step and select Divine Divinity by the ID.

df_clean = pd.read_pickle('temp/data_clean.pkl')

df_id = df_clean[df_clean['app_id'] == APP_ID]

We run over all reviews of this game, up to IDX_END=100 of them.

for idx in range(IDX_START, min(IDX_END, len(df_id))):
    text = df_id.iloc[idx]['review_text']

For each text, we form a prompt and run it through GPT-3 API:

prompt = 'Extract good a bad things about a video game from a video game review below as two numbered lists titled "good" and "bad":'

prompt = prompt + '\n\n' + text

response = openai.Completion.create(model=MODEL_GPT3, prompt=prompt,
                                    temperature=TEMPERATURE, max_tokens=256)

result = response['choices'][0]['text']

Finally, we save the result to a disk file in our temp directory:

p_out_file = p_out_dir / f'{idx:05d}.txt'

with open(p_out_file, 'w') as f:
    f.write(result)
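Since every API call costs money and the servers occasionally fail, it can be worth wrapping the per-review step so that already processed reviews are skipped and failed calls are retried. The following is only a sketch and not part of pipe2_run_gpt3.py: process_review, call_model, max_retries and wait_s are hypothetical names, and call_model stands for any function that sends one review to the model and returns the answer text. The demo uses a fake model, so no network is involved.

```python
import pathlib
import tempfile
import time


def process_review(idx, text, out_dir, call_model, max_retries=3, wait_s=1.0):
    """Write the model output for one review unless it is already on disk."""
    out_file = pathlib.Path(out_dir) / f"{idx:05d}.txt"
    if out_file.exists():
        return False  # already processed (and paid for), nothing to do
    for attempt in range(max_retries):
        try:
            result = call_model(text)
            break
        except Exception:
            if attempt == max_retries - 1:
                raise  # give up after the last attempt
            time.sleep(wait_s * (attempt + 1))  # simple linear back-off
    out_file.write_text(result, encoding="utf-8")
    return True


# Demo with a fake model that fails once, standing in for the real API call.
calls = []


def fake_model(text):
    calls.append(text)
    if len(calls) == 1:
        raise RuntimeError("simulated outage")
    return "GOOD\n1. Nice story"


with tempfile.TemporaryDirectory() as tmp:
    first = process_review(0, "some review", tmp, fake_model, wait_s=0.0)
    second = process_review(0, "some review", tmp, fake_model, wait_s=0.0)
    saved = (pathlib.Path(tmp) / "00000.txt").read_text(encoding="utf-8")

print(first, second, len(calls))
```

The second call returns immediately because the output file already exists, so rerunning the script after a crash does not repeat work that has been paid for.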

Chapter 3: NLP from Scratch: Truth/Lie Filtering after GPT-3

In this chapter, we will try in pipe3_aggregate_good_bad.py to filter the “good” and “bad” items generated by GPT-3 to keep only truthful statements. We will also aggregate the results from all reviews into two long lists: “good” and “bad”. True to our “sequential pipeline” logic, we will not try to do it on the fly but work on disk files generated at the previous step. The advantage of such an approach is obvious: you can run GPT-3 (and pay money) only once and then experiment endlessly with filtering. The filtering task itself is very similar to natural language inference: to check if a given “hypothesis” follows from the “premise”.

N-Grams from Scratch

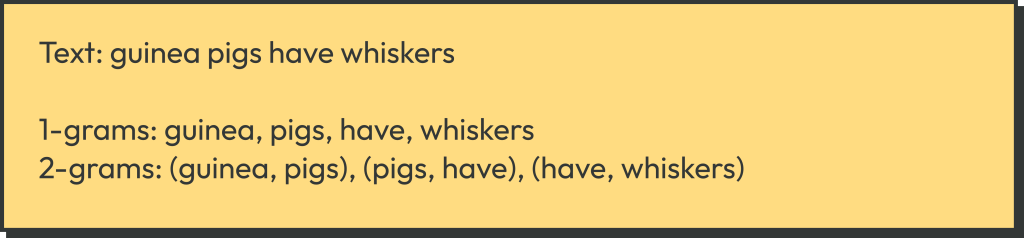

In this chapter, we will deliberately NOT use any LLMs, even moderate-sized ones like BERT or GPT-2, but instead, we will show how to write the simplest possible NLP models from scratch in Python. For this, we are going to use N-grams. An N-gram is a tuple of N consecutive words, e.g., 1-grams (unigrams) are simply words, and 2-grams (bigrams) are word pairs. For example, let’s find all 1-grams and 2-grams in the following text:

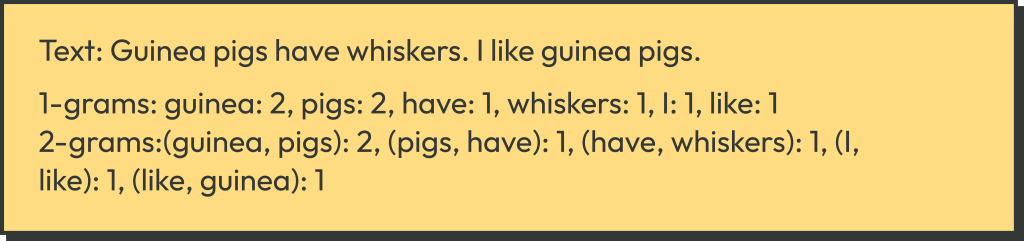

An N-gram model simply finds all N-grams in a text and builds a multiset of all N-grams and their repetitions. For 1-grams, this is known as the Bag of Words (BoW) model. N-grams are typically not allowed to cross sentence boundaries. For example:

We calculate N-grams in calculate_n_grams():

import re

def calculate_n_grams(text: str, n: int):
    """Calculate the set of n-grams of a text; the text is first broken into sentences."""
    assert isinstance(text, str)
    # Split into sentences on '.', '?' and '!'
    sentences = re.split(r'[.?!]', text)
    # Lowercase and remove punctuation (letters, digits, underscores and whitespace are kept)
    sentences = [re.sub(r'[^\w\s]', '', s.lower()) for s in sentences]
    ngrams = set()
    for line in sentences:
        line_split = line.split()
        # Slide a window of n words over the sentence
        for i in range(len(line_split) - n + 1):
            ngrams.add(tuple(line_split[i: i+n]))
    return ngrams

First, we split the string into a list of sentences with regular expressions. Next, we put all words in lowercase and remove punctuation (the pattern [^\w\s] keeps letters, digits, underscores, and whitespace). Finally, we find all actual N-grams. We are using a Python set here, as we don’t need counts for our algorithm, but if you do, you can use collections.Counter or multiset.Multiset.
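For readers who do need the counts, here is a minimal sketch of the same idea with collections.Counter. The function name count_n_grams and the sample text are ours, made up for illustration; the splitting and cleaning steps mirror the ones described above.

```python
import re
from collections import Counter


def count_n_grams(text: str, n: int) -> Counter:
    """Count how often each n-gram occurs, without crossing sentence boundaries."""
    counts = Counter()
    # Split into sentences, then lowercase and strip punctuation in each one.
    for sentence in re.split(r"[.?!]", text):
        words = re.sub(r"[^\w\s]", "", sentence.lower()).split()
        for i in range(len(words) - n + 1):
            counts[tuple(words[i:i + n])] += 1
    return counts


sample = "The game is good. The story is good!"
unigrams = count_n_grams(sample, 1)
bigrams = count_n_grams(sample, 2)

print(unigrams[("good",)])
print(bigrams[("is", "good")])
print(("good", "the") in bigrams)
```

The pair ("good", "the") is absent because the two words belong to different sentences, even though they are adjacent in the raw text.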

Truth/Lie Filtering using N-Grams

To filter out the lies from the GPT-3 results (created at step 2 of the pipeline), we need texts from the processed dataset (created at step 1 of the pipeline). We run a loop over all reviews:

for idx in range(len(df_id)):
    p_txt = p_data_dir / f'{idx:05d}.txt'
    if not p_txt.exists():
        continue
    # Extract good + bad from a file
    list_good, list_bad = extract_good_bad(p_txt)

First, we extract two lists of strings (good and bad) in our function extract_good_bad(). This function is somewhat ugly, as the GPT output format is not perfectly stable, e.g., it can randomly put a blank line after a header “Good” or not. But conceptually, it’s pretty simple; see the code for details.
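To give an idea of what such a parser has to cope with, here is a simplified sketch. It is not the extract_good_bad() function from the article's code: the name parse_good_bad, the set of list markers it strips, and the sample GPT output are our own assumptions, and it works on a string rather than on a file.

```python
import re


def parse_good_bad(gpt_output: str):
    """Split a GPT answer into two lists of items under 'good' and 'bad' headers."""
    lists = {"good": [], "bad": []}
    current = None
    for raw in gpt_output.splitlines():
        line = raw.strip()
        if not line:
            continue  # tolerate blank lines anywhere
        header = line.rstrip(":").lower()
        if header in lists:
            current = header  # switch to the list named by the header
            continue
        if current is None:
            continue  # ignore anything before the first header
        # Drop a leading "1.", "2)" or bullet marker, if present.
        item = re.sub(r"^(\d+[.)]|[-*•])\s*", "", line)
        if item:
            lists[current].append(item)
    return lists["good"], lists["bad"]


# Made-up model output with an optional blank line after the first header.
sample_output = """Good:

1. Interesting story
2. Requires tact and strategy

Bad:
1. Stupid title
"""

good, bad = parse_good_bad(sample_output)
print(good)
print(bad)
```

Treating blank lines as noise and matching headers case-insensitively covers the most common format variations; a production parser would likely need more special cases.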

Once we have the two lists, we check each item in the “good” list for truthfulness. If true, it is added to the aggregated (over all reviews) list list_total_good. The same is repeated for the “bad” list. But before that, we calculate the 1-grams and 2-grams for text, the original review.

ng_t_1 = calculate_n_grams(text, 1)
ng_t_2 = calculate_n_grams(text, 2)
list_good = filter_irrelevant(ng_t_1, ng_t_2, list_good)
list_bad = filter_irrelevant(ng_t_1, ng_t_2, list_bad)
list_total_good.extend(list_good)
list_total_bad.extend(list_bad)

The actual filtering takes place in filter_irrelevant()

def filter_irrelevant(t1, t2, candidates):
    """Filter a list of candidates"""
    res = []
    for bc in candidates:
        ng_bc_1 = calculate_n_grams(bc, 1)
        ng_bc_2 = calculate_n_grams(bc, 2)
        if score_ngrams(t1, t2, ng_bc_1, ng_bc_2, None):
            res.append(bc)
    return res

For each line of the list (aka “candidate”), we calculate the N-grams of this line and score the line against the N-grams of the text (the actual review)

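import numpy as np

def score_ngrams(t1, t2, b1, b2, b_text=None):
    """Accept a candidate if enough of its 1-grams and 2-grams occur in the review."""
    p1 = calc_percentage(t1, b1)
    p2 = calc_percentage(t2, b2)
    res = p1 > 0.7 and p2 > 0.2
    return res

def calc_percentage(t, b):
    """Fraction (0 to 1) of the n-grams in b that are also present in t."""
    if len(b) == 0:
        return 0
    b = list(b)
    f = [(x in t) for x in b]
    return np.mean(f)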

The idea is simple. If a short sentence is extracted from a longer text, then most of its N-grams will be present in the original text. If the sentence is hallucinated, it will contain words and 2-grams not from the original text. We take the N-grams (N=1, 2) t1, t2 of the text and b1, b2 of the good/bad list line and calculate the fractions p1, p2 of N-grams of the line present in the text. If the two numbers are sufficiently large (above 0.7 and 0.2, respectively, in our code), we accept such a line.
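The scoring can be traced by hand on a tiny example. The sketch below re-implements the two steps in plain Python (without NumPy) so that it runs on its own; the review sentence and the three candidate lines are made up, and the helper names n_grams and fraction_found are ours.

```python
import re


def n_grams(text, n):
    """Set of n-grams of a text, computed sentence by sentence."""
    grams = set()
    for sentence in re.split(r"[.?!]", text):
        words = re.sub(r"[^\w\s]", "", sentence.lower()).split()
        for i in range(len(words) - n + 1):
            grams.add(tuple(words[i:i + n]))
    return grams


def fraction_found(text_grams, line_grams):
    """Fraction of the candidate's n-grams that also occur in the text."""
    if not line_grams:
        return 0.0
    return sum(g in text_grams for g in line_grams) / len(line_grams)


review = "The story is interesting and the combat requires tact and strategy."
candidates = [
    "Requires tact and strategy.",  # taken almost verbatim from the review
    "Poor graphics.",               # not mentioned in the review at all
    "Combat requiring strategy.",   # true, but worded differently
]

results = {}
for line in candidates:
    p1 = fraction_found(n_grams(review, 1), n_grams(line, 1))
    p2 = fraction_found(n_grams(review, 2), n_grams(line, 2))
    results[line] = p1 > 0.7 and p2 > 0.2
    print(f"{line!r}: p1={p1:.2f} p2={p2:.2f} accepted={results[line]}")
```

The first line is accepted and the invented one is rejected, as intended. The third line is rejected too, although it is true: "requiring" does not match "requires", which illustrates the kind of error a purely word-based filter makes.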

For example, let’s look at the review with index 2:

It’s a pretty good informative review, and GPT-3 works reasonably well. Our N-gram model accepts all lines in the list but one:

GOOD

Satisfying to come back and slaughter enemies. –

Interesting story that evolves over the course of the game. +

Requires tact and strategy. +

BAD

Stupid title. +

Very easy to kill right from the start. +

First few hours can be difficult and boring. +

The one rejected line is actually a false positive (due to the crudeness of our method and the misspelled “ememies”); it should have been accepted.

The opposite example is the review with index 0. As happens very often on Steam, the review, while rather poetic, contains no factual information about the game:

Such reviews really trigger GPT-3 into the mass-hallucination mode. However, our N-gram filter could reject 100% of the made-up lines.

GOOD

Fun and engaging gameplay –

Interesting story –

Variety of enemies –

BAD

Limited replayability –

Poor graphics –

Unbalanced difficulty –

Our N-gram model actually works quite well for such a trivial model. And its faults are quite predictable: it doesn’t handle synonyms, word endings (come vs. coming) and misspelled words (“ememies”). Of course, in the decades of NLP history, much better models were created.

If you want to use traditional (i.e., without deep learning) NLP methods in real projects, don’t implement them from scratch! Instead, use one of the existing Python libraries, such as NLTK, spaCy, or GenSim.

Chapter 4: Extractive Text Summarization with BERT-Extractive-Summarizer

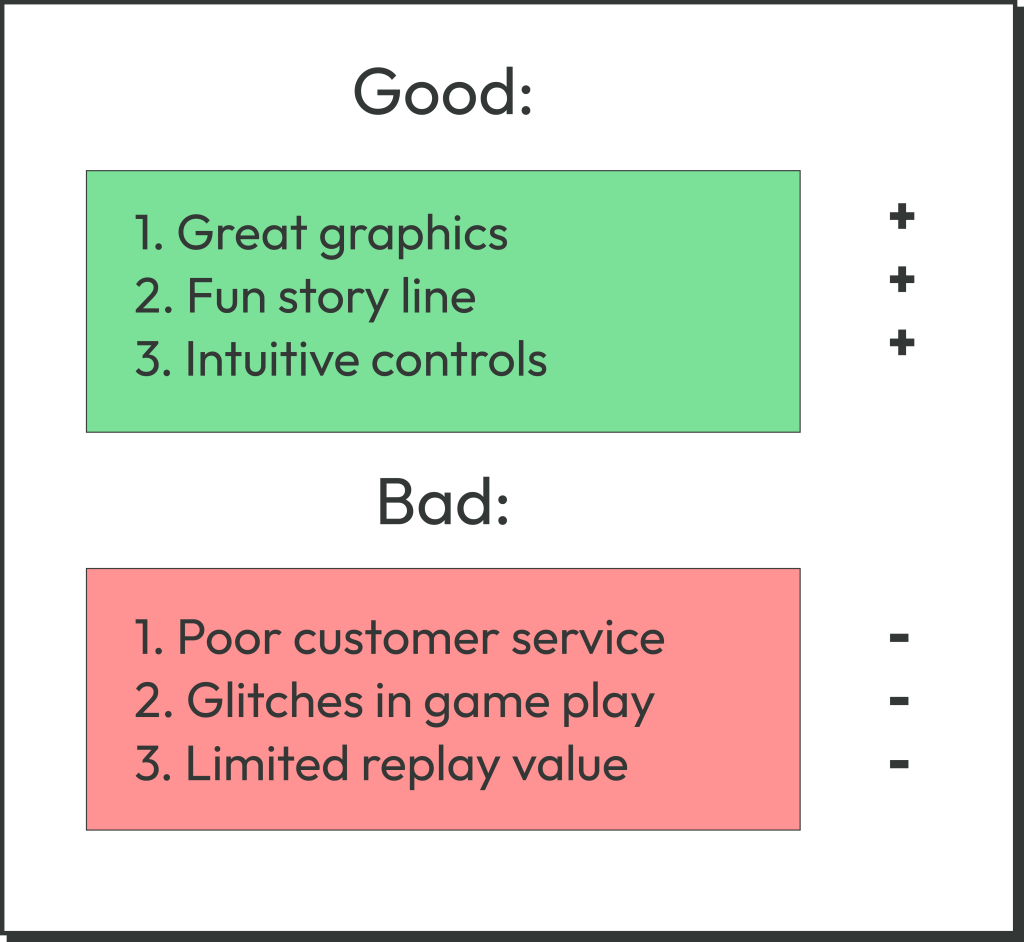

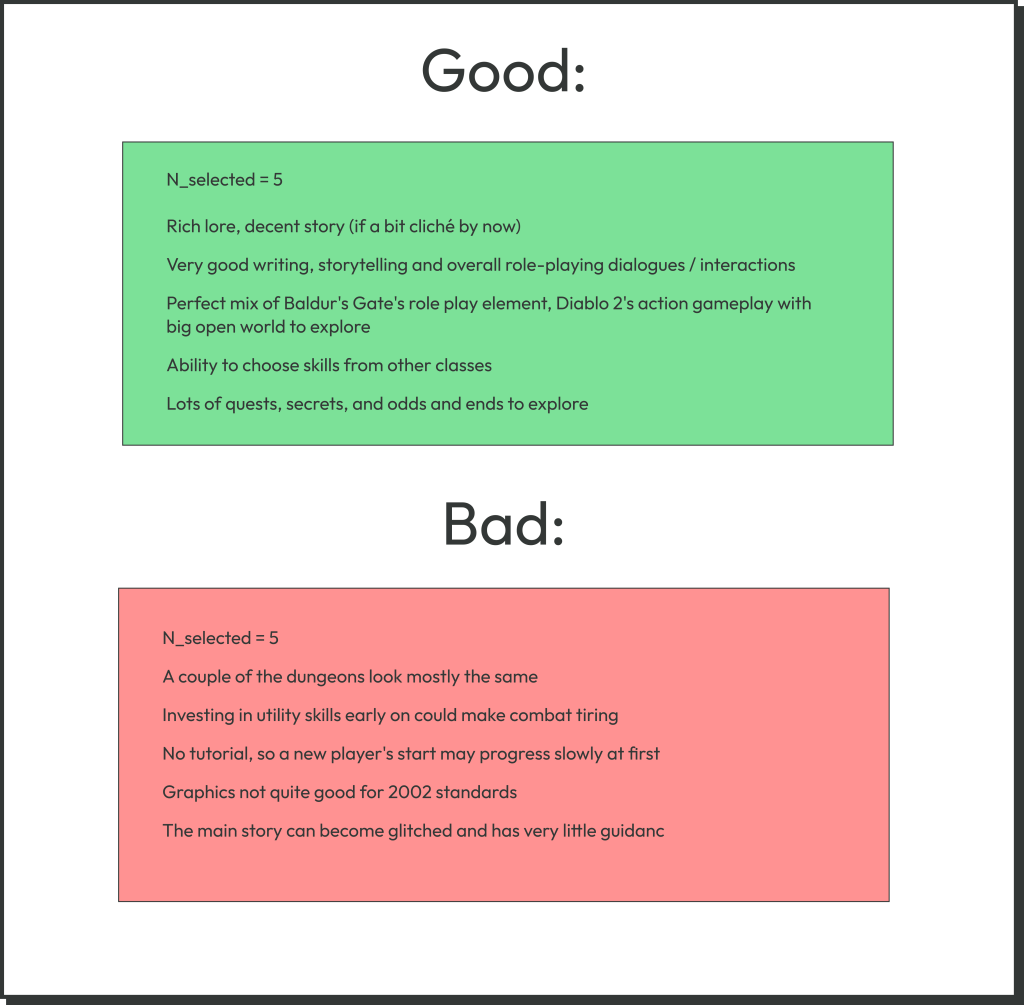

After the previous stage of the pipeline, we have two filtered lists: GOOD and BAD, aggregated over all reviews (of one game) and saved as two disk files. Now we want to summarize each list by selecting five “most typical” lines from each.

Extractive vs. Abstractive Text Summarization in Python

There are two main ways to summarize a text. Extractive summarization selects the most representative bits of the original text verbatim. It usually works on the level of whole sentences.

In contrast, abstractive summarization uses generative models (typically LLM-based) to generate a free-form summary from the original text. The Python framework transformers from Hugging Face has a number of such models. We (at IT-JIM) have tried such models on both individual video game reviews and aggregated lists and found the results unsatisfactory. In particular, just like GPT, abstractive summarization models are very prone to hallucinations, especially when the input text is too short or not very informative.

In this section, we use extractive summarization instead, using the library bert-extractive-summarizer.

Extractive Summarization with Bert-Extractive-Summarizer

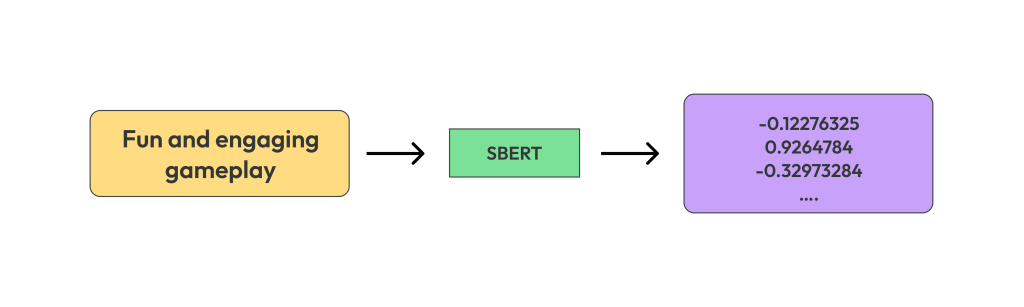

This library uses the Sentence BERT model from the library sentence-transformers under the hood. The model is automatically downloaded from the hub and cached. The idea is the following. Sentence BERT processes a sentence and produces a multi-dimensional numerical embedding vector. The more similar in meaning the sentences are, the closer their embeddings are (as measured by cosine similarity).
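Cosine similarity itself is a short formula: the dot product of two vectors divided by the product of their lengths. Here is a minimal sketch in plain Python; the three-dimensional "embeddings" are made-up numbers, whereas real Sentence BERT vectors have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1 means the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up embeddings: two sentences about the story, one about crashes.
emb_story_a = [0.9, 0.1, 0.0]
emb_story_b = [0.8, 0.2, 0.1]
emb_crashes = [0.0, 0.1, 0.9]

sim_related = cosine_similarity(emb_story_a, emb_story_b)
sim_unrelated = cosine_similarity(emb_story_a, emb_crashes)
print(sim_related)
print(sim_unrelated)
```

The two "story" vectors point in almost the same direction and score close to 1, while the "crashes" vector scores close to 0.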

bert-extractive-summarizer can also use BERT models from the transformers library. BERT produces an embedding of each word (instead of the sentence), and these word embeddings are later averaged to get a sentence embedding.

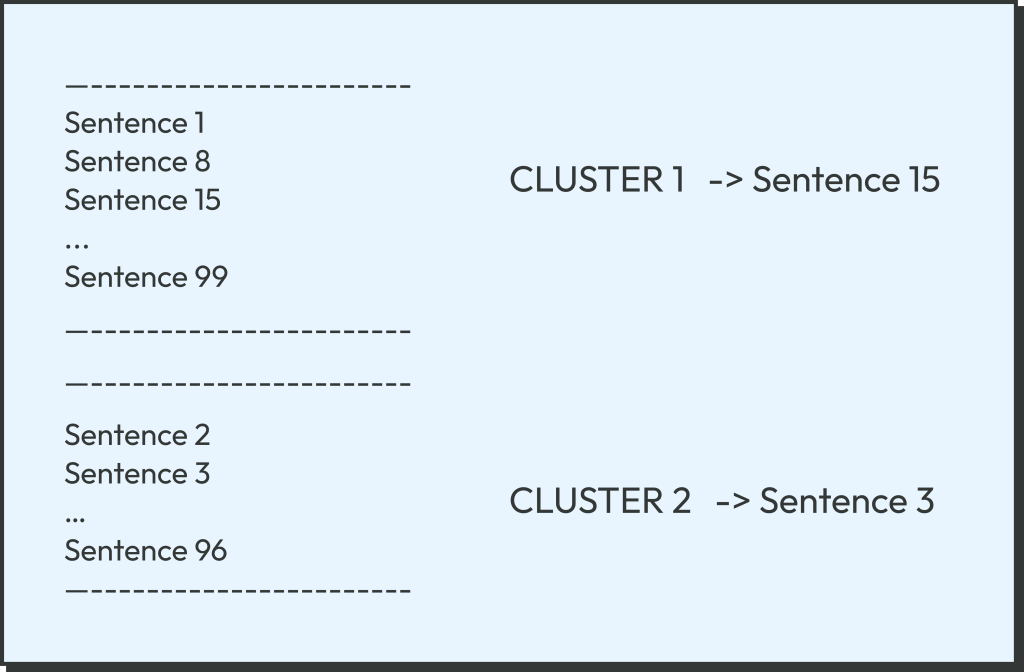

After we get the sentence embeddings (numerical vectors), the library clusters these embeddings by similarity, using a variation of the K-means clustering.

Finally, it selects the most representative sentence from each cluster, i.e., the one closest to the cluster center.
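The cluster-then-pick-the-closest idea can be sketched with a tiny hand-written K-means. This is an illustration only, not the library's implementation: the sentences, their two-dimensional "embeddings", the starting centroids, and the helper names are all made up, and the library's actual clustering may differ in its details.

```python
import math


def distance(a, b):
    """Euclidean distance between two points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest(point, centroids):
    """Index of the centroid closest to the point."""
    return min(range(len(centroids)), key=lambda c: distance(point, centroids[c]))


def k_means(points, start_centroids, n_iter=10):
    """Plain K-means from given starting centroids; returns (centroids, labels)."""
    centroids = [list(c) for c in start_centroids]
    for _ in range(n_iter):
        labels = [nearest(p, centroids) for p in points]
        for c in range(len(centroids)):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    labels = [nearest(p, centroids) for p in points]
    return centroids, labels


sentences = ["Great story", "Amazing plot", "Interesting story line",
             "Frequent crashes", "Crashes on startup", "Crashes a lot"]
# Made-up 2-D embeddings: first axis ~ "story", second axis ~ "crashes".
embeddings = [[1.0, 0.1], [0.8, 0.3], [0.9, 0.2],
              [0.1, 1.0], [0.2, 0.9], [0.3, 0.8]]

centroids, labels = k_means(embeddings, [embeddings[0], embeddings[3]])

# From each cluster, pick the sentence whose embedding is closest to the center.
representatives = []
for c, centre in enumerate(centroids):
    members = [i for i, lab in enumerate(labels) if lab == c]
    best = min(members, key=lambda i: distance(embeddings[i], centre))
    representatives.append(sentences[best])

print(labels)
print(representatives)
```

With two clusters, the sketch returns one "story" sentence and one "crashes" sentence, which is exactly the behavior we want from a five-line summary of a long list: one typical line per topic.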

In the last part of our code, pipe4_cluster_good_bad.py, we read the good list (the bad list is processed later in the same way) and run it through bert-extractive-summarizer. But first, we have to convert the list into a single long string separated by the period (“.”) characters while removing any period characters inside the list items themselves.

lines = [line[:-1] if line.endswith('.') else line for line in lines]

lines = [line.replace('.', ',') + '.' for line in lines]

joined_lines = ' '.join(lines)
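Here is what this preprocessing does to a small made-up list (the three items are invented for illustration). The sketch uses endswith() for the trailing-period check so that an empty item would not raise an error.

```python
# Made-up list items; the second one has a period in the middle.
lines = ["Interesting story.",
         "Runs well on Windows 7. Crashes on Windows 10",
         "Great music"]

# Drop one trailing period, if any.
lines = [line[:-1] if line.endswith('.') else line for line in lines]
# Replace inner periods with commas, then end every item with a period.
lines = [line.replace('.', ',') + '.' for line in lines]
joined_lines = ' '.join(lines)

print(joined_lines)
```

After this step every period in the string marks the end of exactly one list item, so a sentence splitter cannot cut an item in two.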

Next, we create an SBertSummarizer model (based on SBERT) and run our text through it:

model = summarizer.sbert.SBertSummarizer()
result = model(joined_lines, num_sentences=5)

This is it! The result contains five lines, as requested. This is the final result of our entire pipeline. For us, it looks like this:

This is the end. We hope we were able to interest you in NLP and not scare you away with too many technical details.