WebAR Development and Deployment: Cloud-Based or Serverless?

Businesses increasingly turn to augmented reality (AR) to enhance the physical world with virtual content, connect real life with the digital world, and make that interaction immersive. In many cases, however, users must first install a dedicated mobile application. Would it not be easier and faster for a user to get AR directly in a browser? So-called WebAR provides that instant immersion.

Computer Vision Solutions for Marker-Based Augmented Reality

We want to run AR applications directly on the web and overlay virtual objects on real-world objects, which are called markers. Let’s skip the “web” part for now and quickly walk through the main stages of marker-based AR.

To render AR models correctly over the camera frames, we need to estimate the camera's position relative to the scene. In marker-based AR, this requires knowing where the planar marker is in the frame. We therefore start with marker detection and, once the marker is found, track its position in subsequent video frames. The marker position in the frame is used to calculate the homography transformation matrix, from which we estimate the camera pose with 6 degrees of freedom (6 DoF). With this information, we can render 3D models accurately.
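To make the homography-to-pose step concrete, here is a minimal sketch under stated assumptions: a pinhole camera with known intrinsics and no skew, and a homography that maps marker-plane coordinates to pixel coordinates. All type and function names are illustrative and are not the project's actual code. A production implementation would also re-orthonormalize the rotation, because a noisy homography does not produce an exactly orthogonal matrix.

```typescript
type Vec3 = [number, number, number];
type Mat3 = [Vec3, Vec3, Vec3]; // row-major 3x3

// Assumed pinhole intrinsics (focal lengths and principal point, in pixels).
interface CameraIntrinsics { fx: number; fy: number; cx: number; cy: number; }

interface MarkerPose {
  rotation: Mat3;    // marker-to-camera rotation, row-major
  translation: Vec3; // marker origin in camera coordinates (marker units)
}

const norm = (v: Vec3): number => Math.hypot(v[0], v[1], v[2]);
const scale = (v: Vec3, s: number): Vec3 => [v[0] * s, v[1] * s, v[2] * s];
const cross = (a: Vec3, b: Vec3): Vec3 => [
  a[1] * b[2] - a[2] * b[1],
  a[2] * b[0] - a[0] * b[2],
  a[0] * b[1] - a[1] * b[0],
];

// Computes K^-1 * (column j of H) for K = [[fx,0,cx],[0,fy,cy],[0,0,1]].
function backProjectColumn(k: CameraIntrinsics, h: Mat3, j: 0 | 1 | 2): Vec3 {
  const x = h[0][j], y = h[1][j], w = h[2][j];
  return [(x - k.cx * w) / k.fx, (y - k.cy * w) / k.fy, w];
}

// Uses the planar relation H ~ K [r1 r2 t], valid for points with Z = 0.
function poseFromHomography(h: Mat3, k: CameraIntrinsics): MarkerPose {
  const a1 = backProjectColumn(k, h, 0);
  const a2 = backProjectColumn(k, h, 1);
  const a3 = backProjectColumn(k, h, 2);

  // H is only defined up to scale; r1 and r2 must be unit vectors.
  let lambda = 2 / (norm(a1) + norm(a2));
  // Pick the sign that places the marker in front of the camera (t_z > 0).
  if (a3[2] < 0) lambda = -lambda;

  const r1 = scale(a1, lambda);
  const r2 = scale(a2, lambda);
  const r3 = cross(r1, r2); // third axis completes the right-handed frame
  const t = scale(a3, lambda);

  return {
    rotation: [
      [r1[0], r2[0], r3[0]],
      [r1[1], r2[1], r3[1]],
      [r1[2], r2[2], r3[2]],
    ],
    translation: t,
  };
}
```

The resulting rotation and translation can then be packed into the view matrix of whatever renderer draws the 3D model.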

We have already covered marker-based AR in much more detail in another blog post and described an advanced image-tracking approach for AR applications in the research section of the website.

Let’s now focus on the practical aspects of integrating computer vision algorithms and consider two conceptually different architectures that we implemented in our WebAR project:

- Server-based architecture with the main computations in the cloud

- Completely front-end (serverless) solution that executes algorithms directly on a user device.

Is one preferable to the other? Let’s dive into the details and find out.

Server-Based Architecture for AR

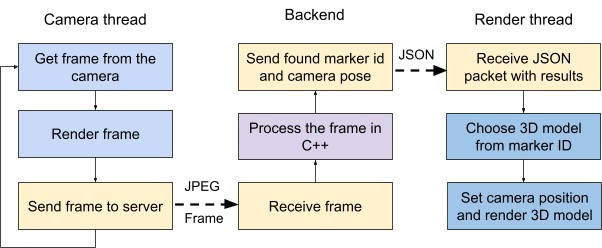

The cloud-based implementation consists of two asynchronous front-end threads plus server-side processing. The camera thread shows the live video stream from the device camera and sends JPEG frames to the backend. The backend processes each frame and returns the ID of the detected marker and the camera pose in JSON format to the render thread. The render thread selects the 3D model that corresponds to that ID and renders it over the camera frames using the Three.js library. The high-level logic of this pipeline is presented in Fig. 1.

Fig.1. Server-based architecture
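The hand-off from the backend to the render thread can be sketched as follows. The response schema here (`markerId`, `rotation`, `translation`) is a hypothetical example, since the actual JSON layout is not described in this post; the point is only that the render thread should validate what it receives before picking a model.

```typescript
// Hypothetical response schema: field names are assumptions for illustration.
interface TrackingResult {
  markerId: string;      // ID of the detected marker
  rotation: number[];    // 9 values, row-major 3x3 camera rotation
  translation: number[]; // 3 values, camera translation
}

// True when `v` is an array of exactly `length` finite numbers.
function isNumberArray(v: unknown, length: number): v is number[] {
  return (
    Array.isArray(v) &&
    v.length === length &&
    v.every((x) => typeof x === "number" && Number.isFinite(x))
  );
}

// Parses one backend response; returns null for malformed or incomplete data
// so that the render thread can simply keep showing the previous pose.
function parseTrackingResult(json: string): TrackingResult | null {
  let data: unknown;
  try {
    data = JSON.parse(json);
  } catch {
    return null;
  }
  if (typeof data !== "object" || data === null) return null;

  const fields = data as Record<string, unknown>;
  const markerId = fields["markerId"];
  const rotation = fields["rotation"];
  const translation = fields["translation"];

  if (typeof markerId !== "string") return null;
  if (!isNumberArray(rotation, 9) || !isNumberArray(translation, 3)) return null;
  return { markerId, rotation, translation };
}

// Hypothetical marker-to-model table used by the render thread.
const modelByMarker = new Map<string, string>([
  ["poster-01", "models/robot.glb"],
]);

// Returns the model path for a parsed result, or undefined for unknown markers.
function modelFor(result: TrackingResult): string | undefined {
  return modelByMarker.get(result.markerId);
}
```

Returning `null` instead of throwing keeps the render loop simple: a dropped or corrupted response just means one frame without a pose update.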

As a server, we used Amazon Web Services (AWS) instances. The computational power of a standard general-purpose server is enough to process frames faster than real time.

However, a poor or unstable internet connection, or a large distance between the user and the server, leads to severe network latency and delays between the threads on the front end.
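A rough way to reason about this effect is to express the round-trip time in camera frames. The sketch below is only back-of-the-envelope arithmetic with a hypothetical function name; the inputs in the usage note are illustrative values, not measurements from our project.

```typescript
// Number of camera frames that pass before a pose computed on the server
// can be rendered, given the full round-trip time (upload + processing +
// download) and the camera frame rate.
function poseLagInFrames(roundTripMs: number, fps: number): number {
  if (roundTripMs < 0 || fps <= 0) {
    throw new Error("roundTripMs must be >= 0 and fps must be > 0");
  }
  const frameIntervalMs = 1000 / fps;
  return Math.ceil(roundTripMs / frameIntervalMs);
}
```

For example, at 30 frames per second an assumed round trip of 150 ms means the rendered pose trails the live video by 5 frames, while a 20 ms round trip fits within a single frame interval.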

Serverless Architecture for AR

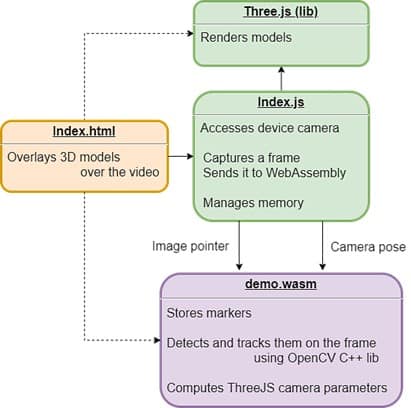

To avoid dependence on the internet connection and potential lags, we introduced a front-end-only solution in which the whole WebAR pipeline runs on the user's device. The primary logic of the previous architecture remains unchanged, but in the serverless scenario the user has to download all files before starting the application.

We modified the C++ code and compiled it to a WebAssembly binary with the Emscripten SDK so that it runs directly in the browser. Emscripten lets the JavaScript side call the compiled C++ functions, and the compiled code generally runs faster than an equivalent JavaScript implementation. When moving to the device, we had to accelerate the computer vision algorithms extensively, both because they are computationally expensive and because of the security limitations that web technologies impose. We managed to optimize them and build a robust real-time AR engine.

Fig.2. Serverless architecture
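Calling compiled C++ from the JavaScript side typically means copying a frame into the WebAssembly heap and passing a pointer. The following is a minimal sketch, assuming the module was built with `_malloc`, `_free`, and `HEAPU8` exported; `_detect_marker` is a hypothetical exported function and its signature is an assumption, not our engine's real interface.

```typescript
// Subset of an Emscripten-generated module used in this sketch.
// `_detect_marker` is hypothetical; the other members follow Emscripten's
// usual naming when they are exported at build time.
interface ArEngineModule {
  HEAPU8: Uint8Array;
  _malloc(size: number): number;
  _free(ptr: number): void;
  _detect_marker(ptr: number, width: number, height: number): number;
}

// Copies one RGBA frame into the WebAssembly heap, runs detection, and
// always releases the buffer, even if the native call throws.
function detectMarker(
  engine: ArEngineModule,
  rgba: ArrayLike<number>,
  width: number,
  height: number,
): number {
  if (rgba.length !== width * height * 4) {
    throw new Error("frame size does not match width * height * 4");
  }
  const ptr = engine._malloc(rgba.length);
  try {
    // Read HEAPU8 after malloc: the heap view may be replaced if memory grows.
    engine.HEAPU8.set(rgba, ptr);
    return engine._detect_marker(ptr, width, height);
  } finally {
    engine._free(ptr);
  }
}
```

In a browser, `rgba` would usually be the `data` array of an `ImageData` object read from a canvas. Allocating once and reusing the buffer across frames would avoid per-frame `_malloc` calls; the version above favors clarity.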

Let’s sum up the advantages and drawbacks of each architecture:

| Server-based: advantages | Server-based: drawbacks | Serverless: advantages | Serverless: drawbacks |
|---|---|---|---|
| 1. Provides better performance and allows running heavy algorithms | 1. Requires a reliable connection | 1. Works without network after loading | 1. Requires optimization of algorithms |
| 2. Supports weak devices | 2. Costly in multiuser usage scenarios | 2. Cheaper for business tasks | |
| | 3. Network latency | 3. No network lags | |

Summary

Both server-based and serverless architectures are suitable for specific computer vision tasks. A server is indispensable for non-real-time applications that require huge computing power, e.g., CNNs for object recognition or segmentation. A pure front-end solution, on the other hand, is a must-have architecture for real-time applications.

Interested? See our research paper on AR on the web for more information.