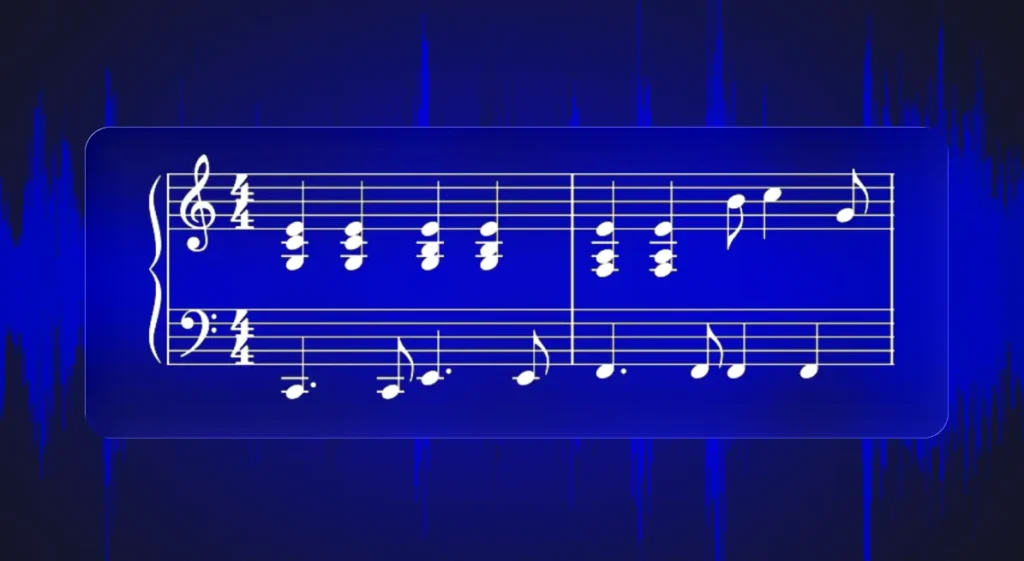

Best Open-Source AI Music Generator 2026

Is there an open-source alternative to Suno in 2026? Every couple of weeks, another open-source AI music generator model launches with a headline claiming Suno...

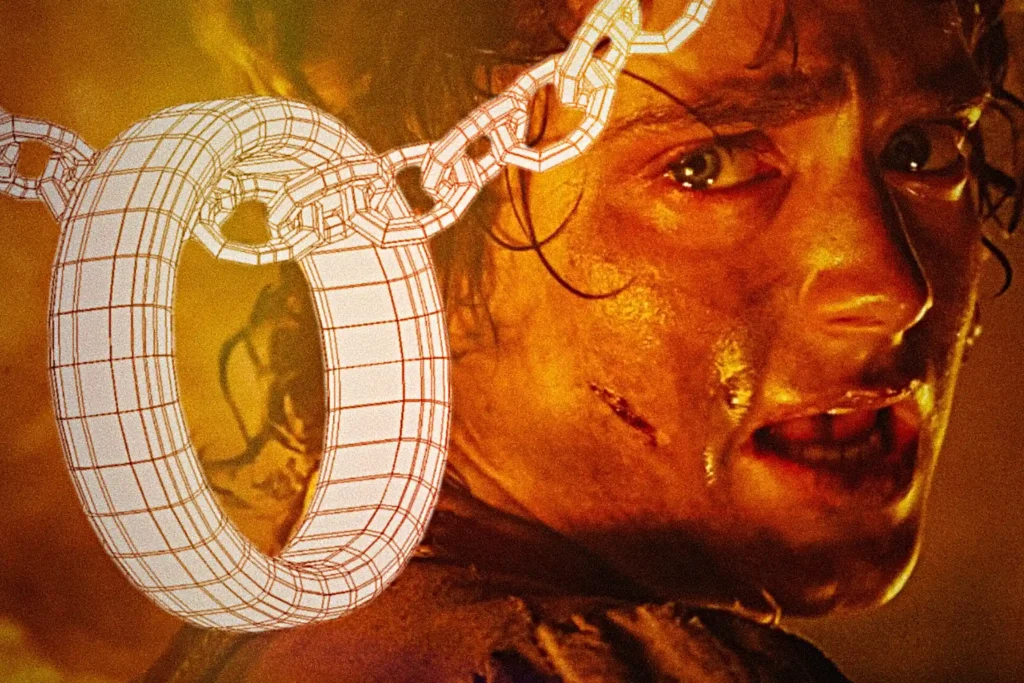

Today, there is almost no digital field where 3D assets haven't found their place: CGI, games, VR/AR, physics simulations, fashion design, marketplace product renders -...

The Moment Audio Caught Up with Vision

If you've ever tapped on a person in an Instagram photo and watched them lift cleanly from the...

Human pose estimation has emerged as a cornerstone technology in next-gen fitness and video analysis applications. For sports startups and developers building AI-powered coaching or...

Wearable fitness trackers and smartwatches have become standard gear for athletes, collecting metrics like heart rate, speed, and distance. However, these devices often fail to...

AI Music Technology Democratizes Creativity

In 2020, Billie Eilish swept the Grammys: Album of the Year, Record of the Year, Song of the Year, Best...

AI Music Tech is Booming

AI music technology is becoming a critical layer in modern digital products. Music education platforms, creative software, rights management systems,...

Segmentation has quietly become one of the most useful tools in modern tech. Whether it's helping doctors analyze medical images, powering AR effects, tracking objects,...

Colors are Clearer Than Ever Before: Constant Color API Review

The human visual system adapts to a wide range of lighting conditions, from warm sunlight...