Apple RoomPlan API Integration for Innovative AR Apps

How to Integrate Apple’s RoomPlan API into Your iOS App: A Comprehensive Guide

Creating a 3D room model has historically been a lengthy, costly, and error-prone process. Real estate managers wasted hours and money hiring experts to make floor plans.

But here’s what changed everything: Apple introduced the RoomPlan API.

Now, users can capture and visualize rooms in 3D using their iOS devices in just minutes and in considerable detail. AR app developers, proptech startups, interior design platforms, e-commerce businesses, and real estate professionals can all benefit from the RoomPlan API.

“When comparing scan dimensions to actual measurements using Apple’s RoomPlan API, they turned out to be accurate enough, with an error usually staying below 5%. This level of precision makes it viable for many professional applications, from interior design to real estate documentation.”

– Oleg Ponomaryov, CTO at It-Jim

This guide walks you through everything you need to know about integrating Apple’s RoomPlan API into your iOS app, namely:

- What is Apple RoomPlan, and how does it work?

- How to integrate Apple’s RoomPlan API into your iOS app.

- Measurable benefits from the RoomPlan API integration.

- Overcoming RoomPlan API limitations with proven workarounds.

- Advanced use cases powered by It-Jim.

Let’s start by understanding how the Apple RoomPlan API works and its core properties.

What is the Apple RoomPlan API & Its Workflow?

Apple’s RoomPlan API is a framework that uses augmented reality (AR) and the LiDAR Scanner on iPhone and iPad to create 3D models of indoor spaces. It is built on top of ARKit, Apple’s framework for building AR apps.

LiDAR stands for Light Detection and Ranging and uses laser light to measure distances. The technology sends out beams and measures how long their reflections take to return. The RoomPlan API in iOS then creates a parametric model that shows the positions and sizes of walls, doors, windows, furniture, and appliances.

RoomPlan provides automatic object recognition, real-time 3D model reconstruction, and easy exports.
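Because RoomPlan depends on the LiDAR Scanner, an app usually needs to check for support before offering a scan. Below is a minimal sketch of such a check. The function name `canScanRooms` is our own illustrative choice; `RoomCaptureSession.isSupported` is the framework property it relies on. The conditional compilation is only there so the snippet also builds on platforms without RoomPlan.

```swift
#if canImport(RoomPlan) && os(iOS)
import RoomPlan
#endif

/// Returns true only when the RoomPlan framework is present
/// and the current device reports that room capture is supported.
/// `canScanRooms` is a hypothetical helper name used for illustration.
func canScanRooms() -> Bool {
    #if canImport(RoomPlan) && os(iOS)
    if #available(iOS 16.0, *) {
        // False on devices without a LiDAR Scanner.
        return RoomCaptureSession.isSupported
    }
    return false
    #else
    // RoomPlan is not available on this platform at all.
    return false
    #endif
}
```

An app could call `canScanRooms()` at launch and hide the scanning entry point, or show a fallback flow, when it returns `false`.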

Here is a list of potential use cases of an AR app containing the Apple RoomPlan API:

- Real estate: create virtual tours of properties and provide accurate floor plans.

- Architecture: preview and change room layouts in real-time for faster design decisions.

- Interior design: visualize how furniture fits in a room and plan renovations.

- Facility management & logistics: plan office layouts or maintenance paths and track space usage in commercial buildings.

- Home repair: estimate material needs for renovating projects and visualize the results.

- Accessibility: help assess room layouts for mobility aids (e.g., wheelchairs) and simulate navigation paths for accessible design compliance.

- Furniture retail: allow customers to visualize furniture in their homes.

- Marketing: create engaging advertisements or digital promotions.

- Insurance: provide accurate documentation of property layouts and valuable items used for insurance underwriting or claims processing.

Also, RoomPlan’s ability to generate accurate 3D models of indoor spaces makes it well-suited for emergency planning, evacuation modeling, and risk assessment in occupational safety contexts.

Thinking about building a custom AR app and integrating Apple’s RoomPlan API for your business?

Whether you’re building AR apps, using the RoomPlan API to visualize spaces, or creating digital property twins, unlock faster, more innovative development. We don’t just use RoomPlan API – we help enhance it with computer vision services. Reach out to ask questions and receive advice.

Why Apple’s RoomPlan Outperforms Traditional Methods

RoomPlan API in iOS outperforms older methods, such as Scene Reconstruction and manual CAD modeling. It offers faster results, better accuracy, and greater accessibility, all from one mobile device. Traditional 3D scanning produces unstructured point clouds or meshes.

In contrast, RoomPlan API generates a semantic understanding of interior spaces. Instead of just capturing shapes, it identifies and categorizes room elements. The API produces this comprehensive room data within minutes.

Additionally, previous methods required extensive technical expertise, specialized equipment, and considerable post-processing time.

How Does the Apple RoomPlan API Work?

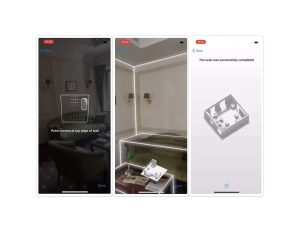

The process for using the RoomPlan API is straightforward: launch the app, follow the steps to scan the room, and review the results shortly thereafter. You can access and edit the 3D room model anytime.

So, how does Apple RoomPlan API work from a technical perspective?

In brief, the API workflow consists of these three main steps:

1. Scanning

The RoomPlan API uses the device’s camera and LiDAR scanner. It captures the environment and identifies key features: walls, windows, doors, and openings.

2. ML Processing

Sophisticated ML algorithms analyze the captured data to identify room features and create a 3D model of the room.

3. 3D Output

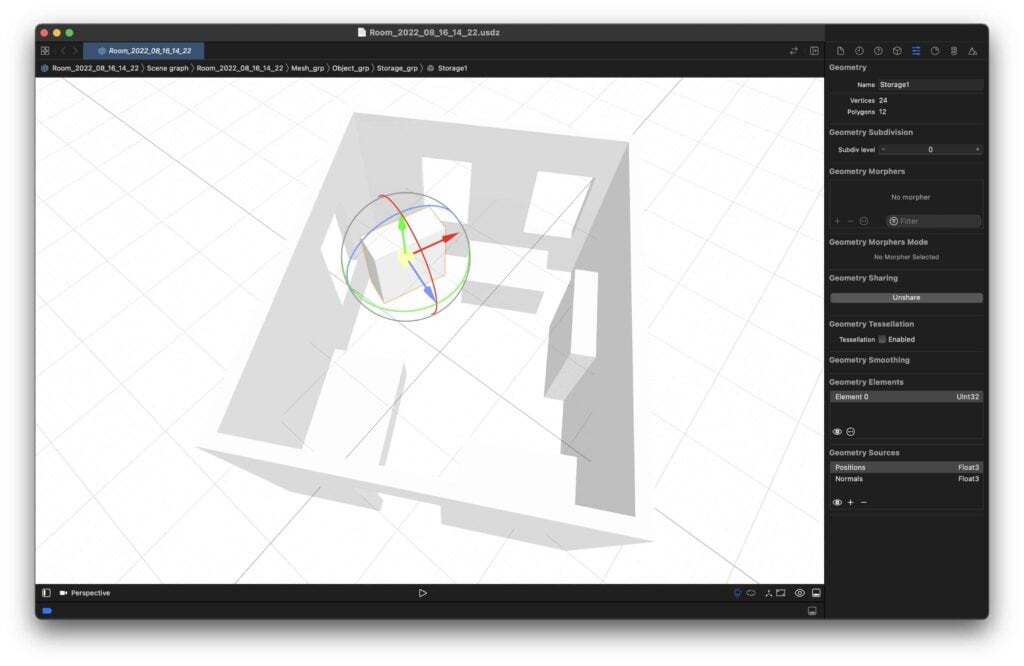

The RoomPlan API in iOS gives results as parametric data. You can export this data in different Universal Scene Description (USD) formats. This property enables developers to easily add 3D models to their apps.

USD is a typical format for AR-based projects. You can edit these files later in tools like AutoCAD, Shapr3D, or Cinema 4D if needed.

RoomPlan API: Data Structure Overview

Apple’s RoomPlan can recognize the following aspects of the captured room:

- Structural elements: walls, doors, windows, openings.

- Furniture: chairs, tables, sofas, beds, storage units.

- Room boundaries: floor plans, room dimensions.

- Spatial relationships: object positioning and room layout connections.

Now, let’s see what data is contained in the 3D model produced by the technology. RoomPlan organizes scanned information into two primary categories: surfaces and objects.

4 Surface Types & Their Data Properties

First, let’s elaborate on surface types, properties, and applicable metrics. RoomPlan identifies four distinct types of surfaces that define a room’s structural boundaries:

- Wall – primary structural boundary.

- Door – entry and exit points.

- Window – light sources and viewing areas.

- Opening – passages without doors.

| Surface Type | Description | Detection Capability | Relevance |
| --- | --- | --- | --- |
| Walls | Vertical structural boundaries | Precise positioning and dimensions | Structural layout for AR navigation and space planning |
| Openings | Doors, windows, passages | Type identification and measurements | Traffic flow analysis and accessibility planning; useful for layout planning and renovation |
| Floor | Horizontal base surface | Area calculation and boundaries | Foundation for furniture placement and room use |
| Furniture | Moveable and built-in objects | Category, size, and spatial relationships | Object interaction and interior design applications |

Each of these surfaces contains a standardized set of six data properties.

| Data Property | Description | Data Type | Notes |
| --- | --- | --- | --- |
| Confidence | Detection reliability | Discrete (Low/Medium/High) | Indicates scan quality |
| Dimensions | Size measurements | Width × Height | Depth always equals 0 (no thickness) |
| Transform | Position and orientation | 4×4 matrix | Standard transformation matrix |
| Normal | Surface direction | 3D vector | Perpendicular to the surface plane |
| Curve | Surface curvature | Variable/nil | Nil for flat surfaces |
| Completed edges | Scan completion status | Set of edges | Tracks user scanning progress |
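To show how these properties can be combined in practice, here is a small sketch that sums wall area while skipping low-confidence detections, for example to estimate paint or wallpaper needs. The types `ScanConfidence` and `WallMeasurement` are hypothetical plain-Swift stand-ins for the data described in the table, not RoomPlan types; in a real app you would fill them from the scan result.

```swift
/// Hypothetical stand-in for the discrete confidence levels (Low/Medium/High).
/// Case order matters: Swift synthesizes `<` from the declaration order.
enum ScanConfidence: Comparable {
    case low, medium, high
}

/// Hypothetical stand-in for one scanned wall: width and height in meters.
struct WallMeasurement {
    let width: Double
    let height: Double
    let confidence: ScanConfidence
}

/// Sums width × height for every wall at or above the given confidence.
func totalWallArea(of walls: [WallMeasurement],
                   minimumConfidence: ScanConfidence = .medium) -> Double {
    return walls
        .filter { $0.confidence >= minimumConfidence }
        .reduce(0.0) { $0 + $1.width * $1.height }
}

let sampleWalls = [
    WallMeasurement(width: 4.0, height: 2.5, confidence: .high),
    WallMeasurement(width: 3.0, height: 2.5, confidence: .low),   // skipped by default
    WallMeasurement(width: 2.0, height: 2.5, confidence: .medium)
]
let paintableArea = totalWallArea(of: sampleWalls)
```

Filtering by confidence is a design choice rather than a requirement: it trades completeness for reliability, so an app may prefer to ask the user to rescan instead of silently dropping walls.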

15+ Objects & Data Properties

Apple RoomPlan API can detect a variety of objects, namely:

- Furniture: bed, chair, sofa, table.

- Kitchen appliances: dishwasher, oven, refrigerator, sink, stove.

- Bathroom objects: bathtub, toilet, washer, dryer.

- Other: fireplace, stairs, storage, television.

Objects share similar properties to surfaces but with key differences.

Property values include confidence, dimensions, and transform.

- 3D Dimensions: Full width × height × depth values.

- Oriented Bounding Boxes: Match object orientation, not world coordinates.

- Spatial Relationships: Position relative to walls and other objects.

Together, the dimensions and the transform define a bounding box around an object. This box is oriented: it follows the object’s own orientation rather than being aligned with the world coordinate axes.

| Data Property | Description | Object vs. Surface Difference |
| --- | --- | --- |
| Confidence | Detection reliability | Same discrete values (Low/Medium/High) |
| Dimensions | Size measurements | 3D values (width × height × depth) |
| Transform | Position and orientation | Defines a non-axis-aligned bounding box |
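The oriented bounding box described above can be reconstructed from the two values alone. The sketch below computes the eight world-space corners of a box from its dimensions and a 4×4 transform. It is an illustration under stated assumptions: `Vec3` and `BoxTransform` are hypothetical plain-Swift types (a real app would use the simd types the framework returns), the matrix is stored column by column with the translation in the last column, and the box is assumed to be centered on the transform’s origin.

```swift
/// Hypothetical 3D vector type, in meters.
struct Vec3: Equatable {
    var x, y, z: Double
}

/// Hypothetical 4x4 transform stored as four columns of four values.
/// columns[0...2] hold the rotated axes, columns[3] holds the translation.
struct BoxTransform {
    var columns: [[Double]]

    /// Transforms a point from the box's local space into world space.
    func apply(_ p: Vec3) -> Vec3 {
        let c = columns
        return Vec3(
            x: c[0][0] * p.x + c[1][0] * p.y + c[2][0] * p.z + c[3][0],
            y: c[0][1] * p.x + c[1][1] * p.y + c[2][1] * p.z + c[3][1],
            z: c[0][2] * p.x + c[1][2] * p.y + c[2][2] * p.z + c[3][2]
        )
    }
}

/// Returns the 8 world-space corners of a box centered on the transform origin.
func boundingBoxCorners(dimensions d: Vec3, transform: BoxTransform) -> [Vec3] {
    var corners: [Vec3] = []
    for sx in [-0.5, 0.5] {
        for sy in [-0.5, 0.5] {
            for sz in [-0.5, 0.5] {
                // Each corner sits half a dimension away from the center.
                let local = Vec3(x: sx * d.x, y: sy * d.y, z: sz * d.z)
                corners.append(transform.apply(local))
            }
        }
    }
    return corners
}

// A 2 × 1 × 0.5 m box, rotated 90° around the vertical axis, centered at (3, 0, 4).
let rotatedBox = BoxTransform(columns: [
    [0, 0, -1, 0],
    [0, 1, 0, 0],
    [1, 0, 0, 0],
    [3, 0, 4, 1]
])
let corners = boundingBoxCorners(dimensions: Vec3(x: 2, y: 1, z: 0.5),
                                 transform: rotatedBox)
```

After the rotation, the box’s 2 m side runs along the world z-axis instead of the x-axis, which is exactly why an axis-aligned box would overestimate the space a rotated sofa or table occupies.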

As shown above, the RoomPlan API can detect and visualize many elements. By default, detected objects are visualized as plain boxes; however, you can replace them with real furniture models to make the scan more detailed and valuable.

This structured method makes RoomPlan data ideal for creating top-notch AR apps, architectural analysis, and automated space planning.

Want to explore how the RoomPlan API can transform your project?

Let’s build a solution that goes beyond the RoomPlan API’s standard features and addresses its limitations. Our custom solutions use advanced computer vision algorithms to enhance object recognition and layout accuracy, especially in cluttered or irregular rooms.

Getting Started with the Apple RoomPlan API: Tech Perspective

Developers can seamlessly use the RoomPlan API in their iOS apps using one of two approaches:

- Basic integration: add a RoomPlan API with minimal effort and without customization. Users will interact with the built-in solution experience only.

- Advanced integration: gain complete control over scanning parameters and real-time data processing. From this perspective, users can build detailed room plans and edit specific elements as needed.

The easiest way to integrate Apple’s RoomPlan API into your iOS app is to use the default RoomCaptureView, which can be added in a Storyboard.

RoomPlan follows a clear component hierarchy:

- The RoomCaptureView handles all visualizations and interactions with the end user.

- A user scans the room with RoomCaptureSession, accessed via the corresponding view’s property.

- The RoomCaptureSession itself utilizes the standard ARSession from ARKit.
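The hierarchy above can be sketched in a single view controller. This is a minimal illustration of the basic integration, not a complete app: it creates the `RoomCaptureView` in code rather than in a Storyboard, and the class name `RoomScanViewController` and the `finalResult` property are our own hypothetical names. It needs a LiDAR-equipped device to run, so the code is wrapped in a platform check.

```swift
#if canImport(RoomPlan) && canImport(UIKit) && os(iOS)
import UIKit
import RoomPlan

@available(iOS 16.0, *)
final class RoomScanViewController: UIViewController, RoomCaptureViewDelegate {
    private var roomCaptureView: RoomCaptureView!

    /// The processed 3D model, available once the scan has finished.
    private(set) var finalResult: CapturedRoom?

    override func viewDidLoad() {
        super.viewDidLoad()
        // The view handles all visualization and user interaction.
        roomCaptureView = RoomCaptureView(frame: view.bounds)
        roomCaptureView.autoresizingMask = [.flexibleWidth, .flexibleHeight]
        roomCaptureView.delegate = self
        view.addSubview(roomCaptureView)
    }

    override func viewDidAppear(_ animated: Bool) {
        super.viewDidAppear(animated)
        // The session is reached through the view's property.
        let configuration = RoomCaptureSession.Configuration()
        roomCaptureView.captureSession.run(configuration: configuration)
    }

    override func viewWillDisappear(_ animated: Bool) {
        super.viewWillDisappear(animated)
        roomCaptureView.captureSession.stop()
    }

    // Return true to let RoomPlan post-process the raw scan data.
    func captureView(shouldPresent roomDataForProcessing: CapturedRoomData,
                     error: Error?) -> Bool {
        return true
    }

    // Called with the final parametric model after processing.
    func captureView(didPresent processedResult: CapturedRoom, error: Error?) {
        finalResult = processedResult
    }
}
#endif
```

In a production app you would typically add a "Done" button that calls `stop()` on the session, handle the `error` parameters, and add the camera usage description to the app’s Info.plist.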

You might also find it interesting to read:

SDK for Augmented Reality Applications

How Do AR Solutions Benefit from RoomPlan API?

When building AR apps and using the RoomPlan API, focus on how to streamline your business processes.

According to Statista, revenue in the AR & VR market is expected to reach $46.6 billion in 2025. Companies are investing in AR technology because customers want immersive and interactive experiences in the services they use.

Integrating Apple RoomPlan into your app improves development and user experience in several key ways:

- Reduce software development time by more than 50% with this unique iOS technology.

- Generate 3D floor plans in under 2 minutes.

- Offer intuitive room capture in real estate, interior design, or AR apps.

- Cut the need for specialized 3D modeling expertise on your team.

“RoomPlan integration cuts our MVP development cycle from 4 months to 6 weeks. We could focus on user experience and advanced functionality instead of building a scanning solution from scratch.”

– Yurij Gapon, Head of iOS at It-Jim

We can help you test the RoomPlan API integration in your project and ensure accuracy in real-world conditions, such as varying lighting and complex furniture setups.


You can also discover our

3D Computer Vision Services

The true strength of using the Apple RoomPlan API is not only in its scanning features. It also provides valuable business insights.

Here’s how RoomPlan translates technical capabilities into competitive advantages:

1. Faster Time to Market

Scan-based 3D room models eliminate the need for manual drawing, significantly speeding up product release timelines.

Teams can now iterate and deploy features in days instead of waiting weeks for professional architectural drawings. There is no need for CAD programs or similar tools, or for hiring an external expert to take measurements.

2. Improved App Experience

Integrating RoomPlan into your iOS app offers an immersive AR experience with engaging, personalized interactions. These experiences feel real because they fit the user’s actual environment, not just a theoretical space.

Users interact with real-world spatial data instead of static, generic layouts. Planning renovations, arranging furniture, or evaluating properties all become easier and more intuitive.

3. Optimized Processes

RoomPlan API streamlines floor plan creation by automating the process, reducing manual effort, and minimizing errors. It’s a valuable tool for professionals who need fast, reliable, and accurate results.

4. Data-Rich Outputs

RoomPlan output provides detailed object metadata, including:

- Type classification.

- Precise positioning.

- Accurate dimensions.

- Spatial relationships.

This structured data can be fed directly into analytics pipelines, AI training datasets, or modeling applications with little or no extra processing.

5. Ready-to-Export Formats

The API provides seamless workflow integration with various export options:

- USDZ files for AR apps.

- Structured JSON for BIM/CAD tools.

- Standard formats for cross-platform use.
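The export options above can be sketched as follows. This is an illustration under assumptions: `writeJSON` and `exportScan` are hypothetical helper names and the file names are placeholders. The JSON part relies on the scan result being an `Encodable` value, so the helper is written generically and also works with any other `Encodable` type; the USDZ part uses the scan result’s own `export(to:)` method and only compiles where RoomPlan is available.

```swift
import Foundation
#if canImport(RoomPlan) && os(iOS)
import RoomPlan
#endif

/// Writes any Encodable value to disk as human-readable JSON.
func writeJSON<T: Encodable>(_ value: T, to url: URL) throws {
    let encoder = JSONEncoder()
    encoder.outputFormatting = [.prettyPrinted, .sortedKeys]
    let data = try encoder.encode(value)
    try data.write(to: url)
}

#if canImport(RoomPlan) && os(iOS)
/// Saves a finished scan both as a USDZ model and as structured JSON.
@available(iOS 16.0, *)
func exportScan(_ room: CapturedRoom, to folder: URL) throws {
    // 3D model for AR viewers and 3D editing tools.
    try room.export(to: folder.appendingPathComponent("Room.usdz"))
    // Parametric data (surfaces, objects, dimensions, transforms) for other pipelines.
    try writeJSON(room, to: folder.appendingPathComponent("Room.json"))
}
#endif
```

Keeping the JSON writer generic means the same code path can serialize your own derived data, such as computed floor areas, next to the raw scan.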

Building AR Apps with Apple RoomPlan: It-Jim Experience

Our team has rolled out the RoomPlan API across various industries, helping businesses tackle spatial computing challenges in innovative ways.

Here are proven applications that we helped to implement:

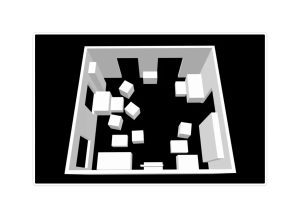

Architecture: 3D Floor Plan Layout

Project Focus: The goal was to create precise 2D floor plans and 3D layouts of real spaces with actual dimensions.

Solution: A system captures spatial data from iPhone LiDAR scans. Then, it creates 3D models and scaled 2D floor plans.

Result: A mobile app turns spaces into digital layouts in minutes. This outcome saves architects and real estate professionals a whole day of manual work.
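As a rough illustration of how scanned walls can become a 2D floor plan, the sketch below projects one wall onto the floor plane as a line segment. It is a generic technique, not a description of the production system. `Point2D` and `WallPose` are hypothetical types, and the sketch assumes that the wall’s transform is stored column by column, that its local x-axis runs along the wall’s width, and that the world y-axis points up.

```swift
/// A point on the floor plane, in meters (world x and z; y is up and is dropped).
struct Point2D: Equatable {
    var x: Double
    var z: Double
}

/// Hypothetical stand-in for one scanned wall.
struct WallPose {
    /// Wall width in meters.
    var width: Double
    /// 4x4 transform as four columns: columns[0] is the local x-axis,
    /// columns[3] is the wall center in world space.
    var columns: [[Double]]
}

/// Projects a wall onto the floor plane as a segment from one end to the other.
func floorPlanSegment(for wall: WallPose) -> (start: Point2D, end: Point2D) {
    let axis = wall.columns[0]
    let center = wall.columns[3]
    let half = wall.width / 2
    let start = Point2D(x: center[0] - axis[0] * half,
                        z: center[2] - axis[2] * half)
    let end = Point2D(x: center[0] + axis[0] * half,
                      z: center[2] + axis[2] * half)
    return (start, end)
}

// A 4 m wall centered at (1, 0, 2) and running along the world x-axis.
let straightWall = WallPose(width: 4, columns: [
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [1, 0, 2, 1]
])
let segment = floorPlanSegment(for: straightWall)
```

Running this for every wall yields a set of segments that can be drawn to scale; doors, windows, and openings can be projected the same way and overlaid on their parent walls.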

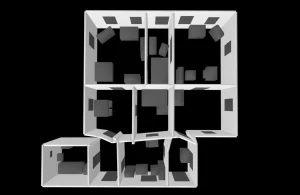

Solution for Real Estate

Challenge: A client asked our team to enhance their prototype, which was built with the Apple RoomPlan API, and to help improve the app’s functionality.

Solution: We developed a tool that creates 3D room models to help with buying and selling real estate properties.

Furniture Fitting AR App

Challenge: Customers struggled to see how furniture would look in their own spaces. This aspect caused high return rates and unhappy customers.

Solution: We developed an AR app using the Apple RoomPlan API, which enables users to place furniture in real-time with precise spatial awareness. Users scan their room once, then virtually place items with confidence in scale and fit.

“After It-Jim added RoomPlan to our property management platform, we cut manual surveying costs by 70% and improved accuracy. Property listings now include interactive 3D models generated in minutes, not days.”

– Feedback from our client.

Therefore, RoomPlan API integration makes a solid investment. The technology offers businesses chances to stand out. It helps them create a better user experience and visual materials with less effort.

Have an AR-based project concept in mind and want to use the RoomPlan API?

Let’s build it together with Apple RoomPlan technology and advanced computer vision expertise. We have a proven track record of implementing the RoomPlan API in iOS apps across diverse industries, and we help you turn powerful technology into real-world solutions that deliver results.

Book a call to discuss the implementation strategy.

Overcoming Apple’s RoomPlan API Limitations

Note that RoomPlan is still a new API. Some things may change, and issues might get fixed in future updates.

However, RoomPlan delivers useful but not perfectly accurate results. Measurements and object positions may have minor errors, which is acceptable for quick scans but not reliable enough for precision-oriented tasks.

Since our team has extensive experience with the RoomPlan API, we’ve identified its key limitations and know how to work around them.


How we work with existing API limitations, read also:

RoomPlan is Awful, and it’s Great!

For example, the RoomPlan framework has significant constraints, including the requirement for rectangular simplifications. The system attempts to reduce all objects and surfaces to a set of rectangles.

Additionally, the technology does not capture data from ceilings or skylights.

The current version of the RoomPlan API in iOS has several constraints, such as:

- Limited object recognition – detects only a fixed set of common household items (e.g., chairs, tables, sofas). It does not identify less typical objects, such as water boilers or industrial equipment.

- Struggles with multiple or large rooms – not designed for scanning numerous or very large spaces in one go. Apple recommends a maximum of about 9×9 m (30×30 ft). Longer scans degrade tracking accuracy, risk overheating, and may lead to drift.

- Measurement errors – measurements can drift, with errors of up to ±5 cm per wall.

- Incorrect wall thickness – models all walls with a uniform thickness of around 16 cm, regardless of real measurements. Exterior walls are always that thin; structures over ~50 cm break into two separate thin walls.

- Door and window flaws – merges double doors or door-window combinations incorrectly.

- Mirrored surface issues – large mirrors and mirrored wardrobes can confuse LiDAR, leading to missing geometry or phantom objects.

- Surface shape limitations – assumes surfaces are rectangular or slightly curved, so it misrepresents angled walls, arched openings, or detailed trim.

- Phantom (ghost) geometry – occasionally “sees” surfaces or objects that don’t exist; LiDAR noise can lead to phantom walls or objects.

- No Ceiling or skylight capture – does not capture ceilings or skylights, making it unsuitable for tasks requiring lighting design or accurate volume measurements.
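Two of the figures above, the ±5 cm per-wall error and the roughly 9×9 m size recommendation, are easy to turn into quick sanity checks. The sketch below is a back-of-the-envelope illustration using those numbers as defaults; the function names are hypothetical, and the real error of a given scan depends on the room and scanning conditions.

```swift
/// Worst-case relative error (in percent) for a wall of the given length,
/// assuming a fixed absolute error in meters (default: 0.05 m, i.e. 5 cm).
func worstCaseRelativeError(wallLength: Double,
                            absoluteError: Double = 0.05) -> Double {
    precondition(wallLength > 0, "Wall length must be positive")
    return absoluteError / wallLength * 100
}

/// Checks a room footprint against the recommended maximum side length in meters.
func fitsRecommendedScanSize(width: Double,
                             depth: Double,
                             limit: Double = 9.0) -> Bool {
    return width <= limit && depth <= limit
}

let shortWallError = worstCaseRelativeError(wallLength: 1.0)   // about 5 %
let longWallError = worstCaseRelativeError(wallLength: 4.0)    // about 1.25 %
let livingRoomFits = fitsRecommendedScanSize(width: 6.0, depth: 4.5)
let warehouseFits = fitsRecommendedScanSize(width: 20.0, depth: 12.0)
```

The takeaway is that a fixed absolute error weighs more heavily on short walls: 5 cm is about 5% of a 1 m wall but only about 1.25% of a 4 m wall, which helps explain why typical room-scale measurements stay under the 5% mark.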

While powerful, RoomPlan isn’t perfect in every environment. With years of vision-based R&D, we know how to work with these and other limitations.

Apple continuously improves RoomPlan API capabilities with each iOS platform update. Our AI iOS development services and approach account for this evolution.

“We are leveraging new RoomPlan features as they’re released. Our clients enjoy Apple’s upgrades while keeping current features intact. This is the benefit of working with a team that knows the framework’s roadmap and even beyond.”

– Yurij Gapon, Head of iOS at It-Jim

Conclusion: Is Apple’s RoomPlan API Right for Your Project?

Apple’s RoomPlan API simplifies the creation of accurate 3D room models. You can use it with a LiDAR-enabled iPhone or iPad.

It simplifies floor plan creation, reduces errors, and enhances app features across various industries, namely:

- Real Estate: virtual property tours and instant floor plan generation.

- Interior Design: AR-powered furniture placement, estimating materials, and space planning.

- Retail: store layout optimization and virtual showrooms.

- Property Management: digital twin creation and facility maintenance.

- Architecture: rapid as-built documentation and renovation planning.

- and much more.

Its ability to quickly generate accurate room layouts also makes it a valuable asset for broader AR applications. The technology is excellent for:

- Fast residential room scans within ~9×9 m.

- Quick, parametric 3D models and object layouts.

What’s Next: The RoomPlan API is still new, but updates will improve its accuracy and stability.

In the future, we can look forward to improvements such as support for non-rectangular surfaces, scanning multiple rooms, and better detection of floors and ceilings. These upgrades will expand the capabilities of what we can achieve with spatial understanding in AR.

Please note: The technology is not suitable for high-precision needs, complex structural analysis, industrial settings, or multi-floor scanning.

Ready to advance your business with the RoomPlan API and computer vision?

We help you benefit from the RoomPlan API. We do this by testing, customizing, and integrating it into your iOS app. Contact our team to share your needs and find out how we can speed up your RoomPlan implementation.